Nutrition has an impact on human growth at every stage of the life cycle, and few aspects of the human environment have changed as much in the last 10,000 years as diet. It is therefore not surprising that there have also been changes in growth rates and outcomes. During pregnancy, for example, a woman’s diet can have a profound effect on the development of her fetus and the eventual health of the child. Moreover, the effects are transgenerational, because a woman’s own supply of eggs is developed during her own fetal development. So if a woman is malnourished during pregnancy, the eggs that develop in her female fetus may be damaged in a way that affects her future grandchildren’s health. And even if a baby girl whose mother was malnourished during pregnancy is well nourished from birth on (as often happens in adoptions), her growth, health, and future pregnancies appear to be compromised—a legacy that may extend for several generations (Kuzawa, 2005). Furthermore, nutritional stress during pregnancy commonly results in low-birth-weight babies, which are at great risk for developing hypertension, cardiovascular disease, and diabetes later in life (Barker, 1994; Gluckman and Hanson, 2005). Low-birth-weight babies are particularly at risk if they are born into a world of abundant food resources (especially cheap fast food) and gain weight rapidly in childhood (Kuzawa, 2005, 2008). These findings have clear implications for public health efforts that attempt to provide adequate nutritional support to pregnant women and infants throughout the world.

Nutrients needed for growth, development, and body maintenance include proteins, carbohydrates, lipids (fats), vitamins, and minerals. The specific amount that we need of each of these nutrients coevolved with the types of foods that were available to our ancestors throughout our evolutionary history. For example, the specific pattern of amino acids required in human nutrition (the essential amino acids) reflects an ancestral diet high in animal protein. We share with many other primates a dependence on dietary sources of organic nutrients such as vitamin C, reflecting a long history of consumption of fruits and other plant parts. In other words, our need for vitamin C coevolved with a diet high in that nutrient. Unfortunately for modern humans, these coevolved nutritional requirements are often incompatible with the foods that are available and typically consumed today. To understand this mismatch of our nutritional needs and contemporary diets, we need to examine the impact of agriculture on human evolutionary history.

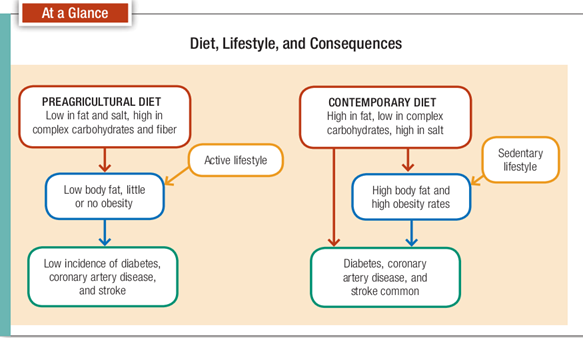

The preagricultural diet, basically encompassing the entirety of our evolutionary history prior to 10,000 years ago, was typically high in animal protein, but it was probably low in fats, particularly saturated fats. That diet was also most likely high in complex carbohydrates (including fiber), low in salt, and high in calcium. We don’t need to be reminded that the contemporary diet typifying many industrialized societies has the opposite configuration of the one just described. It’s high in saturated fats and salt and low in complex carbohydrates, fiber, and calcium (Fig. 16-4). There’s very good evidence that many of today’s diseases in industrialized countries are related to the lack of fit between our diet today and the one with which we evolved (Gluckman et al., 2009).

Along with agriculture and animal domestication came a number of “new” food types that are important and common today but were rare or nonexistent in ancestral diets. Two examples include dairy products and cereal grains. In a previous chapter we discussed the difficulty that some people have digesting dairy products because they lack the enzyme necessary for breaking down the milk sugar lactose. Others have difficulty digesting the gluten found in some cereal grains, most commonly wheat and its close relatives. Both lactose intolerance and gluten intolerance are more common in populations that have only recently adopted milk products and cereal grains into their diets (Wiley, 2008). The introduction of cattle and grains into early agricultural populations may have increased food availability for many people, but for some people, specific foods that were not part of their ancestral diets are mismatched with their bodies in ways that lead to diarrhea and gastrointestinal upset. Unfortunately, food aid programs originating in parts of the world where milk and grains are staples sometimes have negative impacts on the malnourished populations that they target.

Although we might reasonably expect that nutrition and health would have improved with the development of agriculture, human health actually declined in most parts of the world beginning about 10,000 years ago. Some have referred to the changing patterns of disease that occurred with the development of agriculture as an “epidemiological transition,” marked by the rise of infectious and nutritional deficiency diseases. In many places, skeletal signs of malnutrition (for example, iron deficiency anemia) (Fig. 16-5) appear for the first time with domesticated crops like corn (Cohen and Armelagos, 1984; Larsen, 2002). Life expectancy also appears to have dropped. Clark Larsen refers to the adoption of agriculture as an “environmental catastrophe” (Larsen, 2006), and Jared Diamond has called it the worst mistake humans ever made (Diamond, 1987). But whether for better or worse, we’re stuck with agriculture as a way of acquiring food because without agriculture the planet couldn’t possibly support the billions that live on it today.

It’s clear that both deficiencies and excesses of nutrients can cause health problems and interfere with childhood growth. Certainly many people in all parts of the world, both industrialized and developing, suffer from inadequate supplies of food of any quality. We read daily of thousands dying from starvation due to drought, warfare, or political instability. The blame must be placed not only on the narrowed food base that resulted from the emergence of agriculture but also on the increase in human population that occurred when people began settling in permanent villages and having more children. Today, the crush of billions of humans almost completely dependent on cereal grains (Cordain, 1999) means that millions face undernutrition, malnutrition, and even starvation. Even with these huge populations, however, food scarcity may not be as big a problem as food inequality. In other words, there may be enough food produced for all the people on earth, but economic and political forces keep it from reaching those who need it most. Of increasing concern are the effects of globalization (including liberalization of trade and agricultural policies) on food security, especially in developing nations and what has become known as the Global South. In particular, the adoption of Western diets and lifestyles has contributed to declining health in much of the world (Young, 2004).

Thirty years ago, the primary focus of international health and nutrition organizations, including the WHO, was undernutrition and infectious diseases (Prentice, 2006). Today, more and more attention is focused on overnutrition and the diseases and disorders associated with obesity. By 2006, the number of people in the world who were overweight exceeded the number who were malnourished and underweight (Popkin, 2007). In many countries, including the United States, more than half of the population are overweight or obese; Mexico has the highest rate, at almost 70%, with the United States not far behind. Clearly diets for many people are mismatched with the nutrients required for healthy bodies.

In summary, our nutritional adaptations were shaped in environments that included times of scarcity alternating with times of abundance. The variety of foods consumed was so great that nutritional deficiency diseases were rare. Small amounts of animal foods were probably an important part of the diet in many parts of the world. In northern latitudes, after about 1 mya, meat was an important part of the diet. But because meat from wild animals is low in saturated fats, the negative effects of high meat intake that we see today were rare. Our diet today is often incompatible with the adaptations that evolved in the millions of years preceding the development of agriculture. The consequences of that incompatibility include both starvation and obesity (Fig. 16-6).

Case Study: Diabetes

Perhaps no disorder is as clearly linked with dietary and lifestyle behaviors as diabetes. There are actually two different diseases referred to as diabetes. One, the less common, is type 1 diabetes, also called insulin-dependent diabetes mellitus (IDDM), or juvenile-onset diabetes, which occurs when the immune system interferes with the body’s ability to produce the insulin that converts sugars (glucose) to energy. This type of diabetes is usually first recognized in childhood and requires lifelong insulin injections to avoid cell damage and death. It’s unlikely that children with type 1 diabetes lived very long in the past. Type 2 diabetes, also called noninsulin-dependent diabetes mellitus (NIDDM), is far more common today and has to do with the way insulin is used in the body. Sometimes this is described as “insulin resistance” in that sufficient insulin may be produced but the cells are not able to utilize it. This inability results in a buildup of glucose in the bloodstream that can cause a number of complications of the cardiovascular system, kidneys, and nervous system. If it is not treated (usually with dietary changes, weight control, and exercise), type 2 diabetes can also result in early death.

A few years ago, type 2 diabetes was something that happened to older people living primarily in the developed world. Sadly, this is no longer true. The World Diabetes Foundation estimates that 80 percent of the new cases of type 2 diabetes that appear between now and 2025 will be seen in developing nations, and the World Health Organization (WHO) predicts that more than 70 percent of all diabetes cases in the world will be in developing nations in 2025. Furthermore, type 2 diabetes is now seen in children as young as 4 years of age (Pavkov et al., 2006), and the mean age of diagnosis in the United States dropped from 52 to 46 between 1988 and 2000 (Koopman et al., 2005). In fact, it is likely that almost everyone reading this book has a friend or family member who has diabetes. What’s happened to make this former “disease of old age” and “disease of civilization” reach what some have described as epidemic proportions?

Although there appears to be a genetic link (type 2 diabetes tends to run in families), most fingers point to lifestyle factors. Two lifestyle factors that have been implicated in this epidemic are poor diet and inadequate exercise. Noting that our current diets and activity levels are very different from those of our ancestors, proponents of evolutionary medicine suggest that diabetes is the price we pay for consuming excessive sugars and other refined carbohydrates while spending our days in front of the TV set or computer monitor. The reason that the incidence of diabetes is increasing in developing nations is that these bad habits are spreading to those nations. In fact, we may soon see what can be called an “epidemiological collision” in countries such as Zimbabwe, Ecuador, and Haiti, where malnutrition and infectious diseases are rampant but obesity is on the rise, so that people are dying not only from diseases of poverty but also from those more characteristic of wealthier populations (Trevathan, 2010).