▶ Explain where the first inhabitants of the New World came from and when they arrived.

▶ Identify and contrast the major cultural changes that accompanied the end of the Ice Age in the Americas and the Old World.

▶ Explain why it is unlikely that Paleo-Indian hunters caused widespread extinctions of Pleistocene megafauna at the end of the Ice Age.

- What are the specific biological and cultural clues that point to an Asian ancestry for the earliest American populations? How convincing do you find the evidence?

- What are some of the significant environmental changes associated with the end of the Pleistocene? Which of these changes would have most affected humans? Do you see parallels with climate changes today?

- Were Paleo-Indians primarily responsible for the extinction of Pleistocene animals? What archaeological evidence supports your view?

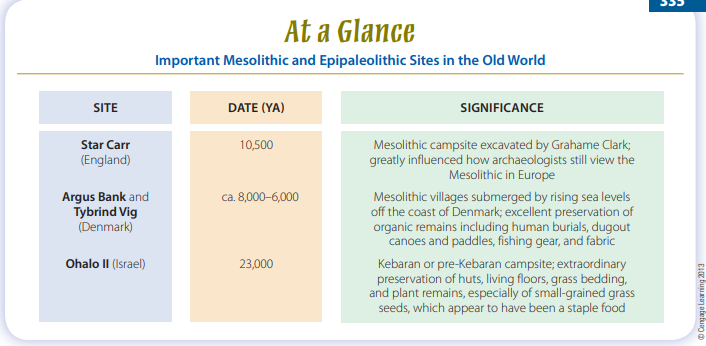

- Contrast Mesolithic cultural adaptations of northern Europe, as represented at Star Carr and Argus Bank, with those of the Near East, as represented by the Kebarans and Natufians in the Levant. What important factors explain the differences between Mesolithic lifeways in northern Europe and the Levant?

- In what ways are foragers different from collectors? What are some of the economic and biocultural implications of each of these subsistence strategies?

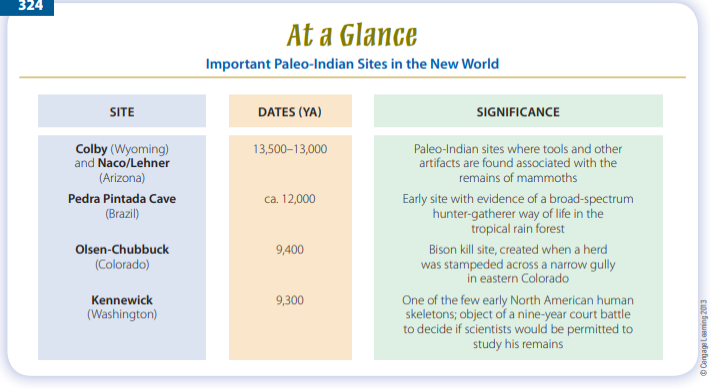

During the summer of 1996, two men found a human skull and other bones along the muddy shore of the Columbia River near Kennewick, Washington. They reported the discovery to the police and coroner, who in turn asked James Chatters, a forensic anthropologist and archaeologist, to examine the remains and provide an initial assessment. The results suggested that they were probably dealing with a Caucasian male in his mid-40s, but one who looked thousands of years old. When a CAT scan also showed a large stone spear point embedded in the man’s hip, Chatters and others understandably wondered just how old this skeleton was (Chatters, 2001). Bone samples sent for radiocarbon dating returned an early Holocene age estimate of roughly 9,300 ya. Instead of explaining this man’s past, the analyses just added to the mystery. Who was this guy?

“Kennewick Man,” as he was soon called, became the center of an extraordinary controversy, one that was more legal than scientific; it took nine years and more than $8.5 million of taxpayers’ money to sort out the case in federal courts (Dalton, 2005). It involved a swarm of attorneys, the U.S. Army Corps of Engineers, the U.S. Department of the Interior, several Native American tribal groups, a handful of internationally known anthropologists, a Polynesian chief, and several federal judges, decisions, and appeals. Several important legal questions were ultimately at issue, not the least of which was the right of the American public to information about the distant past (Bruning, 2006; Malik, 2007). At stake on the scientific side of the picture was what could be learned from the physical remains of a person who lived during the early days of the human presence in North America, when few people were spread over this huge continent. So few human skeletons (currently fewer than 10 individuals) are known in North America from this period that each new one, like Kennewick Man, is a major discovery that potentially opens a fresh window on the prehistory of the continent.

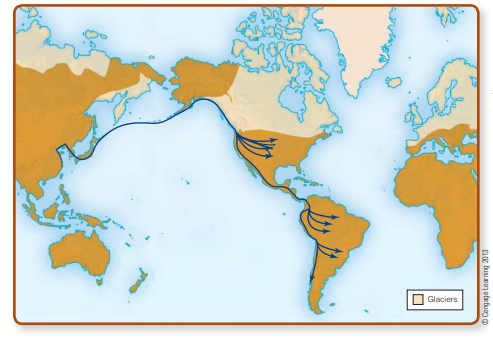

As we noted in Chapter 12, men, women, and children made the first footprints in New World mud sometime between 30,000 and 13,500 ya. The genetic evidence suggests that this most likely happened after about 16,500 ya (Goebel et al., 2008). With their first steps, they expanded the potential range of our species by more than 16 million square miles, spanning two continents and countless islands, or roughly 30 percent of the earth’s land surface. It was a big deal, comparable in scope and significance to the dispersal of the first hominins into Europe and Asia from Africa during the Lower Paleolithic.

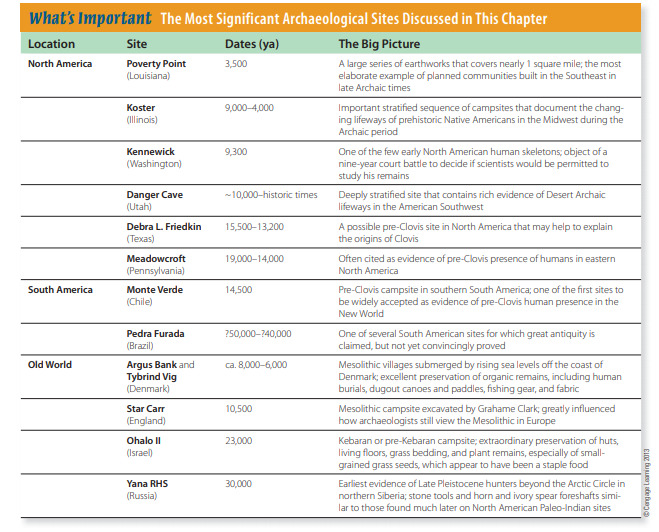

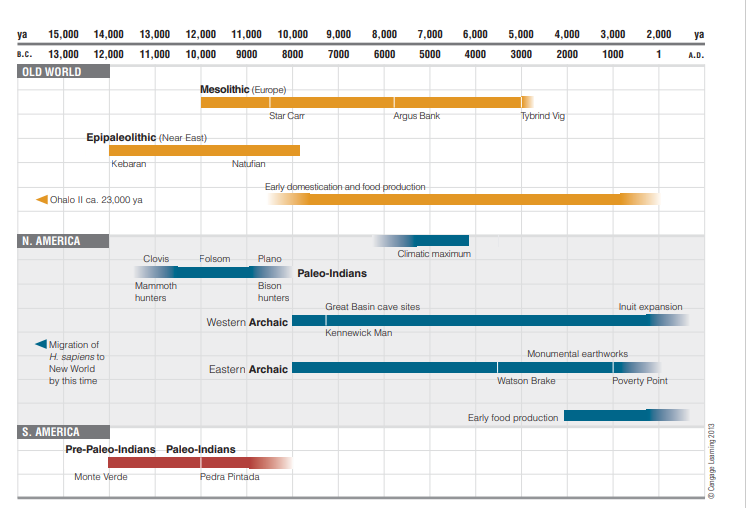

In this chapter, we’ll consider the story of these first New World inhabitants and also begin to look at the major cultural developments of roughly the past 10,000 years (Fig. 13-1), during which the pace of cultural change worldwide increased dramatically. Following the end of the last Ice Age, the world’s modest human population of perhaps a few tens of millions sustained itself by hunting and gathering food, living in small groups, constructing humble shelters, and making use of effective but simple equipment crafted from basic natural materials. Obviously, quite a lot has happened since then! What is important to note is that these changes are not primarily the result of human physical evolution, which has played a decreasing role in human changes during the short span since the end of the Pleistocene (see Chapters 4 and 5). Rather, the most radical developments affecting the human condition continue to be the consequences of our uniquely human biocultural evolution, mostly stimulated by cultural innovations and the inescapable effects of our ever-growing population.

Let’s begin our look at human communities at the end of the Ice Age by examining the archaeological and biological clues relating to the origins of the first Americans and reviewing the evidence indicating not only when they first arrived but also what cultural adjustments they made in their new homeland. We’ll then explore how lifeways changed for human groups in both the New and Old Worlds as the glaciers retreated, sea levels rose, plant and animal communities migrated, and even such seemingly permanent entities as rivers and lakes became transformed in a postglacial world.

Entering the New World

Major archaeological problems, such as the entry of the first humans into the New World, inevitably attract a lot of research interest, not to mention a little contentious debate (e.g., Waguespack, 2007). When did the first humans arrive in the Americas? Where did they come from? How did they get here? How did they make a living after they arrived? We want firm answers to these questions, but, as with all research that centers on “first” events, finding the answers is never easy. Consider, for example, the crucial question of when the first humans arrived in the New World.

Strictly speaking, to answer this question we need to identify the locality, somewhere in the 16 million square miles of two continents, where the oldest material evidence of the presence of humans is preserved in well-dated archaeological contexts. It’s as though we’re trying to find a particular sand grain that may or may not be on a beach.

Once you understand the difficulty of finding “the” answer, it’s easy to see why the question is likely to remain with us for a while. When people arrived is necessarily tied up with where they came from because the two, taken together, tell us where to search in the archaeological record for these first New World immigrants. There’s general consensus that the Late Pleistocene marked something of a watershed in human prehistory.

For millions of years, continental glaciers waxed and waned while humans evolved from ancestral primates. This activity meant little to our remote ancestors because glaciation primarily affected the higher latitudes, and early hominins lived in the tropics, where relatively few effects of such climatic changes reached them. But by Late Pleistocene times, during the Upper Paleolithic of Europe and the Late Stone Age of Africa, modern humans had long ago pushed well into the temperate latitudes and even into regions just exposed by glacial meltwaters (see Chapter 12).

These humans were capable of culturally adapting to changing natural and social environments and could do so at a pace that would have been unthinkable to their Lower Paleolithic ancestors. Members of these human groups became the first New World immigrants.

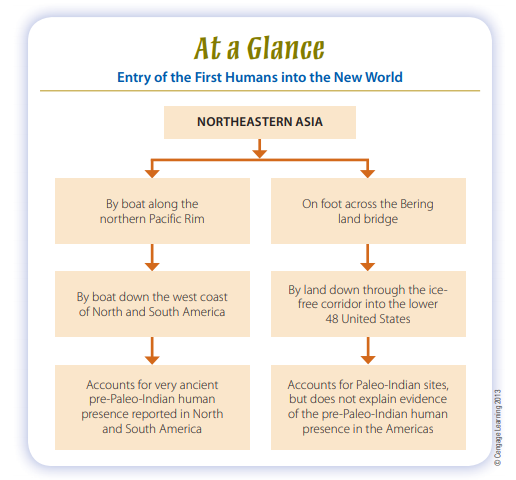

Archaeologists depend on geographical, biological, archaeological, and linguistic evidence to trace the earliest Americans back to their Old World origins and construct today’s answers to the when and where questions. Right now, there are two major hypotheses to explain the route of entry of humans into the New World: first, by way of the Bering land bridge that connected Asia and North America several times during the Late Pleistocene (Fig. 13-2), and second, along the coast of the northern Pacific Rim (Fig. 13-3). It’s also possible that both routes played important roles in the populating of the New World, but let’s keep things simple and consider the evidence for each hypothesis in turn.

Bering Land Bridge

As long ago as the late sixteenth century, José de Acosta, a Jesuit priest with extensive experience in Mexico and Andean South America, examined the geographical and other information available to him and concluded that humans must have entered the New World from Asia (Acosta, 2002). A lot of evidence favors this idea. First, there’s the basic geography of the situation. If you cast around for a feasible, low-tech way to get people into the Americas, a quick check of a world map will draw your eyes to the Bering Strait, where northeastern Asia and northwestern North America are separated by only 50 miles of ocean (see Fig. 13-2). An equally quick visit to the geology section of your local library will also reveal that there were several long intervals during the Pleistocene when lowered sea levels actually exposed the floor of the shallow Bering Sea, creating a wide “land bridge” (West and West, 1996). The land bridge formed during periods of maximum glaciation, when the volume of water locked up in glacial ice sheets reduced worldwide sea levels by 300 to 400 feet. During the Last Glacial Maximum (28,000–15,000 ya), Beringia, as it is known, comprised a broad plain up to 1,300 miles wide from north to south (see Fig. 13-2).

Ironically, the cold, dry Arctic climate kept Beringia relatively ice-free. Its plant cover included dry grasslands (Zazula et al., 2003), as well as patches, or refugia, of boreal trees, shrubs, mosses, and lichens (Brubaker et al., 2005).

Beringia’s dry steppes and tundra supported herds of grazing animals and could just as easily have supported human hunters who preyed on them (Pitblado, 2011). The region was, after all, an extension of the familiar landscape of northeastern Asia. Archaeology confirms that humans were well adapted to the cold and dry conditions of the Late Pleistocene in Siberia, including during the harsh conditions of the Last Glacial Maximum (Kuzmin, 2008). Upper Paleolithic hunters pursuing large herbivores with efficient stone- and bonetipped weapons, and probably with the aid of domesticated dogs, penetrated into

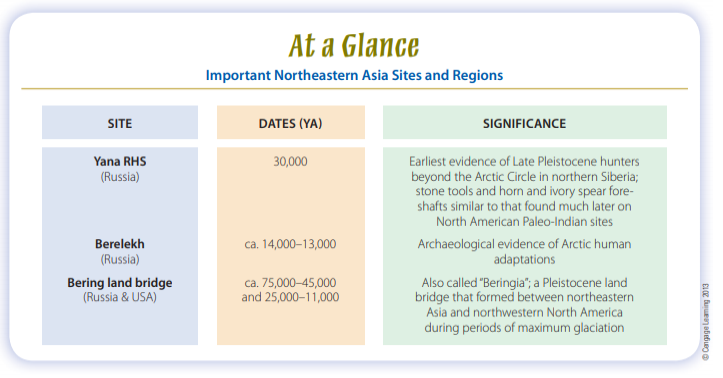

the farthest reaches of Eurasia (Soffer and Praslov, 1993). The earliest evidence of their presence in northern Siberia is the recently reported Yana RHS site (Fig. 13-4), which lies well within the Arctic Circle. Here archaeologists have found rhinoceros horn and mammoth ivory spear foreshafts as well as stone tools and other artifacts in contexts dated to about 30,000 ya (Pitulko et al., 2004).

Yana RHS is at least twice as old as the western Beringia site of Berelekh (see Fig. 13-4), the next oldest candidate for a possible Arctic Circle campsite (Pitulko, 2011), and it demonstrates that at a very early date, people were successfully adapted to high-latitude conditions similar to what hunters would have encountered in crossing Beringia. Culturally and geographically, these Asian hunters were capable of becoming the first Americans (Goebel et al., 2008).

Geologists have determined that except for short spans, the Bering passage was dry land between about 25,000 and 11,000 ya and for other extended periods even before then (especially between 75,000 and 45,000 ya). If the first humans entered the New World by traveling on foot across Beringia, they probably made their trips during the later episode. As yet, there’s no generally accepted evidence of humans in the Americas before that time.

After entering Alaska by way of Beringia, early immigrants would not have had easy access to the rest of the Americas. Major glaciers to the southeast of Beringia blocked the further movement of both game and people throughout most of the Pleistocene epoch. The Cordilleran ice mass covered the mountains of western Canada and southern Alaska, and the Laurentide glacier, centered on Hudson Bay, spread a vast ice sheet across much of eastern and central Canada and the northeastern United States. Around 20,000 ya, these glaciers coalesced into one massive flow. Toward the close of the Pleistocene, the edges of the Cordilleran and Laurentide glaciers finally separated, allowing animals and, in principle, human hunter-gatherers to gain entry to the south through an “ice-free corridor” along the eastern flank of the Canadian Rockies. Some researchers argue that the ice-free corridor became passable as early as 13,700–13,400 ya (Haynes, 2005). Others point to the geographical pattern of radiocarbon age estimates from western North America that indicate that humans could not have traveled through this ice-free corridor before about 13,400 ya (Arnold, 2006).

For a long time, most archaeologists accepted the ice-free corridor hypothesis because it explained the entry of the earliest humans into the Americas as well as the origins of the Clovis complex (13,500–13,000 ya), which, until recently, was widely believed to be the earliest archaeological evidence of humans in North America. The nagging doubts of critics centered mostly on the lack of any clear antecedents for the sophisticated Clovis stone tool technology in the Upper Paleolithic sites of Siberia. In the long run, it may not matter much who’s right because the earliest dates for an ice-free corridor currently fall after the earliest archaeological evidence of humans in the Americas south of the glaciers.

To sum things up, the Bering land bridge hypothesis for the entry of people into the New World rests on several key notions. First, the technologically simplest way to get from the Old World to the New World was on foot. Second, lots of other animals arrived by this route during the Pleistocene, so, archaeologists have long reasoned, it was feasible for people to do the same. Third, as we discuss in a later section, the skeletal and genetic evidence clearly favors an Asian origin for Native Americans.

On all these points, at least, there’s little debate. The main problem with the Bering land bridge explanation is that the age estimates for the earliest humans in North and South America are hard to reconcile with the age estimates for the most favorable intervals during which humans could have crossed Beringia, walked through the ice-free corridor, and populated the Americas. The archaeological evidence currently supports the inference that humans passed the glaciers and established themselves on both continents before, not after, 13,500 ya.

Pacific Coastal Route

The second scenario also envisions the earliest New World inhabitants coming from Asia. But it has them moving along the Pacific coast, where climatic conditions, beginning about 17,000–15,000 ya, were generally not as harsh as those of the interior and where they could simply go around the North American glaciers. Looking again at the northern Pacific Rim between Asia and North America (see Fig. 13-3), we can see that given canoes, rafts, or other forms of water transport, it was geographically possible for humans to enter the New World by traveling along the coast. Unlike the Bering land bridge, this route would have been less dependent on the waxing and waning of glaciers. In principle, therefore, humans traveling by this route could have arrived in the New World tens of thousands of years ago (Dixon, 2011; Erlandson, 2002).

But why should we assume that the Late Pleistocene inhabitants of East Asia had water transport capable of making this trip? Here, sound archaeological evidence comes to our rescue. As discussed in Chapter 12, humans colonized Australia roughly 50,000 ya, and it could only be reached by water. So, the rafts or boats necessary to carry humans successfully along the Pacific coast (but not, apparently, across the Pacific Ocean) from Asia to the New World must have existed and been used by at least some Late Pleistocene populations before the first people began to settle in the Americas.

Passage along the Pacific Rim may have been eased by access to the region’s diverse marine and terrestrial ecosystems (Erlandson and Braje, 2011). For example, kelp (seaweed) forests provided a rich and diverse coastal ecosystem of fishes, mammals, and birds that were exploited by Native Americans well into the twentieth century. These marine forests, along with rich estuaries, coral reefs, and the like, thrived along the Pacific Rim by 16,000 ya (Erlandson and Braje, 2011; but see Davis, 2011) and would have been important resources for human migrants along the coast.

Many archaeologists find the possibility that people used a coastal route to enter the New World an attractive idea, partly because it avoids the time constraints on the availability of the Bering land bridge and ice-free corridor and partly because migrants traveling by boat along the northern Pacific coast need not have abandoned their boats once they got around the glaciers. They could just as easily have kept going down the west coast of the Americas, following the resource-rich “Pacific Rim Highway” (Erlandson and Braje, 2011). Had they done so, it would help to explain why there are a lot of apparently very early South American sites, but few in the interior of North America (Kelly, 2003).

Still, the coastal route has several possible shortcomings. An often cited problem, one even noted by its proponents, is that the archaeological evidence that could be used to test this hypothesis rests at the bottom of the Pacific Ocean in sites that were covered, if not destroyed, by rising sea levels as the glaciers melted. Fortunately, coastlines react locally, not globally, to such changes.

Paleogeographical researchers are beginning to identify specific parts of the modern coasts and offshore islands of Canada that would have been exposed land where human migrants may have traveled (Hetherington et al., 2003). Farther to the south, off the California coast, 13,000-year-old human skeletal remains were recently found in excavations on Santa Rosa Island (Johnson et al., 2007). What makes the Santa Rosa remains particularly relevant is that humans could not have reached this island without some form of water transport. Taken together, these examples show that relevant archaeological evidence is indeed discoverable, even if we cannot (as yet) adequately explore the ocean floor.

Another problem, possibly related to the preceding one, is that we currently have little archaeological evidence of marine-adapted human populations along the coast of northeastern Asia (from which the earliest immigrants into the New World would have been drawn) until after the end of the last Ice Age (Erlandson and Braje, 2011). As on the other side of the Pacific, much of the archaeological evidence of marine adaptations may have been buried by rising sea levels at the end of the Pleistocene.

Most archaeologists with firsthand experience in the region feel that if immigrants to the New World had arrived by the coastal route, they must have had the technology and Arctic marine experience needed to survive in this harsh, quickly changing environment.

So to sum things up, the Pacific coastal route was feasible in principle. Considering the generally milder climate and rich resources of the coast relative to the interior, the presence of natural refugia, and the apparent technological capabilities of Late Pleistocene populations, the earliest migrants could have pulled it off. Factoring the Bering land bridge and the glaciers out of the equation also removes the temporal bottlenecks on migration that haunt the land bridge hypothesis. These are definitely marks in its favor. The main problem is that if the earliest people came by this route, then much (but hopefully not all) of the most relevant archaeological evidence is buried in the muck on the floor of the Pacific from northeastern Asia to the Americas. Even with this drawback, the coastal route hypothesis is perhaps the most promising explanation of how the first inhabitants arrived in the New World.

The Earliest Americans

We’ll now leave behind the question of how the first people arrived in North America and explore some ideas about who they were.

Physical and Genetic Evidence

Biological data bearing directly on the earliest people to reach the New World are frustratingly scarce. Well-documented skeletons are especially rare. The physical remains of fewer than two dozen North American individuals appear to date much before 9,500 ya, by which time humans had certainly been present in the New World for millennia (Fig. 13-5). The tremendous information value of well-preserved and documented early skeletons is the reason archaeologists spent nine years in federal courts contesting what they believed to be the government’s well-intentioned but misguided decision to turn over the remains of Kennewick Man to Native American tribal groups for reburial before the remains could be studied.

The rare early finds provide valuable insights on ancient life and death. What little we currently know about Kennewick Man (Fig. 13-6), for example, is that he suffered multiple violent injuries and other health problems during his 40 to 50 years of life. His most intriguing injury is an old wound in his pelvis that had healed around a still-embedded stone spear point. To this we can add the largely healed effects of massive blunt trauma to the chest, a depressed skull fracture, and a fracture of the left arm.

He also had an infected head injury and a fractured shoulder blade (scapula), neither of which shows the effects of healing, as well as osteoarthritis (Chatters, 2004). What’s more, the list is probably incomplete because it was compiled from a preliminary examination of the remains. But by any measure, this man had a pretty hard life.

Just how hard it was will become evident in a couple of years, after researchers complete the scientific analysis of his remains.

Anthropologists are also analyzing other cases, all from the American West. The 12,800-year-old partial skeleton of a young woman, discovered in a cave above the Snake River near Buhl, Idaho, reveals signs of interrupted growth in both her long bones and her teeth; this evidence suggests that the woman experienced some metabolic stress in childhood, possibly because of disease or seasonal food shortages (Green et al., 1998).

At Spirit Cave, Nevada, researchers discovered the partially desiccated body of a man, wrapped in fine matting, who was in his early 40s when he died some 10,600 years ago; his skeleton exhibits a fractured skull and tooth abscesses as well as signs of back problems (Winslow and Wedding, 1997). Near the Grimes Point rock-shelter, another Nevada site, researchers found a teenager from about the same period who died of his wounds after being stabbed in the chest with an obsidian blade that left slash marks and stone flakes embedded in one of his ribs (Owsley and Jantz, 2000).

Physical anthropologists who made the preliminary studies of Kennewick Man, the Spirit Cave mummy, and other early specimens announced surprising interpretations based on this modest sample. Statistical analyses comparing a series of standard cranial measurements (including those that define overall skull size and proportion, shape of nasal opening, face width, and distance between the eyes) place the earliest-known American remains outside the normal range of variation observed in modern Native American populations (Owsley and Jantz, 2000).

The more derived craniofacial morphological traits seen in most modern Asian and Native American populations appear to be absent in this early population. Instead, the archaeological examples display relatively small, narrow faces combined with long skulls. Physical anthropologists note these generalized (nonderived) traits in living populations among the Ainu of Japan and some Pacific Islanders and Australians. Crania that more closely resemble those of modern Native Americans became prevalent only after about 7,000 ya (Owsley and Jantz, 2000; Steele, 2000).

There are, of course, no uniform biological “types” of human beings in the sense once assumed by traditional classifiers of race (see Chapter 4). Generalities regarding human variation must, considering the nature of genetic recombination and the effects of environment and nutrition, be taken simply as that: generalities. Still, the lack of distinctive Native American physical markers on the oldest skeletal specimens has stimulated research on how the early population of the Americas, as represented by these individuals, may be related to contemporary populations.

Neves and colleagues (2004) compared the cranial morphology of nine individuals from central Brazil dated to around 10,200 ya. The researchers concluded that these individuals represent one of possibly several populations that participated in the peopling of the Americas from Asia. The researchers argue that the differences between the cranial morphology of these individuals and that of modern Native American populations are consistent with those seen in morphological comparisons elsewhere in the Americas (e.g., Owsley and Jantz, 2000; Brace et al., 2001). Similar differences also exist between Late Pleistocene and recent populations in Asia, and the typical modern morphological pattern of Asia is likely a recent development that may have followed the adoption of agriculture (Jantz and Owsley, 2001).

Studies like these are a great help in placing recent finds such as Kennewick Man into context. When the facial reconstruction of Kennewick Man (see Fig. 13-6) was completed in 1997, more than a few people wondered why it looked more like the British actor Patrick Stewart of Star Trek fame than the popular stereotype of Native Americans (think of the man’s profile on the old U.S. buffalo nickel). Quite a lot was read into that reconstruction, but all it really showed was what researchers like Neves, Jantz, Owsley, and others already knew: We don’t have much data to work from, but what we do have shows similar morphological variation in Asia and the Americas among Late Pleistocene–early Holocene skeletons. The data support Steele and Powell’s (1999) conclusions that there seems to have been more than one prehistoric migration from Asia into the New World. The argument has intuitive appeal. After all, why would the supply of immigrants dry up after the first humans arrived in the New World?

Looking at the entire span of the human presence in the New World, it seems evident that the flow of people to the New World has roughly kept pace with the development of technology to bring them here. In this view, at least, the peopling of the New World can best be viewed as a continuing process, not a one-time event. The particular physical traits shared by modern Native Americans are almost surely derived from a small founding gene pool and thus a product of founder effect. (For a discussion of founder effect, an example of genetic drift, see Chapter 3.)

As in virtually all other areas of biological anthropology, molecular research is shedding new light on where the earliest Americans came from (O’Rourke and Raff, 2010). Recent analyses of mitochondrial DNA among contemporary Native American populations suggest that just four or five genetically related lineages contributed to the early peopling of the Americas and that these groups came from Asia (Tamm et al., 2007). Far more detailed molecular evidence showing geographical patterns in nuclear DNA supports this view (Jacobsson et al., 2008; Li et al., 2008). While these molecular data show very clearly that early Americans share their closest genetic links with East Asian populations, particularly those from Siberia, it remains less certain what route early immigrants took once they reached North America.

More detailed analysis using nuclear DNA, which already has provided data on hundreds of thousands of genetic loci, may well provide more complete answers. Another intriguing source of genetic data that may provide insights has recently come from the Paisley Caves site in Oregon, where 14,000-year-old feces, or coprolites, yielded human hair and human DNA (Gilbert et al., 2008). These new data support the coastal route hypothesis for the entry of humans into the continental United States well before the development of the Clovis complex and before the ice-free corridor opened up.

Cultural Traces of the Earliest Americans

Much of the cultural evidence documenting the presence of the earliest Americans is no less controversial than that gained from analyses of the skeletal data. Nearly all archaeologists agree that Native Americans originated in northeastern Asia (yes, Kennewick Man’s ancestors, too); yet they have varying opinions about when people first arrived in the New World and the routes by which they traveled (see Meltzer, 2009). Most of the controversy focuses on the span between 30,000 and 13,200 ya. The earlier date represents the time by which modern people first began to appear in those parts of Asia closest to North America (for example, at the Yana RHS site in Siberia, discussed earlier in this chapter). And everyone agrees that the Paleo-Indian Clovis complex was present in North America by the later date.

Like the biological anthropologists, archaeologists have a tough time reaching consensus on such basic questions as: What are the material remains of the earliest inhabitants of the New World? When did they arrive? Where did they come from? That robust answers are not forthcoming isn’t for want of research; it is simply the case that the evidence is sparsely scattered over millions of square miles and is not necessarily very distinctive. As biological anthropologists and archaeologists have learned, answering these questions takes decades of hard work and more than a little luck.

What kind of archaeological evidence can we point to that has survived from this early period of American prehistory? The answer depends largely on how one evaluates the “evidence.” For example, isolated artifacts, including stone choppers and large flake tools of simple form, are at times recovered from exposed ground surfaces and other undatable contexts in the Americas. They are sometimes proposed as evidence of a period predating the use of bifacial projectile points, which were common in North America by 13,200 ya (see Meltzer, 2009, pp. 97–117). If typologically primitive-looking finds cannot be securely dated, most (but not all) archaeologists regard them with justifiable caution. The appearance of great age or crude condition may be misleading and is all but impossible to verify without corroborating evidence. To be properly evaluated, an artifact must be unquestionably the product of human handiwork and must have been recovered from a geologically sealed and undisturbed context that can be dated reliably.

Sounds pretty straightforward, right? The reality of the situation is not that easy. Take, for example, the projectile points. Alan Bryan and Ruth Gruhn (2003) point out that North American archaeologists’ obsession with bifacially flaked stone points may ultimately do more harm than good to research, because we have no basis for believing that such tools were a consistent part of prehistoric assemblages everywhere in the Americas. They note, for example, that bifacially flaked projectile points are far less common in Central and South America than they are in the continental United States and southern Canada. In fact, in some parts of lowland South America, Native American groups never did use bifacially worked stone tool technology (Bryan and Gruhn, 2003, p. 175).

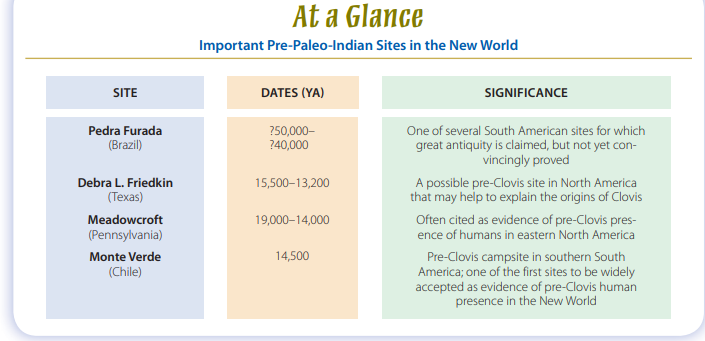

The implication is obvious: The criteria that work for identifying the earliest Americans in one part of the two continents may not apply to other parts. Most difficult to assess are some atypical sites that have been carefully excavated by researchers who sincerely believe that their work offers proof of great human antiquity in the New World. Currently, several South American sites fall into this disputed category (see Fig. 13-5). Pedra Furada is a rock-shelter in northeastern Brazil where excavators found what they claim to be simple stone tools in association with charcoal hearths dating back 40,000 years (Guidon et al., 1996). Others who have examined these materials remain convinced that natural, rather than cultural, factors account for them (Lynch, 1990; Meltzer et al., 1994). The dating of this and other sites in the same general region also continues to be reexamined. Additional radiocarbon age estimates on samples from hearths in the lowest levels of Pedra Furada recently yielded dates in excess of 50,000 ya (Santos et al., 2003). However, fresh radiocarbon dates on Pedra Furada pigments and rock paintings, both of which are thought by their excavators to be nearly 30,000 years old, yielded estimates of only 3,500–2,000 ya (Rowe and Steelman, 2003). So, it could be the oldest site in South America, or it could be so recent as to be irrelevant. Pedra Furada joins several other Central and South American locations that in recent decades have been proposed as extremely early cultural sites (Meltzer, 2009). Archaeologists have been uncertain how to evaluate most of them because the associated cultural materials are so typologically diverse and their contexts are frequently secondary, or mixed, geological deposits.

The United States, too, has its share of potentially early sites that defy easy explanation. The most intriguing recent example is the Debra L. Friedkin site in central Texas, where archaeologists have found a large pre-Clovis assemblage of more than 15,000 artifacts, including bifaces, cores, flake tools, and stone toolmaking debris, stratigraphically beneath the remains of a Clovis assemblage (Pringle, 2011; Waters et al., 2011). The estimated age of the pre-Clovis assemblage, which is based on 18 optically stimulated luminescence (Rhodes, 2011) analyses of floodplain clays in which the artifacts were found, is between 15,500 and 13,200 ya, or before the beginning of Clovis. While it is too soon to say how well this site and its dating will bear up under the intense scientific scrutiny to which it will be subjected over the next few years, it is a very promising North American case that may help begin to explain the origins of Clovis and its relationship to the earliest inhabitants of North America.

Far to the east, at the Cactus Hill site along the Nottoway River in southern Virginia and at the Topper site in Allendale County, South Carolina, archaeologists have retrieved unusual assemblages of stone cores, flakes, and tools from strata lying well beneath more typical Paleo-Indian components (Dixon, 1999). The early radiocarbon dates (18,000–15,000 ya) associated with these materials are consistent with their stratigraphic position (e.g., Wagner and McAvoy, 2004), but, as with the Debra L. Friedkin site, the evidence will require cautious review before these sites are generally accepted.

The scientific method is not a democratic process; we can’t simply dismiss (or side with) unpopular positions without assessing the evidence as it is presented. The method is, however, a skeptical one; and the burden of proof to substantiate claims of great antiquity falls upon those who make them. Each allegation requires critical evaluation, a process that the general public sometimes perceives as unnecessarily conservative or obstructive. Information regarding dating results, archaeological contexts, and whether or not materials are of cultural origin must all be scrutinized and accepted before intense debate can resolve into consensus.

This evaluation process may take years. Consider, for example, the case of the Meadowcroft Rock Shelter, near Pittsburgh, Pennsylvania. In a meticulous excavation over 25 years ago, archaeologists explored a deeply stratified site containing cultural levels dated at between 19,000 and 14,000 ya by standard radiocarbon methods (Adovasio et al., 1990). Stratum IIa, from which the earliest dates derive, contained several prismatic blades, a retouched flake, a biface, and a knifelike implement (Fig. 13-7). None of the tools from this deep stratum are particularly distinctive, and the final detailed excavation report on this important site has yet to be published, so it’s hard to assess technological relationships with other assemblages or, indeed, the full significance of Meadowcroft’s contribution to the scientific understanding of the early human presence in North America.

Excavations at Monte Verde, in southern Chile, revealed another remarkable site of apparent pre–Paleo-Indian age (Dillehay, 1989, 1997). Here, remnants of the wooden foundations of a dozen rectangular huts were arranged back to back in a parallel row. The structures measured between 10 and 15 feet on a side, and animal hides may have covered their sapling frameworks. Apart from the main cluster, a separate wishbone-shaped building contained stone tools and animal bones (Fig. 13-8). The cultural equipment comprised spheroids (possibly sling stones), flaked stone points, perforating tools, a wooden lance, digging sticks, mortars, and fire pits. Mastodon bones represented at least seven individuals, and remains of some 100 species of nuts, fruits, berries, wild tubers, and firewood testify to the major role of plants in subsistence activities at this site. Incredibly, preservation conditions at Monte Verde were such that researchers were able to identify nine species of seaweeds that had been brought from the coast to this inland site for use as food and medicine (Dillehay et al., 2008). Monte Verde’s greatest significance, however, is simply its age: about 14,500 years, with a few features possibly much older. The site’s age was hotly contested until 1997, when a “jury” of archaeological specialists reviewed the findings, visited the site, and finally agreed that the excavator’s claims for the pre-Clovis age of Monte Verde were substantiated. This was important because it meant that the mainstream of archaeological thought accepted that Monte Verde contained material evidence of a human presence in the New World more than a thousand years before the beginning of the Clovis complex around 13,200 ya in North America. And, more generally, the acceptance of Monte Verde’s great antiquity implied that archaeologists had a lot to learn about the colonization of the New World because it had just gotten a lot older (Grayson, 2004; Meltzer, 2004).

Paleo-Indians in the Americas

For much of the twentieth century, most archaeologists held the view that the Clovis complex marked the earliest material evidence for the entry of people into the Americas below the Canadian glaciers about 13,200 ya. These highly mobile Paleo-Indian hunter-gatherers then spread rapidly across the United States (Fig. 13-9; Hamilton and Buchanan, 2007).

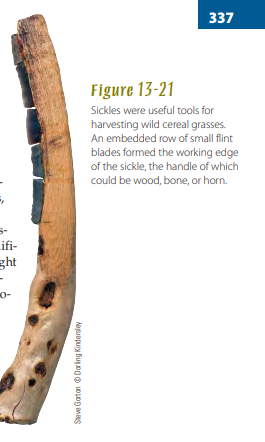

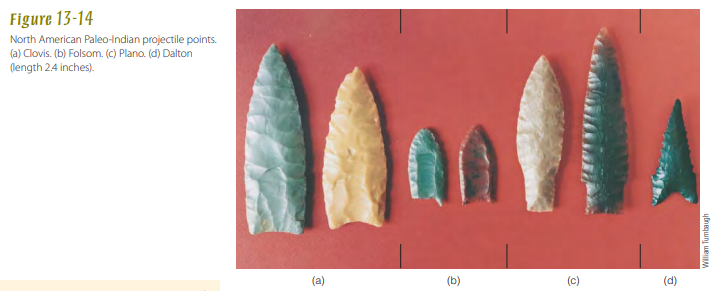

The distinctive fluted point became the Paleo-Indian period’s hallmark artifact, at least in North America. Each face of a fluted point typically displays a groove (or “flute”) resulting from the removal of a long channel flake, possibly to make it easier to use a special hafting technique for mounting the point on a shaft (Fig. 13-10). Other typical Paleo-Indian artifacts include a variety of stone cutting and scraping tools and, less commonly, preserved bone rods and points (Gramly, 1992).

While the general Paleo-Indian tool kit from North America probably would have been familiar to Upper Paleolithic groups in northeastern Asia, no clear technological predecessors of fluted points have yet been identified in Asia.

It’s possible that fluted point technology is an American invention and there are no ancestral Asian forms to find. Sites such as Yana RHS, in Siberia, demonstrate that Upper Paleolithic hunter-gatherers, like Paleo-Indians, made bifacially worked stone tools and tipped their spears with projectile points, but not fluted projectile points. These Siberian hunters set projectile points in bone or ivory foreshafts and were living in the general region of Beringia more than 15,000 years before the earliest evidence of human presence appears in the New World. Bifacial points and knives are also part of the Nenana complex sites of central Alaska and the lower levels of Ushki Lake (see Fig. 13-4) in Siberia’s Kamchatka Peninsula. All of these sites are currently believed to date to around 13,400–13,000 ya (Goebel et al., 2003), which is too late for their inhabitants to have been the ancestors of the people who developed Paleo-Indian fluted point technology. Nevertheless, the sites do demonstrate that generally similar tool industries were moving into the New World at an early date.

Paleo-Indian Lifeways

Current research is chiseling away at the long-standing image of Paleo-Indian uniformity in weaponry and hunting behavior and the rapid spread of Paleo-Indian populations across the Americas about 13,200 ya to reach as far south as Fell’s Cave in Patagonia in South America by some 11,000 ya (see Fig. 13-5). It was also long assumed that these rapidly moving Paleo-Indian hunters quickly made cultural adjustments as they dispersed through the varied environments of the New World during the terminal Pleistocene and early Holocene.

The recent acceptance of Monte Verde and other sites as evidence of a human presence in the Americas before Clovis is stimulating a major reexamination of these and other interpretations of Paleo-Indian lifeways. One of the main shortcomings of these interpretations is the assumption that the fluted point technology was a consistent part of prehistoric assemblages across the Americas and that people everywhere were using the technology to do the same things. As Bryan and Gruhn (2003) point out, there are certainly parts of the Americas in which these assumptions aren’t warranted. To the extent that this is true, the uniformity of Paleo-Indian tool kits may often be more the creation of archaeologists than of the archaeology.

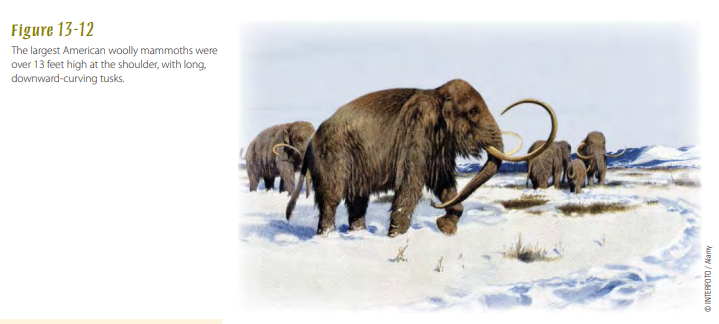

The same appears to be true of the long-held view of Paleo-Indians as specialized hunters of Pleistocene megafauna, animals over 100 pounds, including the mammoth, mastodon, giant bison, horse, camel, and ground sloth (Figs. 13-11 and 13-12). Of the sites associated with Paleo-Indians, the most impressive are undoubtedly the places where ancient hunters actually killed and butchered such megafauna as mammoth and giant bison (Fig. 13-13).

At many of these kill sites, knives, scrapers, and finely flaked and fluted projectile points are directly associated with the animal bones, all of which are convincing evidence that the megafauna were human prey. Even so, as Cannon and Meltzer (2004) found in a review of the faunal remains, taphonomy, excavation procedures, and archaeological features of 62 excavated early Paleo-Indian sites in the United States, the data “provide little support for the idea that all, or even any, Early Paleo-Indian hunter-gatherers were megafaunal specialists” (Cannon and Meltzer, 2004, p. 1955). The kinds of game they hunted and other foodstuffs they collected varied with the environmental diversity of the continent (Walker and Driskell, 2007), which, when you think about it, isn’t that surprising. So, in the end, yes, Paleo-Indian hunters killed megafauna, but was it an everyday event? Probably not.

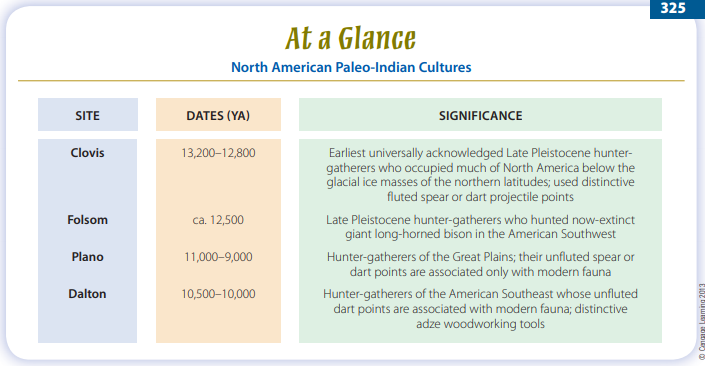

North American archaeologists have identified a sequence of Paleo-Indian cultures in the western Plains and Southwest based on changing tool technology, chronology, and the biggest game species thought to be typical of each period. From about 13,200 to 12,800 ya, hunters employed Clovis-type fluted points (Fig. 13-14a; also see Fig. 13-10). On at least 20 western sites, including Colby, in Wyoming, and Naco and Lehner, in southern Arizona, their spear tips lie embedded in mammoth remains (see Fig. 13-13). Similar points from northern Alaska retain residues that have been biochemically identified as mammoth red blood cells and hemoglobin crystals (Loy and Dixon, 1998). Meat, though not all of it from mammoths, was part of the diet of these early Americans. Isotopic analysis of Buhl Woman’s (see p. 316) bone collagen indicates that her diet was largely game and fish, much of it probably preserved by sun-drying or smoking. The heavy wear on her teeth suggests that she regularly ingested grit in her food, probably the residue of grinding stones used to pulverize the dried meat (Green et al., 1998).

Fluted projectile points and evidence of a varied Paleo-Indian diet are also found in eastern North America. While Pleistocene megafauna kill sites are known from the East, these hunter-gatherers also ate caribou, deer, and smaller game (Cannon and Meltzer, 2004). Early Paleo-Indian sites in New Hampshire, Massachusetts, and New York appear to be the remains of caribou-hunting camps (Cannon and Meltzer, 2004, p. 1971). A mixed subsistence of smaller game, fish, and gathered plants supplied Paleo-Indians at a site in eastern Pennsylvania (McNett, 1985). And a Florida site yielded a bison skull that has what looks like part of a Paleo-Indian projectile point embedded in it. The age of this specimen, however, is uncertain (Mihlbachler et al., 2000). Acquiring such resources surely involved the use of a variety of equipment made from perishable materials, such as nets and baskets, but only the stone spear tips survive at most Paleo-Indian sites.

Excavations along the lower Amazon in northern Brazil provide further evidence for other kinds of Paleo-Indian food-getting practices (Roosevelt et al., 1996). Carbonized seeds, nuts, and faunal remains at Pedra Pintada Cave, in the Amazon basin, indicate a broad-based way of life built on food collecting, fishing, and small-game hunting in the tropical rain forest around 12,000 ya. In other sites along the Pacific slope of the Andes, mastodons, horses, sloths, deer, camelids, and smaller animals were consumed, along with tuberous roots (Lynch, 1983).

During later Paleo-Indian times on the Great Plains of North America, the Clovis fluted spear points gave way to another fluted point style that archaeologists call Folsom (Meltzer, 2006; Fig. 13-14b). Smaller and thinner than Clovis points, but with a proportionally larger central flute, Folsom points are associated exclusively with the bones of the now-extinct giant long-horned bison. In turn, Folsom points soon gave way to a long sequence of new point forms: a variety of unfluted but slender and finely parallel-flaked projectile points collectively called Plano (Fig. 13-14c), which came into general use throughout the West even as modern-day Bison bison was supplanting its larger Pleistocene relatives. At some Great Plains sites, like Olsen-Chubbuck and Jones-Miller, in Colorado, an effective technique of bison hunting was for hunters to stampede the animals into dry streambeds or over cliffs and then quickly dispatch those that survived the fall (Wheat, 1972; Frison, 1978). Remember, the horse had not yet been reintroduced onto the Plains (the Spanish brought horses in the sixteenth century), so these early bison hunters were strictly pedestrians.

Again, there was regional variation, not only in how different groups of hunter-gatherers made a living in the diverse environments of North America, but also in their technology. The temporal pattern of changing point styles found in the western United States does not hold in the East, where later Paleo-Indians employed other types of projectile points, such as the Dalton variety (Fig. 13-14d). Poor bone preservation generally leaves us with little direct information about these groups’ hunting techniques or favored prey in this region, though deer probably extended their range at the expense of caribou as oak forests expanded over much of the eastern United States.

Pleistocene Extinctions

Evidence like that found mainly in the American West, where bones of mammoths and other large herbivores have been excavated in undeniable association with the weapons used to kill them, led some researchers to blame Paleo-Indian hunters for the extinction of North American Pleistocene megafauna.

Geoscientist Paul S. Martin (1967, 2005), who viewed Clovis sites as evidence of the first people in North America, argued for decades that overhunting by the newly arrived and rapidly expanding human population caused the swift extermination, around 13,000 ya, of these animals throughout the New World. He pointed out that over half of the large mammal species found in the Americas when humans first arrived were gone within just a few centuries, especially those whose habits and habitats would have made them most vulnerable to hunters.

Paleo-Indian hunter-gatherers certainly hunted Pleistocene megafauna. As we noted earlier, archaeologists have excavated several sites where such game was killed and butchered. But evidence that these animals were human prey doesn’t also prove that humans hunted them to extinction. The problem is complex for reasons that are still hotly contested (Haynes, 2009). First, it has yet to be proved that Paleo-Indian hunter-gatherers anywhere focused most of their food-getting effort on Pleistocene megafauna (Meltzer, 1993a; Cannon and Meltzer, 2004). What we’re finding instead is that Paleo-Indian groups hunted and collected a range of animals and plants, the mix of which varied regionally (Walker and Driskell, 2007). Second, species extinction is a natural process, and it’s no less common than the emergence of new species. It happens for various reasons, and at least until modern times, these reasons seldom had anything to do with human agency. Yes, many North American megafaunal species went extinct toward the end of the Pleistocene. But if you look at the North American geological record, you’ll find that many species also became extinct in the Pliocene, Miocene, and so on. Our point is that the geological record provides abundant evidence that natural processes are sufficient to account for species extinctions. It has yet to be convincingly demonstrated that Paleo-Indian hunters were a more important factor than natural processes in the extinction of Late Pleistocene megafauna.

One possible exception appears to be the extinction of proboscideans—mammoths, mastodons, elephants, and their relatives. Surovell and colleagues (2005) took a long-term view of the problem and examined the global archaeological record of human exploitation of proboscideans in 41 sites that span roughly the past 1.8 million years. They conclude that local extinctions of proboscideans on five continents correlate well with the global colonization patterns of humans, not climatic changes or other natural factors. This finding suggests that we can’t entirely dismiss the possibility that humans played a role in the Late Pleistocene extinctions of some species.

The end of the Pleistocene marked an interval of profound climatic and geographical changes (for example, the creation of the Great Lakes) in North America and elsewhere. Geoarchaeologists also recognize that Late Pleistocene extinctions and the expansion of Paleo-Indian hunters coincided with a time of widespread drought that was immediately followed by rapidly plunging temperatures. The latter climatic event, called the Younger Dryas stadial, marked a return to near-glacial conditions and persisted for 1,500 years, from roughly 13,000 to 11,500 ya (Fiedel, 1999). These changing climatic conditions were apparently not necessarily catastrophic for Paleo-Indians in North America (Meltzer and Holliday, 2010). For example, human populations in parts of the Southeast (Anderson et al., 2011) and Great Lakes region (Ellis et al., 2011) appear to have initially declined but later resurged during the Younger Dryas. As Meltzer and Holliday (2010, p. 32) explain it, “adapting to changing climatic and environmental conditions was nothing new to them [Paleo-Indians]. It was what they did.” And in an extraordinary twist to the North American Younger Dryas story, Firestone and colleagues (2007) recently argued that the explosion of a meteor or other large extraterrestrial object over North America about 12,900 ya contributed directly to the Pleistocene megafaunal extinctions and the onset of the Younger Dryas and, ultimately, to the end of the Paleo-Indian period! Their hypothesis has attracted considerable attention, mostly from the media; the scientific community has expressed considerable skepticism (Kerr, 2008).

The degree of human involvement in these New World extinctions will continue to be controversial. Grayson and Meltzer (2002, 2003, 2004) maintain that no archaeological evidence supports the idea that humans caused the mass extinction of Pleistocene megafauna in North America. They further argue that Paul S. Martin’s original hypothesis has been altered into something that is no longer testable and that persists partly because it feeds contemporary political views concerning human effects on the environment. Others take issue with such views, both directly (e.g., Fiedel and Haynes, 2004) and indirectly (Barnosky et al., 2004), and argue for a possible human role.

Early Holocene Hunter-Gatherers

Environmental effects at the end of the last Ice Age extended well beyond the waning glaciers. Much of the Northern Hemisphere experienced radical environmental change during the transition from the Late Pleistocene epoch to the mid-Holocene, which left only the polar regions and Greenland with permanent ice caps. Climatic fluctuations, the redistribution or even extinction of many plant and animal species, and the reshaping of coastlines as sea levels rose hundreds of feet from their Late Pleistocene elevations all affected many of the world’s human inhabitants. Their technologies, homes, economic patterns, and lives were transformed as they adapted to the changing world in which they lived.

Environmental Changes

Dramatically higher temperatures after the end of the Younger Dryas (for example, an increase of average July temperature by perhaps 20°F) rapidly melted the glaciers. As they receded to higher latitudes, these great ice sheets left behind thick mantles of silt, mud, rocks, and boulders in till plains. Sediment-choked rivers and streams cut fresh channels and deposited new terraces with the ebb and flow of meltwater runoff. Tons upon tons of fine silt were lifted by winds across the newly exposed plains and redeposited as loess. In North America, the Great Lakes gradually formed as the glaciers retreated. Eventually, by 5,000 ya, sea levels had risen as much as 400 feet in some parts of the world, drowning the broad Pleistocene coastal plains and flooding into inlets to shape the continental margins we recognize today. By then, overflow from the rising Mediterranean had spilled into a low-lying basin to create the Black Sea, and the North and Baltic seas finally separated the British Isles and Scandinavia from the rest of Europe. To fully appreciate the archaeological significance of these changes, consider the fact that the rise in sea level at the end of the Pleistocene cost Europe as much as 40 percent of its landmass (Bailey and King, 2010). Consequently, much that we have yet to learn about Upper Paleolithic and Archaic/Mesolithic coastal adaptations lies buried under hundreds of feet of seawater off modern coastlines!

As deglaciation proceeded, the major biotic zones expanded northward, so that areas once covered by ice were clothed successively in tundra, grassland, fir and spruce forests, pine, and then mixed deciduous forests (Delcourt and Delcourt, 1991). Like the plants, temperate forest animals displaced their Arctic counterparts. Grazers like the musk ox and caribou, or reindeer, which had ranged over the Late Pleistocene tundra or grasslands, gave way to browsing species, such as moose and deer, which fed on leaves and the tender twigs of forest plants. Meanwhile, the annual mean summer temperatures continued to climb toward the local climatic maximum, attained between 8,000 and 6,000 ya in many areas. By then, July temperatures averaged as much as 5°F higher than at present. Lakes that had formed during the Ice Age as a result of increased precipitation in nonglaciated areas of the American West, southwest Asia (the Near East), and Africa now evaporated under more arid conditions, bringing great ecological changes to those regions, too.

These geoclimatic transformations most directly affected the temperate latitudes, including northern and central Europe and the northern parts of America. They were sufficient to alter conditions of life for plants and animals by creating new niches for some species and pushing others toward extinction. We can’t measure precisely how these shifting natural conditions may have affected human populations, although they surely did.

Cultural Adjustments

Environmental readjustments are the natural consequences of climatic change. Although many of the changes that happened at the end of the Pleistocene were slow enough that they passed largely unnoticed by individuals, much depended on the terrain where the changes occurred. For example, on the low-lying coast of Denmark, where sea level rose 1.5 to 2 inches per year during the early Holocene, “during his lifetime many a Stone Age man must have seen his childhood home swallowed up by the sea” (Fischer, 1995, p. 380). The environmental impacts were cumulative, and in time the redistribution of living plant and animal species, plus variations in local topography, drainage, and exposure, created a mosaic of new habitats, some of which invited human exploitation and settlement.

Cultures in both hemispheres kept pace with these changes by adjusting their ways of coping with local conditions. Distinctive climatic and cultural circumstances prevailed in different regions. Recognizing this variability (and acknowledging regional differences in the history of archaeological reconstructions of the past), archaeologists have devised specific terms to designate the early and middle Holocene cultures that turned to intensive hunting, fishing, and gathering lifeways in response to post-Pleistocene conditions. In the New World, the Archaic period broadly applies to post-Pleistocene hunter-gatherers. In Europe, this period is called the Mesolithic. And in the Near East and eastward into parts of Asia, the Epipaleolithic period spans much the same interval.

Holocene hunter-gatherers in temperate latitudes extracted their livelihood from a range of local resources by hunting, fishing, and gathering. The relative economic importance of each of these subsistence activities varied from region to region and even from season to season within a given area. In some places, the focus on different food sources, particularly more plants, fish, shellfish, birds, and smaller mammals, corresponded to a lesser emphasis on hunting big game. What accounts for this shift? First, many former prey animals were by then extinct or, like the reindeer, locally unobtainable, having followed their receding habitat north with the waning ice sheets. Additionally, one way to accommodate both the environmental changes and human population growth was to broaden the definition of food by exploiting a wider range of potentially edible species.

New habitats and prey species presented challenges and opportunities.

Among the important archaeological

reflections of cultural adjustments to

the changing natural and social environments of the postglacial world were

new tools and ways of making tools that

can be found in the sites of these periods. Important raw materials for tools

and other implements included stone,

bone, antler, and leather, as well as

bark and other plant materials (Clark,

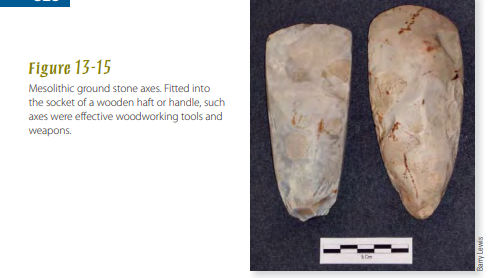

1967; Bordaz, 1970). With the spread of

temperate forests, ground stone axes,

adzes, and other tools became important items in tool kits (Fig. 13-15). Wood

tended to replace animal bones, tallow, and herbivore dung as the primary fuel and served well for house posts,

spear shafts, bowls, and countless smaller items. Wooden dugout canoes and

skin- covered boats aided in navigating streams and crossing larger bodies of water. Hunter-gatherers caught

large quantities of fish in nets, woven

basketry traps, and brushwood or stone

fish dams designed to block the mouths

of small tidal streams. With the aid of

axes and containers, the people extracted

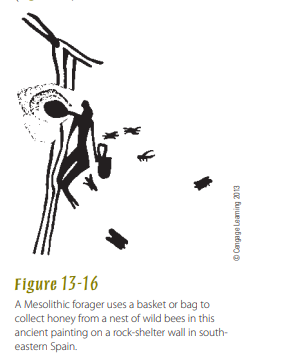

the honey of wild bees from hollow trees

Hunter-Gatherer Lifeways

Under the general category of hunting and gathering, researchers recognize a range of subsistence strategies used by early Holocene people as well as their more recent counterparts (Kelly, 1995). These strategies often vary with the relative mobility of such groups. At one end of the spectrum are foragers, who tend to live in small groups that move camp frequently as valued food resources come into season across their home range. Viewed archaeologically, forager campsites often show little investment in substantial shelters, storage facilities, and other features that reflect a long-term commitment to that site. At the other end are collectors, who are typically less mobile, staying in some camps for long periods and drawing on a wide range of locally available plant and animal foods that they bring back to camp for consumption. Collector campsites tend to show evidence of long-term occupation, including middens, storage facilities, cemeteries, and mounds. As Conneller (2004, p. 920) simply puts it, “foragers can be characterised as people moving to resources, while collectors move resources to people.” Most hunter-gatherers lived somewhere between these extremes and emphasized one or the other food-getting approach as the changing natural and social conditions warranted.

Considering the environmental complexity of the temperate regions in the Holocene, including a diverse array of potentially exploitable plants and animals, the food-getting methods that worked well in one region were not always effective in another. Holocene hunter-gatherers invented specialized equipment to help them take advantage of local resources, and we can reasonably assume that they approached cultural solutions to such problems with detailed practical knowledge and understanding of the local environment and its assets.

In foraging, anyone (young or old, male or female) might contribute to the general food supply by taking up whatever resources are at hand. But this isn’t the same as saying that foragers eat whatever comes to hand. Human food-getting is selective, whether it’s based on what you encounter in the forest that day or find on sale in the local supermarket. Preferred foods are usually those that are most readily available, easily collected and processed, tasty, and nutritious. So, while the San people of the Kalahari Desert in southwestern Africa regard about 80 local plants as edible, they rely mostly on about a dozen of them as primary foods (A. Smith et al., 2000). They use the rest of the foods less often, but know they can eat them when times are tough.

Particularly in regions with only minor seasonal fluctuations in wild food supplies, foraging held prospects for good returns. Jochim (1976, 1998) estimated that foraging activities could maintain a stable population density of about one person per 4 square miles in some regions. More territory might be required to sustain people in less favorable situations or where continuing environmental fluctuations influenced the composition and predictability of animal and plant communities or the stability of estuaries and coastlines.

In areas where dramatic seasonal variations in rainfall or temperature affected resource availability, day-to-day foraging might not always yield a stable diet. Human population density and equilibrium could be maintained only if the group adopted an alternative strategy. Familiarity with their environment enabled people to predict when specific resources should reach peak productivity or desirability. By making well-informed decisions, hunters and gatherers scheduled their movements so they would arrive on the scene at the best season for obtaining a particularly important food. Seasonality and resource scheduling are familiar issues for most, if not all, foragers.

Compared with foragers, food collectors relied much more on a few seasonally abundant resources, and their camps often show evidence of specialized processing and storage technologies that allowed them to balance out fluctuations and remain longer in one place. For example, migratory fish might be split and cured, and nuts or seeds could be parched and stored away in baskets or bags until needed.

Case Studies of Early Holocene Cultures

Now that we’ve looked at some general hunting and gathering strategies, we can get down to specifics. In this section, we’ll consider how the foragers and collectors from various regions found food and adjusted to changes in their environment.

Archaic Hunter-Gatherers of North America

With the retreat of the North American glaciers, the environments, plants, animals, and people of the temperate and boreal latitudes changed significantly. Archaic hunter-gatherers exploited new options in their much-altered environments, which no longer included megafauna (see Fig. 13-9). In eastern North America, dense forests of edible nut-bearing trees spread across the midcontinent and offered rich resources that attracted humans and other animals. Along the coasts, rising sea levels submerged tens of thousands of square miles of low-lying coastlines, creating rich new estuarine and marine environments that Archaic people exploited with a broad array of gear, including fishing equipment, dugout boats, traps, weirs, and nets. The main killing weapon used by these hunter-gatherers was the spear and spear-thrower, or atlatl (see p. 298); the bow and arrow came much later, dating to around 1,800–1,500 ya in much of the continental United States.

In many parts of North America, Archaic hunter-gatherer lifeways were more of the collector type than the forager type common among their Paleo-Indian ancestors. These collectors scheduled subsistence activities to coincide with the annual availability of particularly productive resources at specific locations within their territories. They lived in smaller and more circumscribed territories and acquired, through exchange with neighbors or more distant groups, whatever might be lacking locally. Especially in temperate regions, efficient exploitation of edible nuts, deer, fish, shellfish, and other forest and riverine products was enhanced by new tools, storage techniques, and regional exchange networks. The density of sites and their average size and permanence increased in many localities. Toward the end of the Archaic period, the archaeological remains of some sites exhibit signs of more complex sociopolitical organization, religious ceremonialism, and economic interdependence than had ever existed before.

Archaic Cultures of Western North America

Rock-shelters in the Great Basin, a harsh arid expanse between the Rocky Mountains and the Sierra Nevadas, preserve material evidence of Desert Archaic lifeways (D’Azevedo, 1986). Hunting weapons, milling stones, twined and coiled basketry, nets, mats, feather robe fragments, fiber sandals and hide moccasins, bone tools, and even gaming pieces are sealed in deeply stratified sites, such as Gatecliff Shelter (Thomas et al., 1983) and Lovelock Cave in Nevada and Danger Cave in Utah, and illuminate nearly 10 millennia of hunting and gathering. Coprolites occasionally found in these deposits contain seeds, insect exoskeletons, and often the tiny scales and bones of fish, rodents, and amphibians, all of which give important information about diet and health (Reinhard and Bryant, 1992). Freshwater and brackish marshes were focal points for many subsistence activities, serving as sources of fish, migratory fowl, plant foods, and raw materials during half the year, but upland resources such as pine nuts and game were important, too. Larger animals might be taken occasionally, though smaller prey, such as jackrabbits and ducks, and the seasonal medley of seed-bearing plants afforded these foragers their most reliable diet. Success in this environment was a direct measure of cultural flexibility (Fig. 13-17).

Prehistoric societies throughout California’s varied environments likewise sustained themselves without agriculture (McBrinn, 2010). Rich oak forests fed much of the region’s human and animal population. Hunter-gatherers routinely ranged across several productive resource zones, from seacoast to interior valleys. Typical California societies, such as the Chumash of the Santa Barbara coast and Channel Islands, obtained substantial harvests of acorns and deer in the fall, supplemented by migratory fish, small game, and plants throughout the year (Glassow, 1996). Collected wild resources sustained permanent villages of up to 1,000 inhabitants. The Chumash were the latest descendants of a long sequence of southern California hunter-gatherers that archaeologists have traced back more than 8,000 years (Moratto, 1984). As environmental fluctuations and population changes necessitated adjustments in coastal and terrestrial resources, Archaic Californians at times ate more fish and shellfish, then more sea mammals or deer, and later more acorns and smaller animal species.

Archaeological and cultural anthropological studies along the northwestern coast of the United States and Canada have identified other impressive nonfarming societies whose economies also centered on collecting rich and diverse sea and forest resources. Inhabitants of this region caught, dried, and stored salmon as the fish passed upriver from the sea to spawn each spring or fall.

Berries and wild game such as bear and deer were locally plentiful; oily candlefish, halibut, and whales could be captured with the aid of nets, traps, large seaworthy canoes, and other well-crafted gear. Excavations at Ozette, on Washington’s Olympic Peninsula, revealed a prosperous Nootka whaling community buried in a mud slide 250 years ago (Samuels, 1991).

By historical times along the northwestern coast, clan-based lineages resided in permanent coastal communities of sturdy plank-built cedar houses, guarded by carved cedar totem poles proclaiming their owners’ genealogical heritage. They vied with one another for social status by staging elaborate public functions (now generally known as the potlatch) in which quantities of smoked salmon, fish or whale oil, dried berries, cedar-bark blankets, and other valuables were bestowed upon guests (Jonaitis, 1988). There are archaeological signs that these practices may be quite ancient, with substantial houses, status artifacts, and evidence of warfare dating back some 2,500 years (Ames and Maschner, 1999). While a successful potlatch earned prestige for the hosts and incurred obligations to be repaid in the future, it also served larger purposes. By fostering a network of mutual reciprocity that created both sociopolitical and economic alliances, this ritualized redistribution system ensured a wider availability of the region’s dispersed resources. As a result, highly organized sedentary communities in the Pacific Northwest prospered without relying on domesticated crops.

Farther to the north, western Arctic Archaic bands pursued coastal sea mammals or combined inland caribou hunting with fishing; however, their diet included virtually no plant foods (McGhee, 1996). Beginning about 2,500 ya, Thule (Inuit/Eskimo) hunters expanded eastward across the Arctic with the aid of a highly specialized tool kit that included effective toggling harpoons for securing sea mammals; blubber lamps for light, cooking, and warmth; and sledges and kayaks (skin boats) for transportation on frozen land or sea.

Archaic Cultures of Eastern North America

Locally varied environments across eastern North America supported a range of Archaic cultures after about 10,000 ya. A general warming and drying trend lasting several thousand years promoted deciduous forest growth as far north as the Great Lakes. Archaic societies exploited the temperate oak-hickory forests of the Midwest and the oak-chestnut forests and rivers of the Northeast and Appalachians.

Nuts of many kinds were an important staple for these forest groups. Acorn, chestnut, black walnut, butternut, hickory, and beechnut represent plentiful foods that are both nutritious and palatable, rich in fats and oils, and above all easily stored. Some nuts were prepared by parching or roasting, others by crushing and boiling into soups; leaching in hot water neutralized the toxic tannic acids found in acorns. Whitetail deer and black bear provided meat, hides for clothing, and bone and antler for toolmaking. Other important food items included migratory fowl, wild turkey, fish, turtles, and small mammals such as raccoons, as well as berries and seeds.

In New England, some coastal Archaic groups from Massachusetts to Labrador used canoes to hunt sea mammals and swordfish with bone-bladed harpoons in summer, then relied on caribou and salmon the rest of the year (Snow, 1980). North of the St. Lawrence River, other Archaic bands dispersed widely through the sparse boreal forests, hunting caribou or moose, fishing, and trapping.

In the Midwest around the Great Lakes, seasonally mobile collectors employed an extensive array of equipment to fish, hunt, and gather. The productive valleys of the midcontinent, where several great rivers flow into the Mississippi, supported a riverine focus. Favored sites in this region attained substantial size and were occupied for many generations, some (such as Eva and Rose Island, in Tennessee, and Koster, in southern Illinois) for thousands of years. Trade networks moved valued raw materials and finished goods hundreds, and in some cases thousands, of miles; tools of soft native copper from northern Minnesota and Wisconsin have been found in Archaic sites as far away as Georgia and Mississippi, and marine shells from the Gulf Coast have been uncovered in western Great Lakes sites.

Eastern Archaic groups at times reinforced their claims to homelands by laying out cemeteries for their dead or by erecting earthwork mounds. These activities imply an emerging social differentiation within some of the preagricultural Archaic societies as well as a degree of sedentism. Archaeologists studying more than 1,000 Archaic burials at Indian Knoll, in Kentucky (Webb, 1974), found possible status indicators reflected in the distribution of grave goods. Though two-thirds of the graves contained no offerings at all, certain females and children had been given disproportionate shares; only a few males were buried with tools and weapons (Rothschild, 1979).

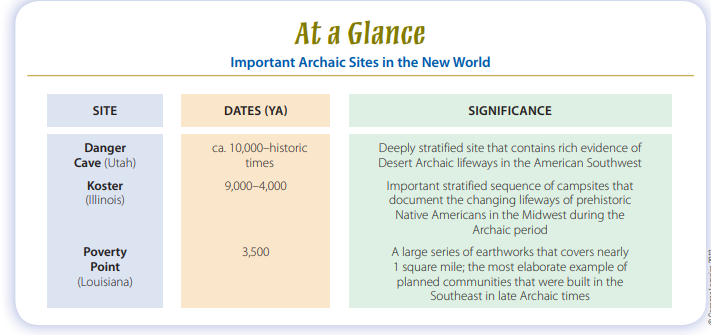

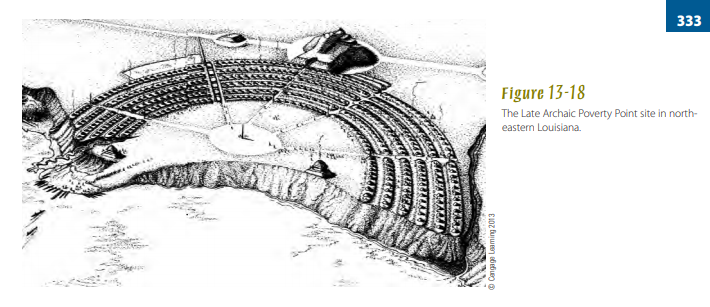

Archaic people in the Lower Mississippi Valley began raising monumental earthworks as early as 5,500 ya. Mound building required communal effort and planning. Near Monroe, Louisiana, Watson Brake is a roughly oval embankment enclosing a space averaging 750 feet across and capped by about a dozen individual mounds up to 24 feet in height (Saunders, 2010). Not far to the east, Poverty Point’s elaborate 3,500-year-old complex of six concentric semicircular ridges is flanked by a large platform mound on its west side (Fig. 13-18), plus several nearby mounds, and covers a full square mile (Kidder et al., 2008). That hunter-gatherers chose to invest their energies in creating these planned earthworks with public spaces and dozens of other impressive structures suggests highly developed Archaic social organization and ritualism, though their precise meaning remains unclear.

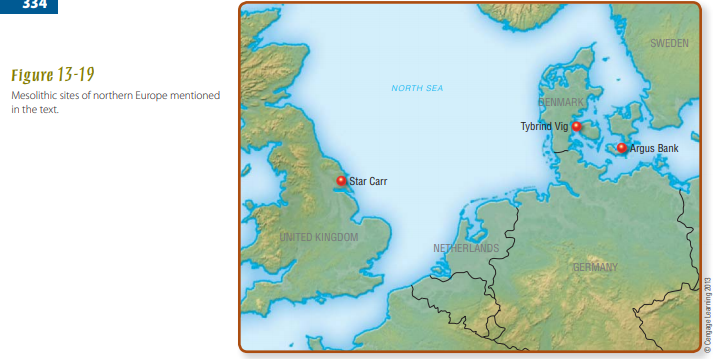

Mesolithic of Northern Europe

As in North America, people colonized Europe’s northern reaches as the glacial ice retreated (Jochim, 1998). Rising waters began to reclaim low-lying coastlines, flooding the North and Baltic seas and burying Paleolithic and Mesolithic sites in the process (Fischer, 1995). On land, temperate plant and animal species succeeded their Ice Age counterparts. Across northwestern Europe, as grasses and then forests invaded the open landscape, red deer, elk, and aurochs replaced the reindeer, horse, and bison of Pleistocene times. Human hunters, armed with efficient weapons, accommodated themselves to the relatively low carrying capacity of the northern regions (where plant foods, at least, were seasonally scarce) by eating more meat and fish.