Lunchtime on a late summer day 20,000 years ago

in the southwestern part of what is now France: A

small group of boys have been playing since midmorning, exploring the caves that are common in

their region, looking for old stone tools that have

been left behind by hunting parties. They are starting to get

hungry. They do not head back to their village for food: The

morning and evening meals will be provided by their parents and other adults in the tribe, but they are on their own

between those two meals.

At this time of year, the boys do not mind foraging on

their own. The summer has been rainy and warm, and a

large variety of nuts, berries, and seeds are beginning to

ripen. Because the summer growing season has been a

good one, small game such as rabbits and squirrels are well

fed and will make a good meal if the boys can manage to

catch one. They spend an hour or two moving from site

to site where food can be found, covering a couple of miles

in the process. They see a rabbit and spend 20 minutes

very quietly trying to sneak up on it before realizing that it

is no longer in the area. Even without the rabbit, they are

all happy with the amount of food they managed to find

during their midday forage. In mid-afternoon, they stop by a

stream for a rest, and then one by one they fall asleep.

Lunchtime on a late summer day in the early twenty-first century, at a middle school in the United States:

A large group of children line up in the cafeteria to get their

lunch. They have spent the morning behind desks, doing

their school work. They have had one short recess, but

they will not have another during the afternoon. They have

a physical education class only once a week because budget cutbacks have meant that their school can afford only

one gym teacher for more than 1,200 students.

As the children pass through the cafeteria line, most of

them ignore the fruit, vegetables, and whole-wheat breads.

Instead, they choose foods high in fat, salt, and sugar:

chicken nuggets, fries, and cake. The children do not drink

the low-fat milk provided but instead favor sweet sodas

and fruit-flavored drinks. After they sit down, the children

have 15 minutes to finish their meals. Most of them would

say that they really like the food the cafeteria gives them.

When they are finished, they return to their classrooms for

more instruction.

At first glance, children in developed countries in the early twenty-first century are much

healthier than their counterparts who lived 20,000 years ago. They are bigger and more

physically mature for their age, and unlike their Paleolithic ancestors, they can reasonably

expect to live well into their 70s. They have been vaccinated against several potentially life-threatening viral illnesses, and they need not worry that a small cut, a minor broken bone,

or a toothache will turn into a fatal bacterial infection. They are blissfully free of parasites.

On the other hand, a child from 20,000 years ago might have grown up more slowly

than a contemporary child, but upon reaching adulthood he would have had a strong,

lean body, with much more muscle than fat. He would not have spent a lifetime consuming more calories than he expended. If he were lucky enough to avoid infectious disease,

injury, and famine, in his middle and old age he would have been less likely to suffer from heart disease, high blood pressure, diabetes, and even some kinds of cancer than

would an adult living today.

Health and illness are fundamental parts of the human experience. The individual experience of illness is produced by many factors. Illness is a product of our

genes and culture, our environment and evolution, the economic and educational

systems we live under, and the things we eat. When we compare how people live now

to how they lived 20,000 years ago, it becomes apparent how difficult it is to define a healthful environment. Is it the quantity of life (years lived) or the quality that matters

most? Are we healthier living as our ancestors did, even though we cannot re-create

those past environments, or should we rejoice in the abundance and comfort that a

steady food supply and modern technology provide us?

In this chapter, we will look at many aspects of human health from both biocultural and evolutionary perspectives, and we will see how what we can learn from the

skeleton about life, health, and disease is used by forensic anthropologists in recent

criminal cases. We will see how health relates to growth, development, and aging.

We will consider infectious disease and the problems associated with evolving biological solutions to infectious agents that can also evolve. We will look at the interaction

between diet and disease and the enormous changes in our diet since the advent of

modern agriculture. Finally, we will see how the skeleton retains clues about our lives

and deaths that can be used to solve criminal cases.

Biomedical Anthropology and the Biocultural Perspective

Biomedical anthropology is the subfield of biological anthropology concerned with

issues of health and illness. Biomedical anthropologists bring the traditional interests

of biological anthropology—evolution, human variation, genetics—to the study of

medically related phenomena. Like medicine, biomedical anthropology is a biological

science, which relies on empiricism and hypothesis testing and, when possible, experimental research to further the understanding of human disease and illness. Biomedical anthropology is also like cultural medical anthropology in its comparative outlook,

and its attempt to understand illness in the context of specific cultural environments.

A central concept of biomedical anthropology is adaptation. As we have discussed in previous chapters, an adaptation is a feature or behavior that serves over

the long term to enhance fitness in an evolutionary sense. But we can also look at

adaptation in the short term; this is known as adaptability (Chapter 6). A basic question biomedical anthropologists try to answer is: To what extent is adaptability itself

an adaptation? For example, the life history stages that all people go through have

been shaped by natural selection, but our biology must be flexible enough to cope

with the different environmental challenges we will face over a lifetime.

In addition to an adaptation-based evolutionary approach, many biomedical anthropologists look at health from a biocultural perspective. The biocultural approach

recognizes that when we are looking at something as complex as human illness, both

biological and cultural variables offer important insights. The biocultural view recognizes that human behavior is shaped by both our evolutionary and our cultural

histories and that, just as human biology does, our behavior influences the expression of disease at both the individual and population levels (Wiley, 2004; Wiley and

Allen, 2009).

An example of an illness that can be understood only in light of both biology and

culture is anorexia nervosa, a kind of self-starvation in which a person fails to maintain

a minimal normal body weight, is intensely afraid of gaining weight, and exhibits disturbances in the perception of his or her body shape or size (Figure 15.1) (American

Psychiatric Association, 1994). The anorexic person fights weight gain by not eating, purging (vomiting) after eating, or exercising excessively. The prevalence rate

for anorexia is about 0.5%–1.0% among teenage girls and young women; about 90% of

all sufferers are female. Anorexia is a serious illness with both long- and short-term

increases in mortality. For example, at 6–12 years’ follow-up, the mortality rate is

9.6 times the expected rate (Nielsen, 2001).

The thin ideal of female attractiveness often is thought to be a cultural stress

leading to the development of anorexia. Studies conducted among teenage girls in

Fiji have shown that the introduction of television (with Western programming) in

1995 led to an increased concern with maintaining a thin body and increased rates

of dieting and purging (Becker et al., 2007). Anorexia in some non-Western cultures

takes a somewhat different form, however. Anorexia patients in Hong Kong do not

have the “fat phobia” we associate with Western anorexia, but rather exhibit a generalized avoidance of eating (Katzman and Lee, 1997). This indicates that even though

anorexia is not limited to Western cultures, the focus on fat (rather than a more generalized obsession with self-control) is shaped by the Western cultural concerns with

obesity, thinness, and weight loss.

Most young women maintain their body weight without starving themselves,

habitually purging, or even dieting. In a 1-year longitudinal study of the eating

and dieting habits of 231 American adolescent girls, medical anthropologist Mimi

Nichter and colleagues (1995) showed that most of the subjects maintained their

weight by watching what they ate and trying to follow a healthful lifestyle rather than

taking more extreme measures. Anthropological studies such as this are important

because they show what the healthy population is doing, which clinicians are less interested in, and

because they help to provide a biocultural context for the expression of disease.

Birth, Growth, and Aging

All animals go through the processes of birth, growth, and aging. Normal growth

and development are not medical problems per se, but the process of growth is

a sensitive overall indicator of health status (Tanner, 1990). Therefore, studies of

growth and development in children provide useful insights into the nutritional or

environmental health of populations.

Human Childbirth

Nothing should be more natural than giving birth. After all, the survival of the species

depends on it. However, in industrialized societies birth usually occurs in hospitals. Of

the more than 4 million births in the United States in 2000, more than 90% occurred in

hospitals; in 2007, 31.8% of all American births were Cesarean deliveries (Martin et al.,

2010). This rate is not extraordinary among developed countries: it is somewhat higher

than those seen in Europe, but lower than rates in many parts of China and Latin

America (Betrán et al., 2007). In 1900, only 5% of U.S. births occurred in a hospital

(Wertz & Wertz, 1989). At that time, given the high risk of contracting an untreatable

infection, hospitals were seen as potentially dangerous places to give birth.

Human females are not that much larger than chimpanzee females, yet they give

birth to infants whose brains are nearly as large as the brain of an adult chimpanzee

and whose heads are very large compared with the

size of the mother’s pelvis. The easiest evolutionary solution to this problem would be for women

to have evolved larger pelves, but too large a pelvis

would reduce bipedal efficiency. Wenda Trevathan

(1999) points out that the shape as well as the size

of the pelvis is a critical factor in the delivery of a

child. Not only is there a tight fit between the size

of the newborn’s head and the mother’s pelvis, but

the baby’s head and body must rotate or twist as

they pass through the birth canal, which is a process that introduces other dangers (such as the

umbilical cord wrapping around the baby’s neck).

In contrast to humans, birth is easy in the great

apes. Their pelves are substantially larger relative

to neonatal brain size, and the shape of their quadrupedal pelves allows a more direct passage of the

newborn through the birth canal (Figure 15.2).

In traditional cultures, women usually give

birth with assistance from a midwife (almost

always a woman). Trevathan observes that although women vary across cultures in their

reactions to the onset of labor, in almost all

cases, the reaction is emotion-charged and

results in the mother seeking assistance from

others. She hypothesizes that this behavior

is a biocultural adaptation. A human birth is

much more likely to be successful if someone

is present to assist the mother in delivery. Part

of the assistance is in actually supporting the

newborn through multiple contractions as it

passes through the birth canal, but much recent research has shown that the emotional

support of mothers provided by birth assistants

is also of critical importance (Klaus & Kennell,

1997). Such emotional support often is lacking

in contemporary hospital deliveries, although

there has been some effort in recent years to

remedy this situation (Figure 15.3). Recent research has shown that birth for large-brained

Neandertal babies was just as difficult as for

modern humans (Ponce de León et al., 2008). It is interesting to consider the

possibility that Neandertal mothers may have also received support from kin and

others during birth.

Patterns of Human Growth

The study of human growth and development is known as auxology. All animals go

through stages of growth that are under some degree of genetic control. However,

the processes of growth and development can be acutely sensitive to environmental conditions. Thus, patterns of growth that emerge under different environmental

conditions can provide us with clear examples

of biological plasticity (Mascie-Taylor and Bogin,

1995) (Chapter 6).

We chart growth and development using

several different measures including height,

weight, and head circumference. Cognitive

skills, such as those governing the development of language, also appear in a typical

sequence as the child matures. We can also

assess age by looking at dentition or sexual

reproductive capacity. Different parts of the

body mature at different rates (Figure 15.4).

For example, a nearly adult brain size is

achieved very early, whereas physical and

reproductive maturation all come later in

childhood and adolescence.

Stages of Human Growth

In the 1960s, Adolph Schultz (1969) proposed a

model of growth in primates that incorporated

four stages shared by all primates. This model is

presented in Figure 15.5. In general, as life span

increases across primate species, each stage of

growth increases in length as well.

The Prenatal or Gestational Stage The first stage of growth is the prenatal or gestational stage. This begins with conception and ends with the birth of

the newborn. As indicated in Figure 15.5, gestational length increases across

primates with increasing life span but is not simply a function of larger body

size. Gibbons have a 30-week gestation, compared with the approximately

25-week gestation of baboons, even though gibbons are much smaller. Growth during the prenatal period is extraordinarily rapid. In humans, during the embryonic

stage (first 8 weeks after conception), the fertilized ovum (0.005 mg) increases in

size 275,000 times. During the remainder of the pregnancy (the fetal period), growth

continues at a rate of about 90 times the initial weight (the weight at the end of the

embryonic stage) per week, to reach a normal birth weight of about 3,200 g.
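The figures in this paragraph can be checked with a little arithmetic. The following is a minimal sketch in Python that uses only the rounded numbers given above; the function names are illustrative, and the assumption of a constant weekly gain is a simplification rather than a model of real fetal growth.

```python
# Figures taken from the paragraph above; all are rounded textbook values.
OVUM_MG = 0.005            # weight of the fertilized ovum, in milligrams
EMBRYONIC_FOLD = 275_000   # fold increase in size during the embryonic stage
WEEKLY_MULTIPLE = 90       # weekly gain, as a multiple of the end-of-embryonic weight
BIRTH_WEIGHT_G = 3_200     # normal birth weight, in grams


def embryo_weight_g(ovum_mg=OVUM_MG, fold=EMBRYONIC_FOLD):
    """Weight at the end of the embryonic stage, converted from mg to g."""
    return ovum_mg * fold / 1_000


def weekly_gain_g(start_g, multiple=WEEKLY_MULTIPLE):
    """Weekly fetal weight gain in grams, as a multiple of the starting weight."""
    return start_g * multiple


def weeks_to_birth_weight(start_g, gain_g, birth_g=BIRTH_WEIGHT_G):
    """Weeks of constant weekly gain (an assumption) needed to reach birth_g."""
    return (birth_g - start_g) / gain_g


start = embryo_weight_g()
gain = weekly_gain_g(start)
print(f"End of embryonic stage: {start:.3f} g")
print(f"Fetal gain per week:    {gain:.2f} g")
print(f"Weeks to reach {BIRTH_WEIGHT_G} g: {weeks_to_birth_weight(start, gain):.1f}")
```

The first result, 1.375 g at the end of the embryonic stage, follows directly from 0.005 mg multiplied by 275,000. Because the inputs are rounded, the remaining output is best read as a rough consistency check on the scale of fetal growth.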

Although protected by the mother both physically and by her immune system,

the developing embryo and fetus are highly susceptible to the effects of some

substances in their environment. Substances that cause birth defects or abnormal

development of the fetus are known as teratogens. The most common human

teratogen is alcohol. Fetal alcohol syndrome (FAS) is a condition seen in children that

results from “excessive” drinking of alcohol by the mother during pregnancy. At this

point, it is not exactly clear what the threshold for excessive drinking is or whether

binge drinking or a prolonged low level of drinking is worse for the fetus (Thackray

and Tifft, 2001). Nonetheless, it is clear that heavy maternal drinking can lead to

the development of characteristic facial abnormalities and behavioral problems in

children. It is estimated that between 0.5 and 5 in 1,000 children have some form

of alcohol-related birth defect. Although they are not teratogens, other substances

in the environment may affect the developing fetus. Pollutants such as lead and

polychlorinated biphenyls may cause low birth weight and other abnormalities.

Infancy, Juvenile Stage, Adolescence, and Adulthood Schultz defined the

three stages of growth following birth—infancy, juvenile stage, and adulthood—

with reference to the appearance of permanent teeth. Infancy lasts from birth until

the appearance of the first permanent tooth. In humans, this tooth usually is the

lower first molar, and it appears at 5–6 years of age. The juvenile stage begins at this

point and lasts until the eruption of the last permanent tooth, the third molar,

which can occur anywhere between 15 and 25 years of age. Adulthood follows the

appearance of the last permanent tooth.

Tooth eruption patterns provide useful landmarks for looking at stages of growth

across different species of primates, but they do not tell the whole story. Besides

length of stages, there is much variation in the patterns of growth and development

in primate species. Barry Bogin (1999) suggests that the four-stage model of primate

growth is too simple and does not reflect patterns of growth that may be unique to

humans. In particular, he argues that during adolescence, humans have a growth spurt

that reflects a species-specific adaptation.

Bogin places the end of the juvenile period, and the beginning of adolescence,

at the onset of puberty. The word puberty literally refers to the appearance of pubic

hair, but as a marker of growth it refers more comprehensively to the period during

which there is rapid growth and maturation of the body (Tanner, 1990). The age

at which puberty occurs is tremendously variable both within and between populations, and even within an individual, different

parts of the body may mature at different rates

and times. Puberty tends to occur earlier in girls

than boys. In industrialized societies, almost all

children go through puberty between the ages

of 10 and 14 years (Figure 15.6).

During adolescence, maturation of the primary and secondary sexual characteristics continues. In addition, there is an adolescent growth

spurt. According to Bogin (1993, 1999), the

expanding database on primate maturation

patterns indicates that the adolescent growth

spurt—and therefore adolescence—is most pronounced in humans. Why do we need adolescence? The length of the juvenile stage, most of

which occurs after brain size has reached adult

proportions, varies widely among mammal species. There is a cost to a prolonged juvenile stage

because it delays the onset of full sexual maturity and the ability to reproduce. But the juvenile stage is also necessary as a training period

during which younger animals can learn their

adult roles and the social behaviors necessary to survive and reproduce within their own species. The evolutionary costs of delaying

maturation are offset by the benefits of social life. Among mammals, the juvenile

stage is longest in highly social animals, such as wolves and primates. Humans are

the ultimate social animal. Bogin argues that the complex social and cultural life of

humans, mediated by language, requires an extended period of social learning and

development: adolescence.

The Secular Trend in Growth

One of the most striking changes in patterns of growth identified by auxologists is

the secular trend in growth. By using data collected as long ago as the eighteenth century, they demonstrated that in industrialized countries, children have been growing larger and maturing more rapidly with each passing decade, starting in the late

nineteenth century in Europe and North America (Figure 15.7). The secular trend

started in Japan after World War II, and it is just being initiated now in parts of the

developing world. In Europe and North America, since 1900, children at 5 to 7 years

of age averaged an increase in stature of 1 to 2 cm per decade (Tanner, 1990). In

Japan between 1950 and 1970, the increase was 3 cm per decade in 7-year-olds and

5 cm per decade in 12-year-olds. A more recent secular trend in growth has been seen

in South Korea, where surveys of children conducted between 1965 and 2005 show

a continuing increase in both height and weight (Kim et al., 2008). Twenty-year-old

Korean men were 5.3 cm taller and 12.8 kg heavier than their 1965 counterparts;

women were 5.4 cm taller and 4.1 kg heavier. The onset of puberty was clearly earlier

in the 2005 group, since the greatest differences from the 1965 group were seen in

the 10–15-year-old age groups.
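To compare the South Korean figures with the per-decade rates quoted for Europe, North America, and Japan, the 40-year totals can be converted to average change per decade. The sketch below, in Python, assumes the change was spread evenly across the 1965 to 2005 interval, which the survey data may not support; the function name and dictionary labels are illustrative.

```python
def change_per_decade(total_change, start_year, end_year):
    """Average change per decade, assuming the change was spread evenly."""
    decades = (end_year - start_year) / 10
    return total_change / decades


# Differences between 20-year-olds in 2005 and in 1965 (Kim et al., 2008),
# as reported in the paragraph above.
korea_changes = {
    "men, height (cm)": 5.3,
    "men, weight (kg)": 12.8,
    "women, height (cm)": 5.4,
    "women, weight (kg)": 4.1,
}

for label, change in korea_changes.items():
    rate = change_per_decade(change, 1965, 2005)
    print(f"{label}: {rate:.2f} per decade")
```

On this even-spread assumption, the height gain for Korean 20-year-olds averages a little over 1.3 cm per decade, which falls within the 1 to 2 cm per decade range reported for younger children in Europe and North America.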

The secular trend in growth undoubtedly is a result of better nutrition (more

calories and protein in the diet) and a reduction in the impact of diseases during

infancy and childhood. We find evidence for this over the short term from migration

studies, which have shown that changes in the environment (from a less healthful

to a more healthful environment) can lead to the development of a secular trend

in growth. Migration studies look at a cohort of the children of migrants born and

raised in their new country and compare their growth with either their parents’

growth (if the children have reached adulthood) or that of a cohort of children in

the country from which they emigrated. Migration studies of Mayan refugees from Guatemala to the United States show evidence of a secular trend in growth (Bogin,

1995). Mayan children raised in California and Florida were on average 5.5 cm taller

and 4.7 kg heavier than their counterparts in Guatemala. Although the secular trend

in growth appears to highlight a straightforward relationship between increased stature and industrialization, the stature each individual achieves is the result of the

complex interaction of genetics, economic status, and nutrition.

Menarche and Menopause

Another hallmark of the secular trend in growth is a decrease in the age of

menarche—a girl’s first menstrual period—seen throughout the industrialized

world. From the 1850s until the 1970s, the average age of menarche in European and

North American populations dropped from around 16 to 17 years to 12 to 13 years

(Figure 15.8) (Tanner, 1990; Coleman & Coleman, 2002). A comprehensive study of

U.S. girls (sample size of 17,077) found that the age of menarche was 12.9 years for

white girls and 12.2 years for black girls (Herman-Giddens et al., 1997). This does

not reflect a substantial drop in age of menarche since the 1960s.

In cultures undergoing rapid modernization, changes in the age of menarche

have been measured over short periods of time. Among the Bundi of highland

Papua New Guinea, age of menarche dropped from 18.0 years in the mid-1960s to

15.8 years for urban Bundi girls in the mid-1980s (Worthman, 1999). Over the long

term, the rate of decrease in age of menarche in most of the population was in the

range 0.3 to 0.6 years per decade. For urban Bundi girls, the rate is 1.29 years per

decade, which may be a measure of the rapid pace of modernization in their society.
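The rates of decline quoted here are simple ratios: years of decrease in age at menarche divided by decades elapsed. The following Python sketch works through both cases. The midpoint ages (16.5 and 12.5 years) and the 120-year span for Europe and North America are assumptions chosen to represent the ranges given in the text, and the interval computed for the Bundi is an inference from the reported rate, not a figure from the source.

```python
def decline_per_decade(age_before, age_after, years_elapsed):
    """Average decrease in age at menarche, in years per decade."""
    return (age_before - age_after) / (years_elapsed / 10)


def implied_interval_years(age_before, age_after, rate_per_decade):
    """Years between two surveys implied by a reported per-decade rate."""
    return (age_before - age_after) / rate_per_decade * 10


# Europe and North America: roughly 16-17 years in the 1850s to 12-13 years
# in the 1970s. Midpoints and a 120-year span are assumed for illustration.
long_term = decline_per_decade(16.5, 12.5, 120)
print(f"Long-term rate: {long_term:.2f} years per decade")

# Urban Bundi: 18.0 to 15.8 years at a reported 1.29 years per decade.
interval = implied_interval_years(18.0, 15.8, 1.29)
print(f"Implied interval between Bundi surveys: {interval:.1f} years")
```

With these assumed inputs, the long-term rate comes out at about 0.33 years per decade, near the lower end of the 0.3 to 0.6 range, while the Bundi rate corresponds to a drop of 2.2 years over roughly 17 years.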

Menarche marks the beginning of the reproductive life of women, whereas

menopause marks its end. Menopause is the irreversible cessation of fertility that

occurs in all women before the rest of the body shows other signs of advanced aging

(Peccei, 2001a). Returning to Figure 15.5, note that of all the primate

species illustrated, only in humans does a significant part of the life span extend

beyond the female reproductive years. In fact, as far as we know, humans are unique

in having menopause (with the exception of a species of pilot whale). Menopause

has occurred in the human species for as long as recorded history (it is mentioned

in the Bible), and there is no reason to doubt that it has characterized older human

females since the dawn of Homo sapiens. Although highly variable, menopause usually

occurs around the age of 50 years.

At first glance, menopause looks to be a

well-defined, programmed life history stage.

Why does it occur? Jocelyn Peccei (1995)

suggests a combination of factors, including

adaptation, physiological tradeoff, and an artifact of the extended human life span. Some

adaptive models focus on the potential fitness benefits of having older women around

to help their daughters raise their children,

termed the grandmothering hypothesis (Hill &

Hurtado, 1991). Kristen Hawkes (2003) proposes that menopause is the most prominent

aspect of a unique human pattern of longevity and that this pattern has been shaped

largely by the inclusive fitness benefits derived by postmenopausal grandmothers who

contribute to the care of their grandchildren.

There is some empirical support for this idea.

For example, a study of Finnish and Canadian

historical records indicates that women who

had long postreproductive lives had greater

lifetime reproductive success (Lahdenperä

et al., 2004).

Peccei suggests that an alternative to the

grandmothering hypothesis may be more plausible: the mothering hypothesis. She argues that

the postreproductive life span of women allows

them to devote greater resources to the (slowly

maturing) children they already have and that

this factor alone could account for the evolution

of menopause. This hypothesis is supported by

population data from Costa Rica covering maternal lineages dating from the 1500s until the 1900s

(Madrigal and Meléndez-Obando, 2008). These

data showed that the longer a mother lived, the

higher her fitness; however, there was a negative

effect on her daughter’s fitness. Thus there was

support for the mothering hypothesis but not

the grandmothering hypothesis. Clearly, more

research needs to be done in this area. The relationship between maternal longevity and reproductive fitness is complex, and we will need data

from many populations before there is a general

perspective on that relationship in the human

species as a whole.

Aging

Compared with almost all other animal species, humans live a long time, at least as

measured by maximum life span potential (approximately 120 years). But the human

body begins to age, or to undergo senescence, starting at a much younger age. Many

bodily processes actually begin to decline in function at age 20, although the

decline becomes much steeper starting between the ages of 40 and 50 (Figure 15.9).

The physical and mental changes associated with aging are numerous and well

known, either directly or indirectly, to most of us (Schulz & Salthouse, 1999).

Why do we age? We can answer from both the physiological and the evolutionary standpoints (Figure 15.10). From a physiological perspective, several hypotheses or models of aging have been offered (Nesse & Williams, 1994; Schulz & Salthouse,

1999). Some have focused on DNA, with the idea that over the lifetime, the accumulated damage to DNA, in the form of mutations caused by radiation and other

forces, leads to poor cell function and ultimately cell death. Higher levels of DNA repair enzymes are found in longer-lived species, so there may be some validity to this

hypothesis, although in general the DNA molecule is quite stable. Another model

of aging focuses on the damage that free radicals can do to the tissues of the body

(Finkel and Holbrook, 2000). Free radicals are molecules that contain at least one

unpaired electron. They can link to other molecules in tissues and thereby cause

damage to those tissues. Oxygen free radicals, which result from the process of oxidation (as the body converts oxygen into energy), are thought to be the main culprit

for causing the bodily changes in aging. Antioxidants, such as vitamins C and E,

may reduce the effects of free radicals, although it is not clear yet whether they slow

the aging process. Further evidence for the free radical theory of aging comes from

diseases in which the production of the body’s own antioxidants is severely limited.

These diseases seem to mimic or accelerate the aging process.

In wild populations, aging is not a major contributor to mortality: Most animals

die of something besides old age, as humans did before the modern age. Thus, aging

per se could not have been an adaptation in the past because it occurred so rarely in

the natural world (Kirkwood, 2002). Two nonadaptive evolutionary models of aging

are the disposable soma hypothesis (Kirkwood and Austad, 2000) and the pleiotropic gene

hypothesis (Williams, 1957; Nesse and Williams, 1994). Both take the position that old

organisms are not as evolutionarily important as young organisms. The disposable

soma hypothesis posits that it is more efficient for an organism to devote resources

to reproduction rather than maintenance of a body. After all, even a body in perfect shape can still be killed by an accident, predator, or disease. Therefore, organisms are better off devoting resources to getting their genes into the next generation

rather than fighting the physiological tide of aging.

The pleiotropic gene hypothesis has a similar logic, although it comes at the problem from a different angle. As you recall (Chapter 4), pleiotropy refers to the fact that

most genes have multiple phenotypic effects. For all organisms, the effects of natural

selection are more pronounced based on the phenotypic effects of the genes during

the earliest rather than later phases of reproductive life. The simple reason for this is

that a much higher proportion of organisms live long enough to reach the early reproductive phase than the proportion who make it until the late reproductive phase.

For example, imagine that a gene for calcium metabolism helps a younger animal

heal more quickly from wounds and thus increase its fertility (Nesse and Williams,

1994). A pleiotropic effect of that same gene in an older animal might be the development of calcium deposits and heart disease; this “aged” effect has little influence on

the lifetime fitness of the animal. Aging itself may be caused by the cumulative actions

of pleiotropic genes that were selected for their phenotypic effects in younger bodies

but have negative effects as the body ages.
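The reasoning behind this hypothesis rests on how few individuals survive to the late reproductive phase. The toy calculation below, in Python, illustrates that point under a deliberately simple assumption of constant annual mortality. The mortality rate and the two ages are hypothetical values chosen for illustration and do not come from the studies cited.

```python
def fraction_surviving(annual_mortality, years):
    """Fraction of a cohort still alive after `years`, assuming the same
    probability of death every year (a simplifying assumption)."""
    return (1 - annual_mortality) ** years


# Hypothetical values, for illustration only.
ANNUAL_MORTALITY = 0.10   # 10% of animals die each year
EARLY_AGE = 3             # age at the early reproductive phase, in years
LATE_AGE = 15             # age at the late reproductive phase, in years

early = fraction_surviving(ANNUAL_MORTALITY, EARLY_AGE)
late = fraction_surviving(ANNUAL_MORTALITY, LATE_AGE)

print(f"Alive at age {EARLY_AGE}:  {early:.1%}")
print(f"Alive at age {LATE_AGE}: {late:.1%}")
print(f"Ratio of early to late survivors: {early / late:.1f}")
```

Under these hypothetical values, far more animals are alive to express a gene's early effects than its late ones, so a benefit early in life can outweigh a cost that appears only in old age.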

Infectious Diseases and Biocultural Evolution

Our bodies provide the living and reproductive environment for a wide variety of

viruses, bacteria, single-celled eukaryotic parasites, and more biologically complex

parasites, such as worms. As we evolve defenses to combat these disease-causing organisms, they in turn are evolving ways to get around our defenses. Understanding

the nature of this “arms race” and the environments in which it is played out may be

critical to developing more effective treatments in the future.

Infectious diseases are those in which a biological agent, or pathogen, parasitizes or infects a host. Human health is affected by a vast array of pathogens. These

pathogens usually are classified taxonomically (such as bacteria or viruses), by their

mode of transmission (such as sexually transmitted, airborne, or waterborne), or by the

organ systems they affect (such as respiratory or brain infections, or “food poisoning” for the digestive tract). Pathogens vary tremendously in their survival strategies.

Some pathogens can survive only when they are in a host, whereas others can persist

for long periods of time outside a host. Some pathogens live exclusively within a

single host species, whereas others can infect multiple species or may even depend

on different species at different points in their life cycle.

Human Behavior and the Spread of Infectious Disease

Human behavior is one of the critical factors in the spread of infectious disease.

Actions we take every day influence our exposure to infectious agents and determine which of them may or may not be able to enter our bodies and cause an illness.

Food preparation practices, sanitary habits, sex practices, whether one spends time in

proximity to large numbers of adults or children—all these can influence a person’s

chances of contracting an infectious disease. Another critical factor that influences

susceptibility to infectious disease is overall nutritional health and well-being. People

weakened by food shortage, starvation, or another disease (such as cancer) are especially vulnerable to infectious illness (Figure 15.11). For example, rates of tuberculosis in Britain started to decline in the nineteenth century before the bacterium that

causes it was identified or effective medical treatment was developed. This decline

was almost certainly due to improvements in nutrition and hygiene (McKeown, 1979).

Just as individual habits play a prominent role in the spread of infectious disease,

so can widespread cultural practices. Sharing a communion cup has been linked to

the spread of bacterial infection, as has the sharing of a water source for ritual washing before prayer in poor Muslim countries (Mascie-Taylor, 1993). Cultural biases

against homosexuality and the open discussion of sexuality gave shape to the entire

AIDS epidemic, from its initial appearance in gay communities to delays by leaders

in acknowledging the disease as a serious public health problem.

Agriculture Agricultural populations are not necessarily more vulnerable to infectious disease than hunter–gatherer populations. However, larger and denser agricultural populations are likely to play host to all the diseases that affect hunter–gatherer

populations and others that can be maintained only in larger populations. This is the

basis of the first epidemiological transition discussed earlier. For example, when a

child is exposed to measles, his or her immune system takes about 2 weeks to develop

effective antibodies to fight the disease. This means that in order to be maintained in

a population, the measles virus needs to find a new host every 2 weeks; in other words,

there must be a pool of twenty-six new children available over the course of a year to

host the measles virus. This is possible in a large agricultural population but almost

impossible in a much smaller hunter–gatherer population (Figure 15.12).
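
The arithmetic behind the figure of twenty-six can be sketched in a few lines of Python. This is only an illustration of the reasoning in the text: the function name and the 52-week year are assumptions, and the model simplifies transmission to a chain of one host at a time, each carrying the virus for about 2 weeks.

```python
import math

def hosts_needed_per_year(weeks_per_host: float, weeks_per_year: int = 52) -> int:
    """Minimum number of new hosts a pathogen needs each year if each
    host sustains the infection for `weeks_per_host` weeks (hypothetical helper)."""
    if weeks_per_host <= 0:
        raise ValueError("weeks_per_host must be positive")
    # Round up: a partial window still requires a whole new host.
    return math.ceil(weeks_per_year / weeks_per_host)

print(hosts_needed_per_year(2))  # 26
```

A real population would need many more susceptible children than this minimum, because not every infected child passes the virus on to exactly one new host.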

Agricultural and nonagricultural populations also differ in that the former tend

to be sedentary, whereas the latter tend to be nomadic. Large, sedentary agricultural

populations therefore are more susceptible to bacterial and parasitic worm diseases

that are transmitted by contact with human waste products. In addition, many diseases

are carried by water, and agricultural populations are far more dependent on a limited

number of water sources than nonagricultural populations. Finally, agricultural populations often have domestic animals and also play host to a variety of commensal animals, such as rats, all of which are potential carriers of diseases that may affect humans.

Specific agricultural practices may change the environment and encourage the

spread of infectious diseases such as malaria. Slash-and-burn agriculture

leads to more open forests and standing pools of stagnant water. Such pools are an

ideal breeding ground for the mosquitoes that carry the protozoa that cause malaria.

Mobility and Migration The human species is characterized by its mobility. One

price of this mobility has been the transmission of infectious agents from one population to another, leading to uncontrolled outbreaks of disease in the populations

that have never been exposed to the newly introduced diseases. These are referred

to as virgin soil epidemics.

The Black Death in Europe (1348–1350) is one example of just such an outbreak (Figure 15.13). The “Black Death” was bubonic plague, a disease caused by

the bacterium Yersinia pestis. The bacterium is transmitted by the rat flea, which lives

on rats. When the fleas run out of rodent hosts, they move to other mammals, such

as humans. The bacteria can quickly overwhelm the body, causing swollen lymph

nodes (or buboes, hence the name) and, in more severe cases, infecting the

respiratory system and blood. It can kill very quickly. An outbreak of bubonic plague

was recorded in China in the 1330s, and by the late 1340s it had reached Europe.

In a single Italian city, Florence, a contemporary report placed the number dying between March and October 1348 at 96,000. By the end of the epidemic, one-third

of Europeans (25–40 million) had been killed, and the economic and cultural life of

Europe was forever changed.

Similar devastation awaited the native peoples of the New World after

1492 with the arrival of European explorers and colonists. Measles, smallpox,

influenza, whooping cough, and sexually transmitted diseases exacted a huge toll

on native populations throughout North and South America, the Island Pacific,

and Australia. Some populations were completely wiped out, and others had

such severe and rapid population depletion that their cultures were destroyed.

In North America, for example, many communities of native peoples lost up

to 90% of their population through the introduction of European diseases

(Pritzker, 2000). Infectious diseases often reached native communities before

the explorers or colonizers did, giving the impression that North America was an

open and pristine land waiting to be filled.

Infectious Disease and the Evolutionary Arms Race

As a species, we fight infectious diseases in many ways. However, no matter what we

do, parasites and pathogens continuously evolve to overcome our defenses. Over

the last 50 years, it appeared that medical science was gaining the upper hand on

infectious disease, at least in developed countries. However, despite real advances,

infectious agents such as the virus that causes AIDS and antibiotic-resistant bacteria

remind us that this primeval struggle will continue.

The Immune System One of the most extraordinary biological systems that has

ever evolved is the vertebrate immune system, the main line of defense in the fight

against infectious disease. At its heart is the ability to distinguish self from nonself. The

immune system identifies foreign substances, or antigens, in the body and synthesizes

antibodies, a class of proteins known as immunoglobulins, each of which

binds to and helps destroy a specific antigen (Figure 15.14).

The immune system is a complex mechanism that has evolved to deal

with the countless number of potential antigens in the environment. An

example of what happens when just one of the components of the immune

system is not functioning occurs in AIDS. The human immunodeficiency virus

(HIV) that causes AIDS attacks the helper T cells. As mentioned earlier,

the helper T cells respond to antigens by inducing the B lymphocytes to

produce antibodies, leading to the production of phagocytes; when their

function is compromised, the function of the entire immune system is also

compromised. This leaves a person with HIV infection vulnerable to a host

of opportunistic infections, a condition that characterizes the development

of full-blown AIDS.

Cultural and Behavioral Interventions Although the immune

system does a remarkable job fighting infectious disease, it is obviously

not always enough. Even before the basis of infectious diseases was

understood, humans took steps to limit their transmission. Throughout

the Old World, people with leprosy were shunned and forced to live apart

from the bulk of the population. This isolation amounted to quarantine,

in recognition of the contagious nature of their condition.

One of the most effective biocultural measures developed to fight infectious diseases is vaccination. The elimination of smallpox as a scourge of

humanity is one of the great triumphs of widespread vaccination. Smallpox

is a viral illness that originated in Africa some 12,000 years ago and subsequently spread throughout the Old World (Barquet & Domingo, 1997).

It was a disfiguring illness, causing pustulant lesions on the skin, and it

was often fatal. Smallpox killed millions of people upon its introduction

to the New World; in the Old World, smallpox epidemics periodically decimated entire populations. In 180 CE, a smallpox epidemic killed between 3.5 and 7 million

people in the Roman Empire, precipitating the first period of its decline. Crude vaccination practices against smallpox were developed hundreds of years ago, but these often carried a significant risk of developing the disease. During the twentieth century,

modern vaccination methods worked to virtually eliminate this disease (Figure 15.15).

The most recently developed forms of intervention against infectious disease are

drug based. The long-term success of these drugs will depend on the inability of the

infectious agents to evolve resistance to their effects. Overuse of anti-infectious drugs

may actually hasten the evolution of resistant forms by intensifying the selection

pressures on pathogens.
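
The way heavier drug use can speed the spread of resistance can be illustrated with a standard one-locus haploid selection model. This is a minimal sketch, not a model of any real pathogen: the function name, the starting frequency, and the fitness values are hypothetical and chosen only to show the direction of the effect.

```python
def resistant_frequency(p0: float, w_resistant: float, w_susceptible: float,
                        generations: int) -> float:
    """Frequency of a resistant strain after repeated rounds of selection.

    Each generation applies p' = p*w_r / (p*w_r + (1 - p)*w_s),
    where w_r and w_s are relative fitnesses (illustrative values only).
    """
    p = p0
    for _ in range(generations):
        mean_fitness = p * w_resistant + (1 - p) * w_susceptible
        p = p * w_resistant / mean_fitness
    return p

# Hypothetical scenarios: heavier drug use lowers the relative fitness
# of susceptible strains, which intensifies selection for resistance.
light_use = resistant_frequency(0.001, 1.0, 0.9, generations=50)
heavy_use = resistant_frequency(0.001, 1.0, 0.5, generations=50)
print(light_use < heavy_use)  # True
```

Under these assumed values the resistant strain stays a minority after 50 generations of light drug use but is nearly fixed under heavy use.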

Evolutionary Adaptations The immune system is the supreme evolutionary

adaptation in the fight against infectious disease. However, specific adaptations to

disease that do not involve the immune system are also quite common (Jackson,

2000). For example, a class of enzymes known as lysozymes attacks the cell wall

structure of some bacteria. Lysozymes are found in high concentrations in the tear

ducts, salivary glands, and other sites of bacterial invasion.

The sickle cell allele has spread in some populations because it functions as an

adaptation against malaria. Another adaptation to malaria is the Duffy blood group

(see Chapter 6). In Duffy-positive individuals, the proteins Fya and Fyb are found on

the surface of red blood cells. These proteins facilitate entry of the malaria-causing

protozoan Plasmodium vivax. Duffy-negative individuals do not have Fya and Fyb on

the surface of their red blood cells, so people with this phenotype are resistant to

vivax malaria. Many Duffy-negative people are found in parts of Africa where malaria

is common; others, who live elsewhere, have African ancestry.

Diet and Disease

It seems that there are always conflicting reports on what particular parts of our diet

are good or bad for us. Carbohydrates are good one year and bad the next. Fats go in

and out of fashion. From a biocultural anthropological perspective, American attitudes toward diet and health at the turn of the twenty-first century provide a rich source of

material for analysis. However, despite all the confusion about diet, we all have the same

basic nutritional needs. We need energy (measured in calories or kilojoules) for body

maintenance, growth, and metabolism. Carbohydrates, fat, and proteins are all sources

of energy. We especially need protein for tissue growth and repair. In addition to energy,

fat provides us with essential fatty acids important for building and supporting nerve

tissue. We need vitamins, which are basically organic molecules that our bodies cannot

synthesize but that we need in small quantities for a variety of metabolic processes. We

also need a certain quantity of inorganic elements, such as iron and zinc. For example,

with insufficient iron, the ability of red blood cells to transport oxygen is compromised,

leading to anemia. Finally, we all need water to survive.

The Paleolithic Diet

For most of human history, people lived in small groups and subsisted on wild foods

that they could collect by hunting or gathering. Obviously, diets varied in different

areas: Sub-Saharan Africans were not eating the same thing as Native Americans

on the northwest Pacific coast. Nonetheless, S. Boyd Eaton and Melvin Konner

(Eaton & Konner, 1985; Eaton et al., 1999) argue that we can reconstruct an average

Paleolithic diet from a wide range of information derived from paleoanthropology,

epidemiology, and nutritional studies. A comparison of the average Paleolithic and

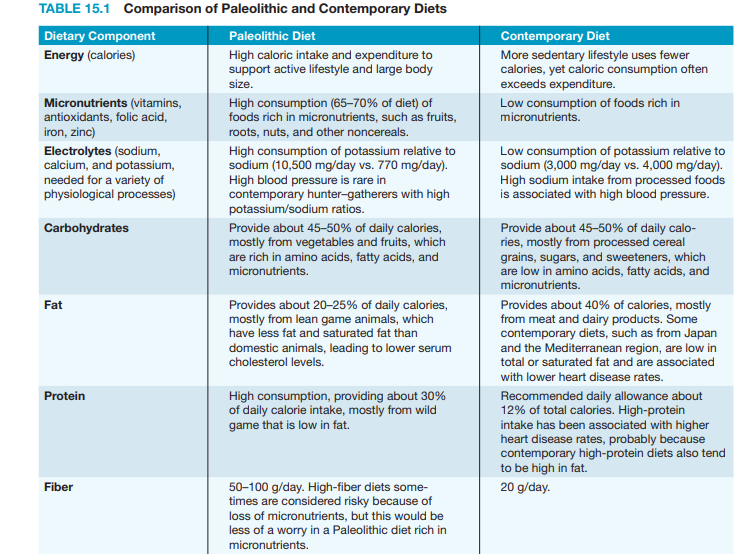

contemporary diets is presented in Table 15.1 (Eaton et al., 1999).

The contemporary diet is not simply a more abundant version of the hunter–

gatherer diet. It differs fundamentally in both composition and quality. Compared

with contemporary diets, the hunter–gatherer diet can be characterized as being

high in micronutrients, protein, fiber, and potassium and low in fat and sodium.

Total caloric and carbohydrate intake is about the same in both diets, but hunter–

gatherers typically were more active than contemporary peoples and thus needed

more calories, and their carbohydrates came from fruits and vegetables rather than

processed cereals and refined sugars.

The comparison between hunter–gatherer and contemporary diets indicates that

increasing numbers of people are living in nutritional environments for which their

bodies are not necessarily well adapted. With few exceptions (such as the evolution

of lactose tolerance) there has not been enough time, or strong enough selection

pressures, for us to develop adaptations to this new nutritional environment. Indeed,

because most of the negative health aspects of contemporary diets (obesity, diabetes,

cancer) become critical only later in life, it is likely that health problems associated

with the mismatch between our bodies and our nutritional environment will be with

us for some time.

Agriculture and Nutritional Deficiency

Agriculture allowed the establishment of large population centers, which in turn

led to the development of large-scale, stratified civilizations with role specialization.

Agriculture also produced an essential paradox: From a nutritional standpoint,

most agricultural people led lives that were inferior to the lives of hunter–gatherers.

Agricultural peoples often suffered from nutritional stress as dependence on a few

crops made their large populations vulnerable to both chronic nutritional shortages

and occasional famines. The “success” of agricultural peoples relative to hunter–

gatherers came about not because agriculturalists lived longer or better lives but because there were more of them.

With their dependence on a single staple cereal food, agricultural populations

throughout the world have been plagued by diseases associated with specific nutritional deficiencies. As in the Illinois Valley, many populations of the New World were

dependent on maize as a staple food crop. Dependence on maize is associated with

the development of pellagra, a disease caused by a deficiency of the B vitamin niacin

in the diet. Pellagra causes a distinctive rash, diarrhea, and mental disturbances, including dementia. Ground corn is low in niacin and in the amino acid tryptophan,

which the body can use to synthesize niacin. Even into the twentieth century, poor

sharecroppers in the southern United States and poor farmers in southern Europe,

both groups that consumed large quantities of cornmeal in their diets, were commonly afflicted with pellagra. Some maize-dependent groups in Central and South

America were not so strongly affected by pellagra because they processed the corn

with an alkali (lye, lime, ash) that released niacin from the hull of the corn.

In Asia, rice has been the staple food crop for at least the last 6,000 years. In

China, a disease we now call beriberi was first described in 2697 BCE. Although it was

not recognized at that time, beriberi is caused by a deficiency in vitamin B1, or thiamine. Beriberi is characterized by fatigue, drowsiness, and nausea, leading to a variety

of more serious complications related to problems with the nervous system (especially

tingling, burning, and numbness in the extremities) and ultimately heart failure. Rice

is not lacking in vitamins; however, white rice, which has been polished and milled to

remove the hull, has been stripped of most of its vitamin content, including thiamine.

Agriculture and Abundance: Thrifty and Nonthrifty Genotypes

The advent of agriculture ushered in a long era of nutritional deficiency for most

people. However, the recent agricultural period, as exemplified in the developed

nations of the early twenty-first century, is one of nutritional excess, especially in terms of the consumption of fat and carbohydrates of little nutritional value

other than calories. The amount and variety of foods available to people in

contemporary societies are unparalleled in human history.

In 1962, geneticist James Neel introduced the idea of a thrifty genotype, a

genotype that is very efficient at storing food in the body in the form of fat,

after observing that many non-Western populations that had recently adopted

a Western or modern diet were much more likely than Western populations

to have high rates of obesity, diabetes (especially Type 2 or non–insulin-dependent diabetes), and all the health problems associated with those conditions (see also Neel, 1982). Populations such as the Pima-Papago Indians in

the southwestern United States have diabetes rates of about 50%, and elevated

rates of diabetes have been observed in Pacific Island-, Asian-, and African-derived populations with largely Western diets (Figure 15.16).

According to Neel, hunter–gatherers needed a thrifty genotype to adapt

to their nonabundant nutritional environments; in contrast, the thrifty

genotype had been selected against in the supposedly abundant European

environment through the negative consequences of diabetes and obesity. The

history of agriculture and nutritional availability in Europe makes the evolution of a nonthrifty genotype unlikely (Allen & Cheer, 1996); Europe was no

more nutritionally favored than other agricultural or hunter–gatherer populations.

However, the notion of a thrifty genotype retains validity. At its heart is the idea that

we are adapted to a lifestyle and nutritional environment far different from those we

find in contemporary populations.

We have seen how growth patterns, infectious disease exposure, nutritional

status, and a host of other health-related issues are fundamentally changed by the

adoption of new cultural practices and technologies. Conditions such as rickets and

diabetes have been called diseases of civilization because they seem to be a direct

result of the development of the industrialized urban landscape and food production. But this label is misleading. Rickets could also be called a disease of migration

and maladaptation to a specific environment. Diabetes could be characterized as a

disease of nutritional abundance, which was certainly not a characteristic of civilization for most of human history.

Biomedical anthropology is interested in understanding the patterns of human

variation, adaptation, and evolution as they relate to health issues. This entails an

investigation of the relationship between our biologies and the environments we live

in. Understanding environmental transitions helps us understand not only the development of disease but also the mechanisms of adaptation that have evolved over

thousands of years of evolution. Change is the norm in the modern world. In the

future, we should expect human health to be affected by these changes. By their

training and interests, biological anthropologists will be in an ideal position to make

an important contribution to understanding the dynamic biocultural factors that

influence human health and illness.

Forensic Anthropology: Life, Death, and the Skeleton

The field of forensic anthropology has achieved recent popularity due in part to

television shows such as CSI and Bones. But like most popularizations, the fantasy is

more glamorous than the reality. Each state has medical examiners or coroners who

are legally responsible for signing death certificates and determining the cause and

manner of death of people not in the care of a doctor. They also have the authority

to consult other experts in their investigations, including forensic anthropologists.

A forensic anthropologist is often consulted in cases in which soft-tissue remains are

absent or badly decomposed.

Forensic anthropologists work with clues about growth, health, disease and

adaptation that are visible in each of our skeletons. These scientists are specialists in human osteology who use the theory and method of

biological anthropology to answer questions about how

recent humans lived and died. They study skeletal remains from crime scenes, war zones, and mass disasters

within the very recent past to reveal the life history of the

individual, to identify that individual, and to understand

something about the context in which death occurred.

They use osteological identification and archaeological field methods to retrieve remains and to develop a

profile of the age, sex, and other biological attributes of

an individual. Because the way the skeleton of a human

or any other animal looks is dictated by its function in life

and its evolutionary history, forensic anthropologists can

reconstruct the probable age, sex, and ancestry of an individual from his or her skeletal remains. They can observe

the influence of certain kinds of diseases on the skeleton,

and they can assess some aspects of what happened to an

individual just before, around the time of, and after his

or her death. Unlike pathologists and medical examiners,

forensic anthropologists bring an anthropological perspective and a hard-tissue

focus to investigations of skeletal remains.

Field Recovery and Laboratory Processing

Forensic investigations rely on good contextual information. Things like body position, relationship to nearby items such as bullets or grave goods, and structures near

the individual require precise and thorough documentation in the field. Without

such documentation, we would not know if the bullet recovered at the scene was

10 feet from the individual, or within the victim’s chest cavity. These associations are

crucial for inferring the meaning of a burial and the circumstances surrounding the

death of an individual. So to ensure full recovery and good contextual information,

we rely on archaeological techniques to find, document, and remove remains from

the site. When the site is identified it is cordoned off and surveyed for additional

remains. Such surveys commonly include an individual or team of investigators walking a systematic path searching for remains, associated items, or evidence of burial

(Figure 15.17).

In the field, any surface discoveries are mapped and photographed. A permanent datum point for the site is established that represents a fixed position from

which everything is measured so that the precise “find spot” of each object can be

relocated in the future. If the remains are buried, the anthropologist will excavate

using archaeological techniques. The excavator begins by skimming off shallow layers of dirt using a hand trowel. Objects are revealed in place and their coordinates,

including their depth, are recorded relative to the grid system (Figure 15.18), and

photographs are taken. The dirt is sieved through fine mesh to ensure that even

the smallest pieces of bone are recovered (Figure 15.19). Soil samples may be saved

to assist in the identification of insects and plants. In the field, the anthropologist

makes a preliminary determination of whether the remains are human or nonhuman (they could be those of a dog or deer, for instance) and, based on the bones,

whether more than one individual is present. Once exposed and mapped, individual

bones are tagged, bagged, and removed to the laboratory.
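
Recording every find relative to the datum makes spatial relationships, such as how far a bullet lay from the remains, easy to recover later. The following is a minimal sketch, assuming each find spot is stored as east, north, and depth offsets in meters from the datum. The `Find` class, its field names, and the example values are hypothetical and do not represent a standard recording system.

```python
from dataclasses import dataclass
import math

@dataclass(frozen=True)
class Find:
    label: str
    east_m: float   # offset east of the datum, in meters
    north_m: float  # offset north of the datum, in meters
    depth_m: float  # depth below the datum, in meters

def distance_m(a: Find, b: Find) -> float:
    """Straight-line distance between two recorded find spots."""
    return math.dist((a.east_m, a.north_m, a.depth_m),
                     (b.east_m, b.north_m, b.depth_m))

# Hypothetical finds measured from the same datum point.
rib = Find("rib fragment", east_m=2.0, north_m=1.0, depth_m=0.5)
bullet = Find("bullet", east_m=5.0, north_m=5.0, depth_m=0.5)
print(distance_m(rib, bullet))  # 5.0
```

Because both positions are measured from the same fixed point, the relationship between them can be recomputed long after the site itself has been disturbed.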

In the lab more detailed curation and examination can begin. A strict

chain of custody is established to ensure that the remains cannot be tampered with,

in case they should become evidence in a court of law. Detailed notes are taken to

demonstrate that the remains in question are those from the scene, that they have

not been contaminated or modified since their removal from the scene, and who has

had access to them.

After the remains are cataloged, they are cleaned of any adhering soft tissue and dirt, and then laid out in anatomical position, the

way they would have looked in the skeleton in life (Figure 15.20).

An inventory is made of each bone present and its condition. Most

adult humans have 206 bones, many of which are extremely small

(see Appendix A). Because most bones develop as several bony centers that fuse together only later in life, fetuses and children have

many more bones than do adults. Often the scientist works with no

more than a few bone fragments.

The Biological Profile

Once the initial inventory has been completed, the scientist sets

about evaluating the clues that the skeleton reveals about the

life and death of the individual. The first step in this process is

constructing the biological profile of the individual, including

determining age, sex, height, and disease status.

Age at Death

As the human body develops, from fetus to old age, dramatic changes occur throughout the skeleton. Scientists use the more systematic of these changes to estimate the

age at death of an individual. However, whenever scientists determine age, they

always report it as a range (such as 35–45 years) rather than as a single definitive

number. This range reflects the variation in growth and aging seen in individuals

and across human populations and denotes the person’s biological rather than

chronological age (age in years). The goal is that the range also encompasses the

person’s actual age at the time of their death.

Because the skeleton grows rapidly during childhood, assessing the age of a

subadult younger than about 18 years of age is easier and often more precise than

estimating the age of an adult skeleton. Virtually all skeletal systems except the small bones of the ear (the ear ossicles) change from newborn to adult. For example,

in small children the degree of closure of the cranial bones (covering the fontanelles, or “soft spots” of the skull) changes with age, as does the development of the

temporal bone, the size and shape of the wrist bones, and virtually every other bone

(Figure 15.21). However, dental eruption and the growth of long bones are the most

frequently used means of assessing subadult age.

Humans have two sets of teeth of different sizes that erupt at fairly predictable

intervals. Which teeth are present can help distinguish between children of different

ages and between older subadults and adults of the same size (Figure 15.22). For

more precise ages, the relative development of the tooth roots can also be used.

However, once most of the adult teeth have erupted, by about the age of 12 years in

humans, the teeth are no longer as good a guide to predicting age.

In these older children, growth of the limb bones can also be used to assess

age. The long bones of the arms and legs have characteristic bony growths at

each end—the epiphyses—which are present as separate bones while the person

is still growing rapidly (Figure 15.23). Most epiphyses are not present at birth—

which helps to separate fetuses from newborns—but appear during infancy and

childhood. The lengths and proportions of bones change in predictable ways as

children grow and are especially good indicators for assessing fetal age (Sherwood

et al., 2000). In older children, the epiphyses start to fuse to the shafts of the

limb bones around the age of 10 in some bones, and fusion of most epiphyses is

completed in the late teenage years. However, the process of fusion may occur as

late as the early twenties in a few bones (such as the clavicle). Depending upon

which bones and which parts of those bones are fused, a reasonably good estimate

of subadult age can be made.

In adults, age is harder to determine because growth is essentially complete.

Some of the last epiphyses to fuse, such as the clavicle and top of the ilium, can be

used to estimate age in young adults in their early twenties. But estimating the age

of the older adult skeleton relies mostly on degeneration of parts of the skeleton.

For example, the pubic symphysis and auricular surface of the innominate, and

the end of the fourth rib near the sternum all show predictable changes with age

(Todd, 1920, 1921; McKern & Stewart, 1957; Iscan et al., 1984; Lovejoy et al., 1985).

Examination of as many of these bones as possible helps to increase the accuracy of age estimates (Bedford et al., 1993). The pubic symphysis is a particularly useful indicator of

adult age, and age standards have been developed separately for males and females (Gilbert & McKern, 1973; Katz & Suchey, 1986; Brooks & Suchey, 1990).

The standards show how the symphysis develops from cleanly furrowed to more

granular and degenerated over time (Figure 15.24). These changes tend to occur

more quickly in females than in males due to the trauma the symphysis experiences during childbirth.

The degree of obliteration of cranial sutures (the junction of the different

skull bones) can also give a relative sense of age—obliteration tends to occur in

older individuals (Lovejoy & Meindl, 1985). The antero–lateral sutures of the skull

are the best for these purposes. However, the correlation between degree of obliteration and age is not very close, and the age ranges that can be estimated are wide.

Sex

If certain parts of the skeleton are preserved, identifying biological sex is easier

than estimating age at death, at least for adults. The two parts of the skeleton that

most readily reveal sex are the pelvis and the skull, and sex characteristics are

more prominent in an adult skeleton than in a child. Humans are moderately sexually dimorphic, with males being larger on average than females (see Table 10.2

on page 258). But their ranges of variation overlap so that size alone cannot separate male and female humans.

The best skeletal indicator of sex is the pelvis. Because of selective pressures

for bipedality and childbirth, human females have evolved pelves that provide a relatively large birth canal (see Chapter 10). This affects the shape of the innominate

and sacrum in females; the pubis is longer, the sacrum is broader and shorter, and

the sciatic notch of the ilium is broader in females than in males (Figure 15.25). The

method is highly accurate (Rogers & Saunders, 1993) because the pelvis reflects directly

the different selective pressures that act on male versus female bipeds. Thus the pelvis

is considered a primary indicator of the sex of the individual. And because the femur

has to angle inward from this wider female pelvis to the knee (to keep the biped’s foot

under its center of gravity; see Chapter 10), the size and shape of the femur also differentiate males and females fairly well (Porter, 1995).

The skull is also a useful indicator of sex, at least in adults. Around puberty, circulating hormones lead to so-called secondary sex characters such as distribution of

body and facial hair. During this time male and female skulls also diverge in shape.

Male skulls are more robust on average than female skulls of the same population.

However, these differences are relative and population dependent; some human

populations are more gracile than others. The mastoid process of the temporal bone

and the muscle markings of the occipital bone tend to be larger in males than in

females, and the chin is squarer in males than in females (Figure 15.25). The browridge is less robust and the orbital rim is sharper in females than in males, and the

female frontal (forehead) is more vertical. These differences form a continuum and

provide successful sex estimates in perhaps 80 to 85% of cases when the population

is known.

Ancestry

Knowing the ancestry of an individual skeleton is important for improving the

accuracy of sex, age, and stature estimates. There is no biological reality to the idea

of fixed biological races in humans (see Chapter 6), but we have learned that the

geographic conditions in which our ancestors evolved influence the anatomy of

their descendants. The term ancestry takes into account the place of geographic

origin, which corresponds to biological realities in ways that the term race does not.

Nonetheless, because of the way in which variation is distributed in humans (there

is more variation within than between groups, and many variation clines run in

directions independent of one another) assessing ancestry from the skeleton is less

accurate than assessing age or sex, and the process is also highly dependent on the

comparative groups used.

Forensic anthropologists base ancestry assessments on comparisons with

skeletal populations of known ancestry. An isolated skull can be measured and

compared using multivariate statistics with the University of Tennessee Forensic

Data Bank of measurements from crania of known ancestry. This process provides

a likely assignment of ancestry and a range of possible error. However, human

variation is such that many people exist in every population whose skulls do not

match well with most other skulls of similar geographic origin. Nonetheless, the

ability to even partially assign ancestry can be useful in several forensic contexts.

Missing person reports often provide an identification of ancestry, and although

this is not based directly on the skeleton, a skeletal determination of ancestry may

suggest a match that could be confirmed by other, more time-consuming means

such as dental record comparisons or DNA analysis (see Innovations: Ancestry and

Identity Genetics on pages 410–411).

Innovations: Ancestry and Identity Genetics

Genetic studies have long

been used for

tracing the histories of

populations (Chapters

6 and 13). As geneticists have discovered

an increasing variety

of markers that are associated with specific

geographical regions

and populations, the

ability to trace individual genetic histories

has increased greatly,

and the ability to make

direct matches to DNA from a crime scene has become an

important forensic technique. In addition, the development

of technologies allowing direct sequencing of DNA regions

quickly and relatively inexpensively means that anyone

can obtain a genetic profile in a matter of a few weeks.

There are two basic approaches to determining personalized genetic histories (PGHs) (Shriver and Kittles,

2004). The first is the lineage-based approach, which is

based on the maternally inherited mtDNA genome

and the paternally inherited Y chromosome DNA. The

lineage-based approach has been very useful for population studies, and allows individuals to trace their ultimate

maternal and paternal origins. For example, African American individuals can find out what part of Africa their founding American ancestors may have come from

(http://www.african-ancestry.com). These are the same techniques

that have been used to consider the dispersal and migration of ancient and recent peoples. For example, in a survey of more than 2,000 men from Asia using more than 32 genetic markers, Tatiana Zerjal and her colleagues (2003)

found a Y chromosome lineage that exhibited an unusual

pattern thought to represent the expansion of the Mongol

Empire. They called this haplotype the star cluster (reflecting the emergence of these similar variants from a common source). The star cluster lineage is found in sixteen

different populations, distributed across Asia from the Pacific Ocean to the Caspian Sea. The MRCA (most recent

common ancestor) for this cluster was dated to about 1,000

years ago, and the distribution of populations in which the

lineage is found corresponds roughly to the maximum extent of the Mongol Empire. The Empire reached its peak

under Genghis Khan (c. 1162–1227), and he and his close

male relatives are said to have fathered many children

(thousands, according to some historical sources).

One additional population outside the Mongol Empire

also has a high frequency of the star cluster: the Hazaras

of Pakistan (and Afghanistan), many of whom through oral

tradition consider themselves to be direct male-line descendants of Genghis Khan. The star cluster is absent from other

Pakistani populations. The distribution of the star cluster

could have resulted from the migration of a group of Mongols

carrying the haplotype or may even reflect the Y chromosome carried specifically by Genghis Khan and his relatives.

From the perspective of determining an individual’s

PGH, however, the lineage-based approach is limited because it traces only the origins of a very small portion of an

individual’s genome and does not reflect the vast bulk of a

person’s genetic history. In contrast to the lineage-based

approach, autosomal marker-based tests use information

from throughout the genome. Ancestry informative markers

(AIMs) are alleles on the autosomal chromosomes that show

substantial variation among different populations. The more

AIMs that are examined in an individual, the more complete

the picture of that individual’s biogeographical ancestry that can be obtained (Shriver and Kittles,

2004). Combining the information from all of these AIMs requires substantial statistical

analysis, which has to take into

account the expression of each

marker and its population associations. There will be some

statistical noise in the system

due to factors such as the overlapping population distribution

of the markers and instances of

convergent evolution. In addition, even when a hundred markers are used, the tests sample only a small portion of your genome, which is the product

of the combined efforts of thousands of ancestors. The biogeographical ancestry of a person, expressed in terms of percentage affiliations with different populations, is a statistical

statement, not a direct rendering of a person’s ancestry. And

both AIMs and lineage-based tests are limited by the comparative samples that form the basis of our knowledge about

the distribution of DNA markers. Thus, if you submit a cheek

swab to several different companies with different comparative databases, you will get somewhat different ancestry results. Nonetheless, these tests provide us with an intriguing

snapshot of the geographic origins of a person’s ancestors.
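
To make the idea of "combining the information from all of these AIMs" more concrete, here is a minimal sketch of one way such an estimate could be computed. It is not the method used by any particular company. It assumes only two source populations (labeled A and B here), markers that are inherited independently, and known allele frequencies in each population. The function names and all of the frequencies and genotypes are hypothetical values chosen for illustration.

```python
import math

def log_likelihood(m, genotypes, freqs_a, freqs_b):
    """Log-likelihood of the genotypes if a fraction m of ancestry comes from population A."""
    total = 0.0
    for g, pa, pb in zip(genotypes, freqs_a, freqs_b):
        # Expected allele frequency for a person with admixture proportion m
        q = m * pa + (1.0 - m) * pb
        q = min(max(q, 1e-9), 1.0 - 1e-9)  # guard against log(0)
        # Binomial probability of carrying g copies (0, 1, or 2) of the allele
        total += math.log(math.comb(2, g)) + g * math.log(q) + (2 - g) * math.log(1.0 - q)
    return total

def estimate_admixture(genotypes, freqs_a, freqs_b, steps=100):
    """Grid search for the proportion m (0 to 1) that best explains the genotypes."""
    grid = [i / steps for i in range(steps + 1)]
    return max(grid, key=lambda m: log_likelihood(m, genotypes, freqs_a, freqs_b))

# Hypothetical allele frequencies at four markers in populations A and B
freqs_a = [0.9, 0.8, 0.1, 0.7]
freqs_b = [0.1, 0.2, 0.9, 0.3]
# Hypothetical individual carrying one copy of the allele at every marker
genotypes = [1, 1, 1, 1]
print(estimate_admixture(genotypes, freqs_a, freqs_b))
```

The result is the single proportion that best fits the observed markers under these assumptions. Changing the reference frequencies changes the answer, which is one reason that different comparative databases can yield different ancestry results for the same person.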

Several commercial companies are now in the ancestry

genetics business. We contacted one of these companies,

DNAPrint Genomics (http://www.AncestryByDNA.com),

and obtained the biogeographical ancestry of two of the

authors of this text, Craig Stanford (CS) and John S. Allen

(JSA). The genetic testing product used is called AncestryByDNA 2.5, which provides a breakdown of an individual’s

PGH in terms of affiliations with four major geographical

groups: European, Native (aka Indigenous) American, Sub-Saharan African, and East Asian. It combines information

derived from about 175 AIMs.

John Allen’s results were: 46% European, 46% East

Asian, 8% Native American, and 0% Sub-Saharan African.

These results squared quite well with his known family history: His mother was Japanese and his father was an American of English and Scandinavian descent. The 8% Native

American could have come from one or more ancestors on

his father’s side (some of whom arrived in the United States

in the early colonial period). However, the 95% confidence intervals of the test indicate that

for people of predominantly

European ancestry, a threshold

of 10% Native American needs

to be reached before the result

is statistically significant. For

people of predominantly East

Asian descent, the threshold is 12.5%. Therefore, in the

absence of a family history of

Native American ancestry, it

is best to consider the 8% as

statistical noise.
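
The threshold reasoning above can be written out as a short check. This is only a sketch: the two threshold values are the ones quoted in the text for a Native American result, while the function and variable names are hypothetical and not part of the testing product.

```python
# Thresholds (in percent) quoted in the text for a Native American result,
# keyed by the person's predominant ancestry
NATIVE_AMERICAN_THRESHOLDS = {"European": 10.0, "East Asian": 12.5}

def reaches_threshold(result_pct, predominant_ancestry):
    """Return True if the reported percentage reaches the quoted threshold."""
    return result_pct >= NATIVE_AMERICAN_THRESHOLDS[predominant_ancestry]

# JSA's 8% result falls below both thresholds
print(reaches_threshold(8.0, "European"))
print(reaches_threshold(8.0, "East Asian"))
```

Because JSA's ancestry is split evenly between European and East Asian, the check is run against both thresholds, and the 8% result fails to reach either one.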

Craig Stanford’s results were: 82% European, 14%

Native American, 4% Sub-Saharan African, and 0% East

Asian. The Native American result, which easily exceeds

the statistical threshold, was a real surprise because CS

has no family history of Native American ancestry. Following this result, his father was tested and was found to have

91% European and 9% Sub-Saharan African ancestry. Thus,

all of CS’s Native American ancestry was derived from his

mother’s side. Although she was not tested, it is reasonable

to conclude that her Native American percentage would

be greater than 25%—the equivalent of a grandparent, although this does not have to represent the contribution of

a single individual. CS found this result to be somewhat

ironic because earlier generations of women on his mother’s

side of the family had been proud members of the Daughters of the American Revolution, a lineage-based organization that was once (but is no longer) racially exclusionary.

Stanford also requested a more detailed European ancestry

genetic test (EuroDNA 1.0). Along with European ancestry,

the tests showed 12% Middle Eastern ancestry. One of his

paternal grandparents was from Italy, and the ancestry of

southern Europeans often reflects population movements

around the Mediterranean Sea, including Middle Eastern

markers. In addition, there has been a long history of some

gene flow from Sub-Saharan Africa into North Africa and

the Middle East, which could explain his father’s statistically significant Sub-Saharan African ancestry.
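
The inference about the Native American ancestry of CS's mother rests on simple arithmetic: on average, a child receives half of his or her autosomal DNA from each parent, so the child's expected percentage is the average of the two parental percentages. The sketch below works backward from that assumption. The function name is hypothetical, and the answer is an expectation rather than a measurement, because each test result carries statistical noise.

```python
def implied_other_parent_pct(child_pct, known_parent_pct):
    """Estimate the untested parent's percentage, assuming the child's value
    is the average of the two parents' values."""
    estimate = 2.0 * child_pct - known_parent_pct
    # Keep the estimate within the possible range of 0 to 100 percent
    return max(0.0, min(100.0, estimate))

# CS: 14% Native American; his father: 0% Native American
print(implied_other_parent_pct(14.0, 0.0))
```

With the values reported in the text, the implied figure for CS's mother is 28%, which is consistent with the statement that her percentage would be greater than 25%.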

Ancestry genetics opens windows to the past, but in

some cases, it raises more questions than answers about

where you came from. This is not surprising because we

know that the pattern of genetic variation across all humans is a complex one that does not partition well into regional or “racial” groups, that most of the genetic variation

within humans exists within rather than between groups,

and that different characteristics often follow cross-cutting

clines. We can attest, however, that for anyone interested

in their own biological ancestry, getting a personalized

genetic history can be an exciting experience. Incidentally,

humans are not the only species whose biological past

can be explored: Genetic ancestry testing for dogs is also

becoming available (http://www.whatsmydog.com) and

paternity testing is available for both cats and dogs

(http://www.catdna.org; http://www.akc.org/dna).

Height and Weight

Physical stature reflects the length of the bones that contribute to a person’s height.

Different body shapes have evolved in response to different climatic pressures (see

the ecological rules described by Bergmann and Allen in Chapter 6). Thus, height

and weight estimates will be more accurate if the population of the individual is

known. These differences in proportions relate to differences in bone lengths; as

a result, some populations will tend to have more of their stature explained by leg

length, and some by torso length, for example.

The best estimates of stature from the skeleton are based on summing the

heights of all the bones in the skeleton that contribute to overall height, including the cranium, vertebral column, limb, and foot bones (Fully, 1956). This so-called Fully method is fairly accurate, but requires a complete skeleton, a rarity in

archaeological or forensic contexts. Biological anthropologists have developed formulae, which vary by population, for estimating stature based on the length of a

single or several long bones, so that the femur, tibia, or even humerus can be used to

predict stature. These methods use the relationship between the limb bones and the

height in skeletal remains of individuals of known stature to predict stature for an