By the end of the last Ice Age, humans

were living in most of the world’s

inhabitable places. They achieved a

global distribution without becoming

multiple species in the process, which

isn’t the way things usually happen

in nature. They were able to do this

because they possessed extraordinary adaptive flexibility as biocultural

organisms. Without such flexibility,

humans might still be restricted to the

tropical and subtropical regions of the

Old World.

The archaeological record provides abundant evidence that the rate

of change in human lifeways accelerated markedly during the past 10,000

years or so. Throughout this long period, human culture accounted for more

and more of the changes in how and

where our ancestors lived. By making

cultural choices and devising new cultural solutions to age-old problems,

human groups succeeded in mitigating some of the processes that operate in the natural world (for example,

starvation due to seasonal food shortages) and either turned them to their

advantage or at least lessened their

worst effects. Humans also discovered that the cost of such cultural solutions included consequences for both

the natural world and human biology.

Chapter 16 will explore these consequences: some were fantastically good;

others weren’t.

Two of the most profound and far-reaching developments of later prehistory were the shift from hunting and

gathering to food production and the

emergence of the early civilizations.

This chapter deals with plant and animal domestication and the associated

spread of farming, both of them integral to the development of the first

civilizations, which we will examine

in Chapter 15. We’ll start by examining competing explanations for the origins of food production. This will give

you a sense of the diverse perspectives from which researchers investigate how and why farming developed

after the end of the last Ice Age. From

there, we’ll discuss the archaeological

evidence for the origins of food production in several regions around the

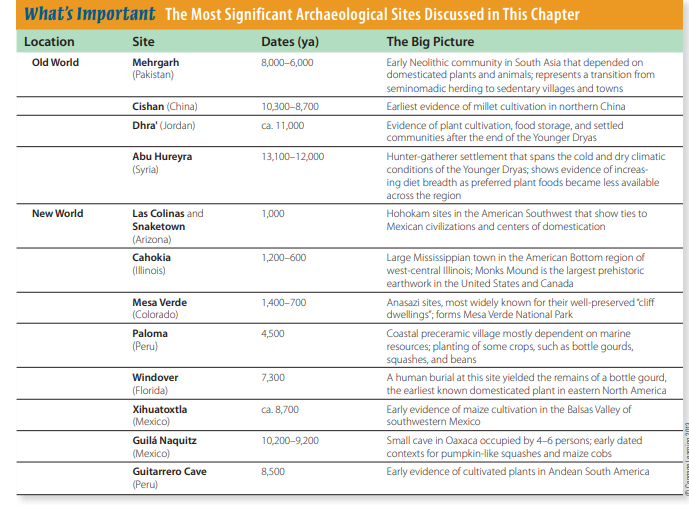

world (Fig. 14-1).

The Neolithic Revolution

The change from hunting and gathering to agriculture is often called the

Neolithic revolution, a name coined

decades ago by archaeologist V. Gordon

Childe (1951) to acknowledge the fundamental changes brought about by the

beginnings of food production. While

hunter-gatherers collected whatever

foods nature made available, farmers

employed nature to produce only those

crops and animals that humans selected

for their own exclusive purposes.

Beyond domestication and farming, Neolithic activities had other far-reaching consequences, including new

settlement patterns, new technologies,

and significant biocultural effects. The

emergence of food production eventually transformed most human societies either directly or indirectly and, in

the process, brought about dramatic

changes in the natural realm as well.

The world has been a very different

place ever since humans began developing agriculture.

Childe argued that maintaining fields

and herds demanded a long-term commitment from early farmers. Obliged to

stay in one area to oversee their crops,

Neolithic people became more or less

settled, or sedentary. As storable harvests gradually supported larger and

more permanent communities, towns

and cities developed in a few areas.

Within these larger settlements, fewer

people were directly involved in food

production, and new craft specializations emerged, such as cloth weaving,

pottery production, and metallurgy.

While archaeologists still accept his

general characterization of the Neolithic

revolution, we’ve learned a lot in the

decades since Childe’s original study.

For example, we now recognize that

sedentism actually preceded farming in certain locations where permanent settlements were sustained solely

by gathering and hunting or fishing. In

Chapter 13, for example, we noted that

the Chumash of southern California

and the Natufian hunter-gatherers of

the Near East, among others, established

sizable villages. It’s now also widely accepted that sedentism could often

stimulate food production, rather than

the other way around, and that even

Upper Paleolithic hunter-gatherers were

perfectly capable of understanding how

to manipulate the life histories of plants

and animals to their advantage.

Archaeologists also now know that

Neolithic lifeways evolved independently in several places around the

world. They agree less on how

and why these lifeways spread from one region to

another (e.g., compare Barker, 2006, and

Bellwood, 2005). Some researchers see

most regional Neolithic developments

as the culmination of local cultural

sequences. Others argue for migrations

and the diffusion of agriculture from “heartlands” in which such cultural

changes first took place.

Regardless of how it spread, the

Neolithic is viewed as revolutionary

in its cumulative impact on human

lives, not in the amount of time it took

for these cultural changes to happen.

Measured in human terms, the transition from hunter-gatherer to farmer in

any region involved complex, often interrelated biocultural changes that likely

played out across as many as 150 generations (Fuller, 2010, p. 11).

In the following discussion, we’ll

examine the beginnings of domestication and farming by considering evidence drawn from around the world.

Although the process everywhere shared

many similarities and produced equally

dramatic consequences, archaeologists

working in the Americas rarely apply the

term Neolithic to studies of New World

farmers. Instead, they use regional terminology, such as Formative or Preclassic

in Mesoamerica and Mississippian in

eastern North America.

Explaining the Origins of Domestication and Agriculture

Archaeologists have always felt compelled to identify “firsts.” When did

the earliest humans arrive in Australia?

Where are the oldest sites in South

America? When did the bow and arrow

arrive in the Midwest? Concerns about

these firsts also dominate archaeological research on domestication and agriculture and will undoubtedly continue

to do so. But we’ll never know when

or where the first person intentionally

planted seeds in the hope of making a

crop, and we’ll never track down the

first person to hitch an animal to a plow

or milk a goat. What researchers really

hope to achieve by their emphasis on

firsts is to understand what made these

changes happen.

From research on the origins of agriculture, we know that ancient hunter-gatherers who lived before the earliest

identified archaeological evidence of

food production were both intelligent

and observant enough to figure out what

happens to seeds after you put them in

the ground. In fact, we have every reason

to believe that Mesolithic/Epipaleolithic

and Archaic hunter-gatherers had a

wealth of practical everyday knowledge

and understanding about the natural

world around them. So, when we search

for the earliest evidence of agriculture in

a region, the most important goal is not

being able to say, “Ah, here’s where we

draw the line on our chronology chart of

agricultural beginnings” (see, for example, Fig. 14-1). A much more fundamental

motivation is simply to understand why

these people became farmers. Why then?

Why there? What conditions brought

about these changes? Why these crops?

From the perspective of the modern

world, we may find it hard to accept that

earlier peoples didn’t generally aspire

to be farmers and that many no doubt

avoided the opportunity for as long as

possible. But the archaeological record

and history alike are filled with examples demonstrating that what we now

view as the self-evident benefits of food

production have seldom been seen in

the same light by hunter-gatherers. In

recent centuries, many of the remaining hunter-gatherers on every continent

resisted, sometimes successfully, the

efforts of societies based on food production to convert them to peaceful, taxpaying farmers, voters, and consumers

of mass-produced goods. Such attitudes

call into question the inevitability of

agriculture in biocultural evolution. Did

it become the predominant economic

basis of human communities because it

offered so many obvious advantages?

Because it offered the fewest disadvantages? Or because there just weren’t a lot

of alternatives?

Ironically, although farming didn’t

get started in a big way until later, it was

the hunter-gatherers of the Mesolithic/

Epipaleolithic and Archaic periods who

actually initiated the critical processes

and even developed many of the innovations we usually credit to the Neolithic.

Essentially, the lifestyles of some early

Holocene hunter-gatherers anticipated

many of the developments we associate

with agriculture. Neolithic farmers were

mostly the recipients of domesticated species and agricultural ways from their

predecessors.

Defining Agriculture and Domestication

To avoid confusion later on, it’s useful to consider the difference between

domestication and agriculture. These

terms are often found together in discussions of the beginnings of food production, but they mean different things

(Rindos, 1984).

Domestication is an evolutionary

process. When we say that a certain plant

or animal is domesticated, we mean that

there’s interdependency between this

organism and humans, such that part of

its life history depends on human intervention. To achieve and maintain this

relationship requires the genetic transformation of a wild species by selective

breeding or other ways of interfering,

intentionally or not, with a species’ natural life processes.

Agriculture differs from domestication because it’s a cultural activity, not

an evolutionary process. It involves the

propagation and exploitation of domesticated plants and animals by humans.

Agriculture in its broad sense includes

all the activities associated with both

farming and animal herding. Although

domestication and agriculture are typically examined together in archaeological discussions of Neolithic lifeways,

domestication isn’t inevitably associated with an economic emphasis on food

production. For example, cotton was

an early domesticated plant, but it was

grown for its fibers, not as human food.

True agriculture would be unthinkable without domesticated plants and

animals. Domestication makes agriculture possible, especially when we consider how humans have manipulated

the life history strategies of other organisms to maximize particular qualities, such as yield per unit area, growth

rate to maturity, ease of processing,

seed color, average seed size, and flavor. The cultural activity we call agriculture ensures that the plants and animals with these desirable qualities are

predictably available as human food

and raw materials. One useful way

to view this fundamental change in

the relationship between humans and

other animals and plants is as symbiosis, a mutually beneficial association

between members of different species

(Rindos, 1984).

Next we’ll briefly examine some

competing explanations for the beginnings of agriculture. Although most of

these approaches address the problem of

explaining the development of farming

in the Near East, it’s important to note

that their proponents tend to view them

as generally applicable to agricultural

origins everywhere. The Near East dominates the discussion mostly because

it received the lion’s share of research

on this problem over the past century,

not because it was some sort of primal

hearth for the development of agriculture. Also, as you review these competing explanations, bear in mind that

the central questions are open areas of

research. Right now, no single approach

is both sufficient and necessary to

explain all known cases.

Loosely following Verhoeven (2004),

our overview examines two approaches,

those that primarily invoke natural, or

environmental, factors to explain the

development of agriculture, and those

largely based on cultural (including cognitive) factors.

Environmental Approaches

Most approaches to explain the origins

of domesticated plants and animals and

the beginnings of agriculture identify

one or more natural mechanisms, such

as climate change or human population growth, that may have promoted

the biocultural changes documented in

the archaeological record. The reasoning

behind such hypotheses is that, when

faced with increasing resource needs,

a community typically has several

options. The least disruption to everyday life can be achieved by reducing the

population, extending the territory, or

making more intensive use of the environment. Farming, of course, represents

a more intensive use of the environment.

Through their efforts, farmers attempt

to increase the land’s carrying capacity

by harnessing more of its energy for the

production of crops or animals that will

feed people (Fig. 14-2).

In their most extreme form, environmental approaches call to mind

environmental determinism, the notion that certain cultural outcomes can be

predicted from—or are determined

by—a combination of purely environmental causes. For example, V. Gordon

Childe himself conjectured that climate

changes at the end of the Pleistocene

increased Europe’s rainfall while making southwestern Asia and North Africa

much more arid (Childe, 1929, 1934).

Humans, animals, and vegetation in the

drought areas concentrated into shrinking zones around a few permanent

water sources. At these oases, Childe

hypothesized, the interaction between

humans and certain plants and animals

resulted in domestication of species such

as wheat, barley, sheep, and goats, which

people then began to use to their advantage. The eventual result was the spread

of sedentary village communities across

the Near East.

Hypotheses based on any form

of determinism tend to be relatively

straightforward, which is both their

strength and their weakness. Because

they hold so many factors constant, it’s

easy to see how such approaches should

work and why certain important outcomes should arise. The main drawback

of such ideas is that their focus is typically too general to explain a given case

because they omit the key contextual

factors that are unique to a real event.

In environmental approaches, such factors are often history and culture. What

people are already familiar with and

what they and their ancestors did in the

past often, if not always, play a big role

in their decisions. So, for example, a desert region might simultaneously sustain

opportunistic hunter-gatherers, nomadic

pastoralists, farmers using special deep-planting procedures, and even lawn-mowing suburbanites willing to pay for

piped-in water, not because these groups

are unaware of the possibilities posed by

alternative ways of living, but because

they are living their traditional ways of

life and they prefer them.

To return to what has come to be

called Childe’s oasis theory, its simplicity quickly enabled archaeologist

Robert Braidwood to demonstrate that

the predicted outcomes didn’t exist in

the archaeological record (see p. 347 for

details). Pollen and sediment profiles

now confirm that at least some of the climatic changes hypothesized by Childe

did occur in parts of the Near East prior

to Neolithic times, and so they may have

had a role in fostering new relationships

between humans and other species in

this marginal environment (Henry, 1989;

Wright, 1993). Even so, both the causes

and the apparent effects were complex.

In places like the Near East, climate

change that resulted in diminished or

redistributed resources didn’t directly

push people to become farmers (Munro,

2004), though it may have made farming one of the more reasonable options.

Furthermore, the arid conditions familiar to us today in some parts of the Near

East may be as much a result as a cause

of Neolithic activities in the region (see,

e.g., Nentwig, 2007). That is, the ecologically disruptive activities of farmers

and herd animals during the Neolithic

period may have contributed to the

destructive process of desertification.

Their plowed fields exposed soil to wind

erosion and evaporation, while the irrigation demands of their crops lowered

the water table and increased salinization of the soil. And overgrazing herbivores rapidly reduced the vegetation

that holds moisture and binds soil, thus

destroying the fragile margin between

grassland and desert.

One large group of environmental

hypotheses that, unlike Childe’s, continues to be examined by researchers looks

to increased competition for resources.

Whether the competition resulted from

natural increases in population density

or from climatic changes, such as rising sea levels, increased rainfall, or lower

average seasonal temperatures, they’re

seen as major factors that encouraged

the domestication of plants and animals and, ultimately, the beginnings of

agriculture (e.g., Boserup, 1965; Binford,

1968; Flannery, 1973; Cohen, 1977). These

explanations share the view that agriculture developed in societies where competition for the resources necessary to

sustain life favored increasing the diversity of staple foods in the diet. For one

reason or another, population control or

territorial expansion may not have been

feasible or desirable choices in these

societies. For example, competition may

have arisen from decreased human mortality rates rather than increased fertility or possibly even been driven by the

increasing proportion of people living

to an old age. The point is that people

faced a “prehistoric food crisis” (Cohen,

1977) unlike most modern cases because

it was a chronic problem that worsened

over decades and showed no sign of

ever getting any better. Concentrated in

a restricted territory or faced with the

dwindling reliability of once-favored

resources, such hunter-gatherers might

have taken up horticulture or herding to

enhance the productivity or distribution

of one or more particularly useful species. It was this economic commitment

that eventually led to the emergence of

true farmers.

Binford’s (1968) “packing model”

develops one such hypothesis involving demographic stresses. As modern climatic conditions became established in the early Holocene, people

resided in every prime habitat in the

temperate regions of Eurasia. Foraging

areas became confined as territories

filled, leading to increased competition for resources and a more varied diet. Forced to make more intensive use of smaller segments of

habitat, hunter-gatherers applied their

Mesolithic technology to a broader

range of plant and animal species. A

few of these resources proved more

reliable, easier to catch or process, tastier, or even faster to reproduce than

others, so they soon received greater

attention. Archaeological evidence of

such changes can be found on many

Mesolithic/Epipaleolithic sites in the

form of sickles, baskets and other

containers, grinding slabs, and other

processing tools.

As local populations continued to

grow and other groups tried to expand

their territory, their only choice would

be to move into the marginal habitats that lay at the edges of the optimal, resource-rich parts of their territory (Binford, 1968). Because population

stress would quickly reach critical levels in these marginal environments,

where resources were already sparse,

it was here that domesticated plants

were first developed. To feed itself, the

expanding population might have tried

to expand the native ranges of some

of the resources they knew from their

homeland by sowing seeds of the wild

plants. Over time, this activity resulted

in domestication of those species and

fundamental changes in the relationship

between them and the people who by

then depended on them.

Aspects of Binford’s approach

appealed to many archaeologists, who

agreed that the wild ancestors of some

of the world’s most important domesticates originally held a low status in the

diet. Many were small, hard seeds that

were once seldom used except as secondary or emergency foods. Ethnographic

research had also shown that, given their

choice, hunter-gatherers everywhere prefer to eat fruit and meat (Yudkin, 1969).

Still, grains and roots became increasingly important in the Mesolithic/

Epipaleolithic diet—supplemented by

available animal or fish protein—and

not only when the more desirable foods

were in short supply. An interpretation based on increased competition for

resources offered a testable explanation

for why this happened.

Although he took issue with certain aspects of Binford’s approach,

Flannery (1973) agreed with the basic

thesis because it explained why the earliest archaeological evidence of plant

domestication should be found in what

would have been marginal environments. Flannery described the increasing breadth of the Epipaleolithic diet

as a “broad spectrum revolution” in

which hunter-gatherers turned to many

kinds of food resources to make up for

local shortfalls. Especially in marginal environments, this activity promoted the

development of domesticates and, ultimately, the origins of true agriculture.

In short, environmental approaches

identify forces external to humans as

the active ingredients in the development of agriculture. In these hypotheses, human agency is primarily reactive.

Something in the natural environment

changes (for example, precipitation patterns, average annual temperature),

and it makes life increasingly hard for

hunter-gatherers. They react culturally to

changed circumstances in various ways,

some of which include incorporating a

wider range of less preferred foods in

their staple diet and colonizing marginal

environments. At some point, they take

up the alternative of applying cultural

means to increase the production of one

or more food species. So basically, these

approaches envision the development

of agriculture more as something that

humans backed into from a lack of better

alternatives rather than something they

enthusiastically embraced.

Cultural Approaches

Not everyone agrees that the roots of

domestication and agriculture are to be

explained by the operation of external

environmental factors, all of which, by

design, place human culture and agency

in a passive role. Some archaeologists

contend that social and ideological factors, such as competitive feasting to

enhance one’s status, tribute payments,

or offerings to the deities (Price and Bar-Yosef, 2010), may have pushed societies

to come up with more food than could

be readily obtained on a regular basis

from natural sources. The reasoning

behind these hypotheses is that human

agency and culture alone may be sufficient and necessary to explain many of

the fundamental changes documented in

the archaeological record.

As you may suspect from your

reading of the previous section, these

approaches are also not immune to

extreme positions. Just as we can identify some environmental approaches as

teetering on the brink of determinism,

we can find some cultural and cognitive approaches that seek to emphasize

the role of human culture to the near

exclusion of noncultural factors. In these

approaches, such natural phenomena

as climatic changes are either irrelevant

to the explanation of cultural outcomes

or were consciously exploited by people to further cultural objectives, so they

weren’t merely phenomena to which

people reacted.

Robert Braidwood’s “nuclear zone”

or “hilly flanks” hypothesis (Braidwood and Howe, 1960) is a good mid-twentieth-century example to start

with in looking at cultural approaches.

Braidwood built on V. Gordon Childe’s

earlier work (see p. 344) and pointed

out that subsequent research didn’t find

evidence of the environmental changes

on which Childe based his oasis theory.

What’s more, the wild ancestors of

common domesticated plants and animals in the Near East were in the foothills of the mountains, not around the

oases, which is where they should be if

Childe’s oasis theory is correct. Without

a clear environmental trigger for the

origins of domestication and agriculture, Braidwood and Howe (1960) reasoned that domestication and, ultimately, agriculture came about as early

Holocene hunter-gatherers gradually

became familiar with local plant and

animal resources and grew increasingly

inclined to the notion of domestication.

In other words, domestication and agriculture happened when “culture was

ready.” But Braidwood never adequately

addressed the compelling questions that

such an argument stimulates: Why was

culture “ready”? Why then and not, say,

30,000 ya? Or 100,000 ya? Or never?

In his examination of the beginnings of agriculture in Europe, Ian

Hodder (1990) took a more evenhanded

approach than Braidwood. Building

on the symbolic meaning assigned to

houses and household activities, Hodder

developed an argument in which the

process of domestication and the activities of agriculture were properly viewed

as the human “transformation of nature

into culture, with an expansion of cultural control and a domination of

nature” (Verhoeven, 2004, p. 210). He

identified both social and natural factors

as possible pressures in bringing about

the transition from foraging to agriculture at the end of the Pleistocene. The

strength of Hodder’s approach rests in

his assignment of considerable weight

both to human agency and culture and

to the widely accepted effects of environmental factors. Trevor Watkins

(2010) makes a broadly similar claim.

Watkins argues that the key factor in the

“readiness” of human culture was the emergence of “larger and more cohesive

social groups” during the Epipaleolithic,

which stimulated human cognitive

development and, ultimately, the development of agriculture.

So, to sum things up, cultural

approaches to explain the origins of

domestication and agriculture assume

an active role for human agency and

tend to discount, if not deny completely,

the importance of natural, or environmental, factors. In these approaches,

cultural changes, such as a transformation of the human relationship with the

divine or of the conceptualization of self,

can be enough in some cases to account

for the changes we see in the archaeological record. The main drawback with

these hypotheses is that sometimes it’s

not immediately clear why such transformations would occur.

From Collecting to Cultivating

If today we had to choose an explanation for the origins of domestication and

agriculture (and we should at least suggest a preference, since this is an introductory college textbook), we would

adopt one of the moderate environmental approaches as the most robust

because it explains the most real cases.

Most such approaches also consider cultural factors, but they assign the greatest weight to the forces of nature. For

us, that’s their greatest appeal. They

don’t require researchers to assume that

just because we’re biocultural animals,

humans are somehow exempt from natural factors that affect all living things.

Disasters such as the devastating tsunami of December 2004 that killed more

than 180,000 people in a dozen countries

are painful reminders that, for all our

human posturing to the contrary, nature

often has the final word.

Ultimately, we have no reason to

believe that the origins of domestication and agriculture can be explained

only by natural forces or only by cultural

factors. These are complex problems for

which there may be multiple valid explanations. It could easily be the case that

approaches such as those recently proposed by Barker (2006) and Verhoeven

(2004), which seek explanations in the

interaction of both natural and cultural

forces, will prove to be the most productive route to follow.

So, working within our admitted preference for environmental

approaches, let’s now consider why and

how hunter-gatherers became farmers

in a real example drawn from the Near

East. As Epipaleolithic gatherers in the

Levant region harvested natural stands

of wild cereal grasses such as wheat or

barley, their movements would cause

many of the ripened seed heads to shatter spontaneously, with considerable

loss of grain. Each time someone used

a gazelle-horn sickle to cut through a

stalk, some of the seeds would fall to the

ground. This normal process of seed dispersal is a function of the rachis, a short

connector linking each seed to the primary stalk (Fig. 14-3). While the embryonic seed develops, the rachis serves as

an umbilical that conveys the nutrients

to be stored and later used by the germinating seed. Once the seed reaches

its full development on the stalk, the

rachis normally becomes dry and brittle,

enabling the seed to break away easily.

Even without human interference,

wild cereal grasses tended to be particularly susceptible to natural genetic

modification (much more so than, say,

nut-bearing trees), since the plants grew

together in dense patches, were highly

polytypic, and were quick to reproduce.

In fact, a stand of wild grasses was like

an enormous genetic laboratory. The normal range of genetic variability among

the grasses included some plants with

slightly larger seeds and others with

tougher or more flexible rachis segments,

meaning that their seed heads would be

slightly less prone to shattering. As people worked through the stands, seeds

from these genetic variants would end

up in the gathering baskets slightly more

often. Later, as the gatherers carried their

baskets to camp, stored or processed the

grain, or moved from place to place, a

disproportionate number of the seeds

they dropped, defecated, or perhaps

even scattered purposely in likely growing areas would carry the flexible-rachis

allele. (The same thing happened with

the larger seeds preferred by the collectors.) As these genetic variants became

isolated from the general wild population, each subsequent harvest advanced the “selection” process in favor of the

same desirable traits.

Human manipulation became an evolutionary force in modifying the species, a process Darwin labeled “unconscious selection.” People didn’t have to

be aware of genetic principles to act as

effective agents of evolution. And where

desirable traits could be readily discerned—larger grain size, plumper seed

heads, earlier maturity, and so forth—

human choice would even more predictably and consistently favor the preferred

characteristics. The result within just a

few growing seasons might be a significant shift in allele frequencies—that is,

evolution—resulting from Darwinian

selection processes, in this case the result

of long-term pressure by gatherers, who

consistently selected for those traits that

improved the plant’s productivity and

quality (Rindos, 1984).
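
The shift in allele frequencies described above can be illustrated with a small calculation. The sketch below is only a toy model, not a reconstruction of any archaeological case. It assumes that each year's stand grows entirely from the previous year's gathered seed, that the tough-rachis trait is inherited intact from parent to offspring (a simplification), and that gatherers capture tough-rachis seeds at a somewhat higher rate than brittle-rachis seeds, which shatter and fall more often. The function names and the capture rates are hypothetical values chosen for illustration.

```python
def next_frequency(p, capture_tough, capture_brittle):
    """Return the tough-rachis frequency after one harvest-and-resow cycle.

    p               -- current frequency of the tough-rachis variant (0 to 1)
    capture_tough   -- assumed share of tough-rachis seeds that reach the basket
    capture_brittle -- assumed share of brittle-rachis seeds that reach the basket
    """
    tough = p * capture_tough
    brittle = (1.0 - p) * capture_brittle
    # The resown stand mirrors the makeup of the gathered seed.
    return tough / (tough + brittle)


def generations_to_reach(p0, target, capture_tough, capture_brittle,
                         max_generations=10_000):
    """Count the cycles needed for the frequency to rise from p0 to target.

    Returns None if the target is not reached within max_generations.
    """
    p = p0
    for generation in range(max_generations + 1):
        if p >= target:
            return generation
        p = next_frequency(p, capture_tough, capture_brittle)
    return None


# Compare a slight and a strong harvesting bias (both values are illustrative).
for capture_tough in (0.525, 0.75):
    cycles = generations_to_reach(0.01, 0.99, capture_tough, capture_brittle=0.5)
    print(f"capture rates {capture_tough} vs 0.5: {cycles} cycles from 1% to 99%")
```

Under these assumptions, even a slight and entirely unintentional bias in which seeds end up in the basket is enough to make the tough-rachis variant predominate, given enough harvests, and a stronger bias does so much faster. The model leaves out interbreeding with wild stands, chance effects in small populations, and years in which people did not resow, all of which would slow the process.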

Of course, the rate of divergent evolution away from the wild ancestral forms

of a plant (or animal) species accelerates

as people continue to exercise control

by selecting for genetically based characteristics they find desirable. With the

cereal grasses, such as barley and wheat,

the human-influenced varieties typically

came to average more grains per seed

head than their wild relatives had. The

rachis became less brittle in domesticated forms, making it easier for people to

harvest the grain with less loss because

the seed head no longer shattered to disperse its own seed. At the same time,

individual seed coats or husks (glumes)

became less tough, making them easier

for humans to process or digest. Many

of these changes obviously would have

been harmful to the plant under natural conditions. Frequently, a consequence

of domestication is that the plant species

becomes dependent on humans to disperse its seeds. After all, symbiosis is a

mutual, two-way relationship.

Whenever favorable plant traits developed, hunter-gatherers could be expected to respond to these improvements by

quickly adjusting their collecting behavior to take the greatest advantage, in

turn stimulating further genetic changes

in the subject plants and eventually producing a cultigen, or domesticate, under

human control. As continuous selection

and isolation from other plants of the

same species favored desirable genetic

variants, the steps to full domestication

would have been small ones.

Likewise, the distances that separated

hunter-gatherers from early farmers

were also small ones. It’s usually impossible to determine archaeologically when

harvesting activities may have expanded

to include the deliberate scattering of

selected wild seeds in new environments or the elimination of competing

plants by “weeding” or even burning

over a forest clearing. As they intensified

their focus on wheat and barley in the

Near East, or on species such as maize

or runner beans in Mexico, hunter-gatherers finally abandoned the rhythm

of their traditional food-collecting schedules and further committed themselves

to increasing the productivity of these

plants through cultivation.

Archaeological Evidence for Domestication and Agriculture

Archaeologist Graeme Barker (2006,

p. 414) argues that the change from

hunting and gathering to agriculture

“was the most profound revolution

in human history.” It also profoundly

affected other species and continues to

do so. The accumulated archaeological

evidence reveals that humans independently domesticated local species and

developed agriculture in several geographically separate regions relatively

soon after the Ice Age ended. From

recent applications of genetic research

and other methodological advances in

archaeology, it’s also clear that more

independent instances of prehistoric

domestication are likely to be identified,

some locations currently identified as

possible independent centers of domestication may be deleted from the list, and

some plants and animals were probably

domesticated multiple times in various

places (Armelagos and Harper, 2005).

Archaeologists have yet to fully

explain how and why agriculture

spread and eventually dominated economic life in many parts of the world.

Peter Bellwood’s (2005) recent “early farming dispersal hypothesis” integrates archaeology, genetics, and historical linguistics in a highly original argument that early farming grew out of the

Mesolithic/Epipaleolithic and spread

into new lands with the movement or

dispersal of farmers from agricultural

“homelands.” Working within what is

essentially an environmental approach

that identifies human population

growth as the main driving factor of

farmer dispersal, or “demic diffusion,”

this hypothesis offers a controversial

explanation for the spread of agriculture, human populations, and languages, as well as major spatial patterns

in human genetics (Bellwood, 2005;

Bellwood et al., 2007; see Diamond,

1999 for a very similar perspective).

Building on much the same archaeological and genetic data, Graeme Barker

(2006) reached different conclusions. He

essentially questions the existence

of such primal

hearths or homelands and traces

the roots of this

revolution back

to early Upper

Paleolithic hunter-gatherers. It is too

soon to tell which

of these interpretations offers a more

accurate picture of

the spread of prehistoric agriculture.

In examining

independent centers of domestication around the world, it’s important to

realize that the domestication of a local

species or two would not necessarily

trigger the enormous biocultural consequences we usually associate with the

Neolithic period in the Near East. In fact,

most altered species retained only local

significance. For example, in the Eastern

Woodlands of the United States, hunter-gatherers domesticated several small-seeded species very early; still, the wild

forest products obtained by hunting and

gathering retained their primary importance until relatively late prehistoric

times, when true farming developed in

the East.

In most regions, agriculture didn’t

develop fully until people were exploiting a mosaic of plants—and sometimes

animals, too—from different locations,

brought together in various combinations to meet such cultural requirements as nutrition, palatability, hardiness, yield, processing ease, and storage.

In the Near East, this threshold was

reached around 11,000 ya, when an agricultural complex consisting of wheat,

barley, sheep, and goats was widely and

rapidly adopted.

Plants

In most areas where agriculture

emerged, early farmers relied on local

plant species whose wild relatives grew

close by. Old World cereal grasses,

including barley and some wheat varieties, were native throughout the Near

East and perhaps into southeastern

Europe (Dennell, 1983). Wild varieties of these plants still flourish today

over parts of this range. Therefore, barley or wheat domestication could have

occurred anywhere in this region, possibly more than once. The same is true

for maize and beans in Mexico. So, as

we emphasized earlier, domestication

and agriculture were independently

invented in different regions around

the world.

As we noted earlier, it’s best to

explain these separate but parallel

processes from a combined cultural and

ecological perspective. We’ve already

seen that certain kinds of wild plants

were more likely than others to become

domesticated. Many of these species

tend to grow in regions where a very

long dry season follows a short wet period (Harlan, 1992). After the last Ice Age,

around 10,000 ya, these conditions existed around the Mediterranean basin and

the hilly areas of the Near East and in

the dry forests and savanna grasslands

of portions of sub-Saharan Africa, India,

southern California, southern Mexico,

and eastern and western South America.

Most scientific understanding of

ancient human plant use has come

from the archaeobotanical study of

preserved seeds, fruits, nutshell fragments, and other plant macrofossils like those shown in Figure 14-4 (Pearsall, 2000). It isn’t easy to preserve

seeds, tubers, leaves, and other delicate

organic materials for thousands of years.

Archaeologists recover some macrofossils from depositional environments that

are always dry, wet, or frozen, because

all of these conditions slow down or

halt the process of decomposition. Most,

however, are preserved because the

way they were harvested, threshed,

processed for consumption, or discarded

brought them into contact with enough

fire to char them, but not enough heat to

reduce them to ash. Once charred, macrofossils preserve well in many kinds

of archaeological sites and can often be

classified to genus, if not to species.

Macrofossils offer direct evidence

for important archaeological research,

such as reconstructing hunter-gatherer

plant use patterns, identifying farming locations, and determining the precise nature of harvested crops. They

also provide insights of other kinds.

The presence of perennial and biennial weed seeds in an ancient agricultural context may suggest that each year’s

farming activities only minimally disturbed the soil; the seed planter may

have used a digging stick, hoe, or simple scratch plow. If, on the other hand,

seeds of annual weeds predominate,

the farmer may have used a moldboard

plow—one that turns over the soil as it

cuts through it.

The major shortcomings of macrofossil-based interpretations of past

human plant use are due to potential

preservation biases. Most plant macrofossils tend to be preserved because they

were charred before entering the archaeological record (see Fig. 14-4 for examples). But not all plant parts char easily,

and many don’t char at all. For example,

it’s pretty easy to char a bean, but you’ll

be disappointed if you try the same

thing with a leaf of lettuce. Because the

necessary conditions could only be met

sometimes in prehistory and by some

plants and plant parts, archaeologists

are understandably concerned about the

validity and reliability of many reconstructions of human plant use that are

based only on macrofossils.

Fortunately, modern archaeologists

can turn to several other important

sources in their research on prehistoric

human plant use. Plant

microfossils, such as

pollen, phytoliths, and

starch grains, often survive even where macrofossils can’t—for example, as residues on the

cutting edges of ancient

stone tools, inside pottery containers, embedded in the pores of

grinding stones, and

even trapped in the dental calculus that accumulates on human teeth

(Bryant, 2003; Henry

and Piperno, 2008).

Unlike many macrofossils, these remains preserve readily in a wide

range of archaeological contexts; they can be

classified as to the kind

of plant they represent,

if not also to the plant

part they represent; and

they can be archaeologically present even in

sites where macrofossils

were destroyed or never

deposited.

Pollen grains (Fig.

14-5) have been a valuable source of environmental and subsistence data for decades

(Traverse, 2007). Their

strengths are that they’re

abundant (as any hay fever sufferer can

tell you); the grains are taxonomically

distinctive and often can be classified to

genus, if not to species; the outer shell of

each grain is tough; and the wind-borne

dispersal of pollen from seed-producing

plants continues before, during, and

after humans occupy a particular

archaeological site. The main shortcoming of pollen grains is that they tend to

preserve poorly in many kinds of open

sites, depending on soil acidity, moisture, drainage, and weathering.

Phytoliths (Fig. 14-6) are microscopic,

inorganic structures that form in many

seed-producing plants as well as other

plants (Piperno, 2006). Like pollen, phytoliths are taxonomically distinctive.

They even vary according to where they form in the plant,

so phytoliths from

leaves can be distinguished from those

that formed in the

stems and seeds of

the same plant. They

are also affected by

different preservation biases than macrofossils (Cabanes

et al., 2011). Are the

plant remains at

your site unidentifiable, reduced to a

powdery ash, or simply not preserved?

Not a problem. Chances are the phytoliths from these plants are not only present in the archaeological deposit but also

recoverable and identifiable.

Starch grains (Fig. 14-7) are subcellular particles that form in all plant parts.

They are particularly abundant in such

economically important portions as

seeds and tubers (Coil et al., 2003). They

are a useful complement to phytoliths

as a data source and are a major tool

in archaeological investigations of root

crops (Piperno, 2008). Like pollen and

phytoliths, starch grains can be taxonomically classified, currently mostly to

family or genus. A good example of the

archaeological application of starch grain

analysis is the recent examination of the

surface of a grinding stone found on the

floor of one of the 23,000-year-old huts

at Ohalo II, in Israel. This study, which

was based on carefully sampled residues from cracks and pits in the working

surface of the grinding stone, enabled

archaeobotanists to determine that it was

a specialized implement used to grind

wild cereal grasses, including barley

(Piperno et al., 2004).

As we saw in Chapter 8, some plant

species also leave biochemical traces

in those who consume them. Because

temperate and tropical region plants

evolved with slightly different processes

for photosynthesis, their chemical compositions vary in the ratio of carbon-13

to carbon-12. This distinctive chemical

profile gets passed along the food chain,

and the bones of the human skeleton

may provide evidence of dietary change.

For example, their lower ¹³C levels reveal

that females at Grasshopper Pueblo, in

east-central Arizona, consumed mostly

the local plants they gathered, while

their male relatives at first enjoyed more

maize, a plant higher in ¹³C. Later, maize

became a staple in everyone’s diet at

Grasshopper, resulting in equivalent

carbon isotopes in males and females

(Ezzo, 1993).
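The logic of this kind of dietary inference can be sketched in a few lines of Python. Carbon isotope ratios are usually reported in delta notation (δ¹³C, in parts per thousand relative to a reference standard), and a simple two-source mixing model then estimates how much dietary carbon came from ¹³C-enriched plants such as maize. The standard ratio and both end-member values below are illustrative assumptions, not figures from Ezzo (1993), and the sketch ignores the offset between diet and bone tissue that real studies must correct for.

```python
# Commonly cited 13C/12C ratio for the VPDB reference standard (treat as an assumption).
R_VPDB = 0.0112372


def delta13c(r_sample, r_standard=R_VPDB):
    """Delta notation in per mil: deviation of a sample's 13C/12C ratio from the standard."""
    return (r_sample / r_standard - 1.0) * 1000.0


def enriched_plant_fraction(delta_sample, delta_low=-26.5, delta_high=-12.5):
    """Two-source linear mixing estimate, clamped to the range 0..1.

    delta_low:  assumed diet value for plants low in 13C (most local wild plants)
    delta_high: assumed diet value for plants high in 13C (such as maize)
    Both end members are hypothetical, diet-equivalent values.
    """
    fraction = (delta_sample - delta_low) / (delta_high - delta_low)
    return min(1.0, max(0.0, fraction))


if __name__ == "__main__":
    for value in (-24.0, -19.5, -14.0):
        share = enriched_plant_fraction(value)
        print(f"delta13C = {value:6.1f} per mil -> maize-like share = {share:.2f}")
```

A diet-equivalent value of -19.5 per mil, halfway between the two assumed end members, would imply that about half of the dietary carbon came from maize-like plants. Equal values in males and females would therefore point to similar diets.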

Other biochemical analyses, using

different isotopes, have been devised

to assess overall diet—not necessarily

just the domesticated portions—from

individual skeletons. A higher ratio of

nitrogen-15 to nitrogen-14 (¹⁵N/¹⁴N), for

instance, corresponds to a greater seafood component in the diet (Schoeninger

et al., 1983), while a higher strontium-to-calcium (Sr/Ca) ratio indicates that plant

foods were of greater dietary importance than meat (Schoeninger, 1981).

Other chemicals taken up by bones may

inform us about ancient lifeways. For

example, lead (Pb) is a trace element

found in unusually high concentration in the bones of Romans who drank

wine stored in the lead containers typical of that period. The interplay among

culture, diet, and biology is, of course, a

prime example of biocultural evolution.

But to put it more simply, “You are what

you eat,” and the odds are increasingly

good that archaeologists can measure it.

Microfossil analyses complement and

greatly extend the valuable insights that

archaeobotanists have achieved through

the study of macrofossils. Recent key

advances include the growing field of

archaeogenetics, which applies the methods of molecular genetics to archaeological problems, as well as improvements in radiocarbon dating, which can

yield accurate age estimates from samples as small as 100 micrograms (μg);

that’s one ten-thousandth of a gram, or

only a small fraction of the weight of a grain of

rice (Armelagos and Harper, 2005). As a

result, researchers are now able to more

completely understand the prehistory of

the human use of plants and the beginnings of agriculture.

Animals

The process of animal domestication

differed from plant domestication, and

it probably varied even from one animal species to another. For example, the dog was one of the first domesticated

animals; mtDNA evidence suggests an

origin between 40,000 and 15,000 ya

(Savolainen, 2002), and dogs may even

have accompanied late Ice Age hunter-gatherers (Olsen, 1985). The dog’s relationship with humans was different

(and still is) from that of most subsequently domesticated animals. Often

valued less for its meat or hide, a

dog’s primary role was most likely as

a ferocious hunting weapon under at

least a bit of human control and direction. As people domesticated other animals, they changed the dog’s behavior even more for service as a herder

and later, in the Arctic and among the

Native Americans of the Great Plains,

as an occasional transporter of possessions. But the burial of a puppy with a

Natufian person who died some 12,000

ya in the Near East suggests that dogs

may have also earned a role as pets very

early (Davis and Valla, 1978).

Most other domesticated animals

were maintained solely for their meat at

first. Richard J. Harrison’s (1985) insightful analysis of faunal collections from

Neolithic sites in Spain and Portugal

concludes that meat remained the

primary product up until about 4,000 ya,

when subsequent changes in herd composition (age and sex ratios), slaughter patterns, and popularity of certain

breeds all point to new uses for some

livestock. Oxen pulled plows, horses carried people and things, cattle and goats

contributed milk products, and sheep

were raised for wool. Animal waste

became fertilizer in agricultural areas.

Leather, horn, and bone—and even

social status for the animals’ owners—

were other valued by-products.

Of course, animals are more mobile

than plants, and most of them are no less

mobile than the early people pursuing

them. So it’s unlikely that hunters could

have promoted useful genetic changes

in wild animals just by trying to restrict

their movements or by selective hunting alone. Possibly, by simultaneously

destroying wild predators and reducing the number of competing herbivores,

humans became surrogate protectors

of the herds, though this arrangement

would not have had the genetic impact

of actual domestication.

Since domestication is a process, not

an event, it’s nearly impossible to say

precisely when a plant or animal species has been domesticated. The process

involves much more than an indication

of “tameness” in the presence of humans.

More significant are the changes in

allele frequencies that result from selective breeding and isolation from wild

relatives. People may have started with

young animals spared by hunters or, in

the case of large and dangerous species

such as the aurochs (the wild ancestor of

domesticated cattle), with individuals

that were exceptionally docile or small

(Fagan, 1993). Maintained in captivity,

these animals could be selectively bred

for desirable traits, such as more meat,

fat, wool, or strength. Once early farmers were consistently selecting breeding

stock according to certain criteria and

succeeding in perpetuating those characteristics through subsequent generations,

then domestication—that is, evolution—

clearly had begun.

Overall, not many mammal species

were ever domesticated. Those most

amenable to domestication are animals that form hierarchical herds, are

not likely to flee when frightened, and

are not strongly territorial (Diamond,

1989). In other words, animals that will

tolerate and transfer their allegiance

to human surrogates make the best

potential domesticates. Several large

Eurasian mammals met these specifications, and cultures of Asia, Europe, and

Africa came to rely on sheep and goats,

pigs, cattle, and horses (listed here in

approximately their order of domestication) as well as water buffalo, camels, reindeer, and a few other regionally

significant species.

Even fewer New World herd animals were capable of being domesticated. Aside from two South American

camelids—the llama and the alpaca—no

large American mammal was brought

fully under human control (Fig. 14-8).

Dogs had probably accompanied the

first people into the New World. None

of these animals were suitable for transporting or pulling heavy loads—llamas

balk at carrying more than about 100

pounds, and Plains Indian dogs dragged

only small bundles—so the people of the

New World continued to carry their own burdens, till their fields by hand, and

hunt and fight on foot until the introduction of the Old World’s livestock in

the 1500s.

Archaeological evidence of nonhuman animal domestication is subtle and

difficult to assess from the bones themselves (Fig. 14-9). Archaeozoologists,

who study the skeletal remains of nonhuman fauna from archaeological sites,

have also shown that

many of the morphological changes long

viewed as good proxy

measures of domestication (for example,

smaller adult size, horn

shape) actually follow,

by as much as thousands of years, other

archaeological measures

of domestication in the

Near East, such as distinctive demographic

patterns typical of managed herds (Zeder, 2008).

For example, at Ganj

Dareh, in Iran, around

9,900 ya, villagers

slaughtered a high proportion of young male

goats, but adult females survived long

after reaching reproductive maturity.

This culling pattern is typical of herded

animals (Zeder, 2008). If these villagers

were hunting wild goats, the demographic pattern evident in the goat bones

would have been different, emphasizing

large adults, mostly males.
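The reasoning behind this demographic argument can be expressed as a small Python sketch. It assumes that the bones in an assemblage have already been sorted by sex and by age class, and it applies a single cutoff to decide which pattern the counts resemble. The class name, the counts, and the 0.6 cutoff are all hypothetical; they are not drawn from Zeder (2008), and real analyses rely on far more detailed age and size data.

```python
from dataclasses import dataclass


@dataclass
class GoatAssemblage:
    """Counts of sexed and aged goat bones from one site (hypothetical data)."""
    young_males: int
    adult_males: int
    young_females: int
    adult_females: int


def profile(a):
    """Summarize the shares that matter for the herding-versus-hunting comparison."""
    males = a.young_males + a.adult_males
    females = a.young_females + a.adult_females
    total = males + females
    if total == 0:
        raise ValueError("assemblage is empty")
    return {
        "young_share_of_males": a.young_males / males if males else 0.0,
        "adult_share_of_females": a.adult_females / females if females else 0.0,
        "adult_male_share_of_all": a.adult_males / total,
    }


def suggest_pattern(a, threshold=0.6):
    """Return 'herd-like', 'hunt-like', or 'ambiguous' using an assumed cutoff."""
    p = profile(a)
    # Managed herds: most males killed young, most females kept into adulthood.
    if p["young_share_of_males"] >= threshold and p["adult_share_of_females"] >= threshold:
        return "herd-like"
    # Hunted populations: the kill is dominated by large adult males.
    if p["adult_male_share_of_all"] >= threshold:
        return "hunt-like"
    return "ambiguous"


if __name__ == "__main__":
    print(suggest_pattern(GoatAssemblage(40, 10, 10, 40)))
    print(suggest_pattern(GoatAssemblage(5, 70, 5, 20)))
```

The point of the sketch is only that the two subsistence strategies leave different age and sex signatures in the bones, so the same counts that look unremarkable one by one become informative when compared as proportions.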

Among important recent developments, DNA analyses of modern animals and ancient animal bones found in

archaeological sites are greatly enhancing our understanding of the domestication of individual animal species

as well as the development of farming

(Larson, 2011). Although not without

their own methodological and interpretive problems, population genetic analyses, coupled with new morphometric

analyses and recent advances in small-sample radiocarbon dating, hold considerable promise for answering some

of the big questions about the origins of

domestication.

Old World Farmers

As we’ve noted, independent invention

accounts best for the diversity of domesticates and the distinctiveness of Neolithic

lifeways around the world. What’s more,

many wild species, such as wheat and

sheep, probably occupied more extensive natural ranges in the early Holocene

and may in fact have undergone local

domestication more than a few times

(Armelagos and Harper, 2005).

But as Bellwood (2005) and Diamond

(1999) remind us, we can’t entirely discount the spread of Neolithic lifeways

or people from place to place. As we’ll

see, in at least some areas—southeastern

Europe, for example—colonizing farmers appear to have brought their domesticates and their culture with them as they

migrated into new territories in search

of suitable farmland. Neolithic practices

also spread through secondary contact

as people on the margins of established

farming societies acquired certain tools,

seeds, and ideas and passed them along

to cultures still further removed. Seeds

and animals must have become commodities in prehistoric exchange networks, just as the Spanish horses

obtained by Native Americans did in the

sixteenth century. It’s important to bear

in mind that each archaeological event is

unique in its own way. “One-size-fits-all”

explanations are rarely adequate, even if the results are the same—in this case,

the expansion of Neolithic lifeways. With

that in mind, let’s consider a sampling

of Neolithic societies from around the

world to get some idea of the variations

on this common theme.

The Near East

As we saw in Chapter 13, Epipaleolithic

foraging cultures, such as Kebaran and

Natufian, apparently took the first steps,

though perhaps inadvertently, toward

agriculture in the Near East (Fig. 14-10).

Many of the archaeologically documented changes among Epipaleolithic hunter-gatherers in the Levant offer important

support for Flannery’s (1973) conception

of a broad-spectrum revolution that preceded the development of domestication

and agriculture.

For example, the extraordinary preservation of plant remains at Ohalo II, an

early Epipaleolithic campsite in northern

Israel, shows that small-grained grass

and wild cereal seeds were important

in hunter-gatherer diets in the Levant

by 23,000 ya (see p. 352; Piperno et al.,

2004; Weiss et al., 2004a, 2004b). Faunal

remains from eight other Epipaleolithic

sites in the Levant, all of which date

between 18,000 and 12,000 ya, also show

a pattern of increased diet breadth as the

abundance of big game animals declined

and hunters depended more and more

on smaller game, such as gazelles (Stutz

et al., 2009).

The site of Abu Hureyra, in the

upper Euphrates valley of Syria, is

of particular archaeological interest

because its occupation spans the

Younger Dryas (roughly 13,000–11,500

ya), a climatic shift to cooler and drier

conditions that David Henry (1989)

argues was an important environmental factor in the development of agriculture in the Levant. The Abu Hureyra

hunter-gatherers consumed more than

250 plant species, only a few of which

were staple foods, at the onset of the

Younger Dryas (Moore et al., 2000,

p. 397). As the climate changed, many

of these species vanished from the Abu

Hureyra archaeological record, to be

replaced by increased frequencies of

weed seeds and possible evidence

of early domesticated rye, specimens of

which date to roughly 11,200–10,600 ya.

Moore and colleagues (2010) infer

that changing environmental conditions were sufficient to account for

these shifts in Abu Hureyra’s subsistence economy and that this settlement depended at least partly on the

cultivation of domesticated plants (rye,

in this case). While the identification

of domesticated rye at Abu Hureyra

remains a controversial claim (see

Colledge and Conolly, 2010, pp. 135–

136), the subsistence economy clearly

reflects increased diet breadth as

higher-ranked foods became less available in the region around Abu Hureyra

(Colledge and Conolly, 2010).

Evidence of cultivation, settled communities, and food storage becomes

more common after the end of the

Younger Dryas and the return of a

slightly more moderate climate. At

Dhra’, an 11,000-year-old village site

near the Dead Sea in Jordan, archaeologists recently uncovered the remains

of mud granaries and other food storage features, as well as large quantities

of wild barley and oat grains (Kuijt and

Finlayson, 2009). The village economy

at Dhra’ clearly depended on the cultivation and storage of wild plants, and

many features traditionally associated

with sedentary Neolithic farmers are

seen at Dhra’.

Food collectors and the earliest farmers established the first permanent sedentary communities in the Near East.

Drawn by an ever-flowing spring in an

otherwise arid region, settlers at the

site of Jericho (or Tell es-Sultan), in the

West Bank, and at other Natufian sites

in Israel built their stone or mud-brick

round houses some 11,500 ya. Though

they were made of more substantial materials, in form these structures

closely resembled the temporary huts

of the region’s earlier hunters and gatherers. Numerous grinding stones and

clay-lined storage pits found in these

communities testify to the economic

importance of cereal grains (Moore,

1985; Bar-Yosef, 1987).

Finally, around 10,000 ya at Kebara

and El Wad, both in Israel, stone-bladed

sickles aided the harvest of wild wheat

and barley (Henry, 1989). This technology, more efficient than plucking seeds

by hand, netted greater yields during

a short harvest period. The process of

genetic selection that we’ve considered

as the basis for plant domestication must

have been well under way by this time.

Foraging for the seeds of wild cereals,

fruits, nuts, and the meat of wild game

long remained an important, but slowly

declining, strategy. By 10,000 ya, managed sheep and goat populations were

present in the Levant (Munro, 2009). At

Abu Hureyra, villagers were cultivating

wheat, rye, and lentils by 9,800 ya, but

wild plant food staples were still a part

of the diet (Moore et al., 2000). By 9,000

ya, sedentary villagers across a broad

arc from the Red Sea to western Iran—

also known as the Fertile Crescent (see

Fig. 14-10)—engaged in wheat and barley

agriculture and sheep and goat herding.

As demonstrated at many sites in this

region, Neolithic families lived in adjacent multiroom rectangular houses, in

contrast to the compounds of individual

small, round shelters commonly built by

Epipaleolithic collectors as well as the

early Jericho settlers.

Africa

Tracing Africa’s Neolithic past is challenging, considering the continent’s vast

size, its varied climates and vegetation

zones, and the extent to which many

of its regions are still archaeologically

unknown. What’s more, because many

tropical foods lack woody stems or durable seeds, plant macrofossils often are

poorly represented at African archaeological sites. Recent studies based on the

analysis of plant microfossils and genetic

patterns are helping to construct fresh

perspectives on the origins, development, and impacts of plant and animal

domestication throughout the African

continent.

Northern Africa

Archaeologists working in the Nile Valley have found sickles

and milling stones relating to early

wild grain harvesting (Wendorf and

Schild, 1989). Qadan culture sites near

present-day Aswan (Fig. 14-11) probably

typified the Epipaleolithic food collectors who occupied the valley around

8,000 ya (Hoffman, 1991). Qadan people

employed spears or nets for taking large

Nile perch and catfish. Along the riverbanks they hunted wildfowl and gathered wild produce, processing starchy

aquatic tubers on their milling stones.

They also stalked the adjacent grasslands for gazelle and other game and

may have begun the process of domesticating the local wild cattle (Wendorf and

Schild, 1994; but see Gifford-Gonzalez

and Hanotte, 2011, p. 6). Considering the

wealth of naturally occurring resources

along the Nile, this foraging and collecting way of life might have continued

indefinitely. So why did farming develop

there at all?

Part of the answer rests with shifting rainfall patterns across North Africa

since the Late Pleistocene. Long-term

cycles brought increased precipitation,

which broadened the Nile and its valley and gave the river a predictable seasonal rhythm. Rains falling on its tropical headwaters, thousands of miles to the

south, caused the river to overflow its

downstream channels by late summer,

flooding the low-lying basins of northern Egypt for about three months of the

year. Although desiccation followed, the

flood-deposited silt grew lush with wild

grasses through the following season.

Periodically, however, extended drought

episodes intervened to narrow the river’s

life-giving flow.

West of the Nile, the fragile arid environment of the Sahara was particularly

susceptible to these fluctuations. It was

always marginal for humans, and until

about 11,000 ya, it was uninviting even

to hunter-gatherers. Then a period of

increased rainfall created shallow lakes

and streams that nurtured the Saharan

grasslands, attracting game animals and

humans. Around 7,000 ya, people in the

Sahara devised a strategy of nomadic

pastoralism, allowing their herds of

sheep, goats, and possibly cattle to act as

ecological intermediaries by converting

tough grasses to meat and by-products

useful to humans (Wendorf and Schild,

1994). Soon after, around 6,000 ya, further deterioration of the region’s climate—and possibly overgrazing—

forced the herders and their animals to

seek greener pastures closer to the Nile

and also to the south beyond the Sahara

(Williams, 1984; A. Smith, 1992; Kuper

and Kröpelin, 2006).

As drought parched the adjacent

areas, the Nile Valley attracted more settlers. Wild resources were then insufficient to feed the growing sedentary

population, and even the local domesticates that had been casually cultivated

on the floodplain gave way to more productive cereals—the domesticated barley

and wheat that had been brought under

human control elsewhere by people like

the Natufians.

Farmers gradually made the river’s

rhythm their own. Communities of

reed-mat or mud-brick houses appeared

across the Nile delta and along its banks.

Basket-lined storage pits or granaries,

milling stones, and sickles indicate a

heavy reliance on grain. Pottery vessels of river clay, linen woven from flax

fibers, flint tools, and occasional hammered copper items were produced

locally. These ordinary Neolithic beginnings laid the foundation for the remarkable Egyptian civilization, which we’ll

explore in Chapter 15.

Sub-Saharan Africa

In West Africa

along the southern edge of the Sahara,

where little is currently known about the

early Holocene human presence, the pattern appears similar to that described for

the Sahara. At Ounjougou, in the Dogon

Plateau region of central Mali, erosional

gullies exposed a long sequence of

Pleistocene and early Holocene sites

(Huysecom et al., 2004). Excavations

reveal that hunter-gatherers lived in this region from the earliest Holocene,

around 12,000–11,000 ya. By 10,000–9,000

ya, descendants of these groups were

harvesting wild cereal grasses and making and using ceramics.

The major Near Eastern cereal crops

did not grow well in sub-Saharan Africa

or in its tropical regions. Between 5,000

and 3,000 ya, African farmers developed comparable domesticates from

local cereal grasses, including varieties of millet and sorghum (Fig. 14-12;

Smith, 1999). Pearl millet, an important food grain in parts of Africa and

India, was domesticated in Mali around

4,500 ya and was soon found across

sub-Saharan Africa. Manning and colleagues (2011) speculate that pearl millet may have spread rapidly because it

could grow even where there wasn’t

much water and it could be easily cultivated by mobile pastoralists. African

crops such as pearl millet, finger millet,

and sorghum found their way across the

Arabian Sea to South Asia more than

3,000 ya (Fuller et al., 2011), where they

are still raised today.

Hunters and gatherers in other parts

of Africa also experimented with local

cultivars. For example, mobile foragers and semisedentary fishers of tropical Africa practiced yam horticulture in

clearings and along riverbanks by 7,000–

6,000 ya (Ehret, 1984; Arnau et al., 2010).

With digging sticks, they pried out the

starchy tubers and carried them away

for cooking. As an added bonus, the

people discovered that if they pressed

the leafy tops or cuttings of the largest roots into the soil at the edge of the

camp clearing, the yams would regenerate into an informal garden. The domestication process for the yam, a tuber that

propagates vegetatively (for example, by

cuttings rather than by seeds), may have

taken far longer, possibly millennia, than

the domestication of cereal grains

such as wheat.

More dramatic shifts in sub-Saharan

subsistence followed the introduction

and spread of several Neolithic domesticated plants and animals from South

and Southeast Asia across the Indian

Ocean. Among these economically

important plants, all of which spread

quickly throughout tropical Africa,

were bananas (or plantains) and two

root crops, taro and the greater yam

(Fuller et al., 2011, p. 550). The banana,

a food crop that is now of global importance, arrived in Africa from Southeast

Asia, possibly by 2,500 ya. Based on linguistic evidence, Blench (2009) argues

that plantains, taro, and the greater

yams were part of the Southeast Asian

“crop package,” which reached the

shores of Africa and quickly spread

throughout the interior.

Outside the Nile Valley, cattle herding took priority over farming in much

of East Africa, where conditions were

generally not suitable for cultivation.

Taurine cattle (Bos taurus) were first

domesticated in the Near East more

than 9,000 ya and by 8,000 ya could be

found on the savannas that then existed

in parts of the Sahara (Gifford-Gonzalez

and Hanotte, 2011). Genetic evidence also suggests a possible independent domestication of African taurine cattle (Bradley

and Magee, 2006). East African rock

paintings show that hump-backed zebu

(or indicine) cattle (Bos indicus), a South

Asian domesticate, reached Africa by

2,500 ya. The resulting African taurine-zebu hybrid played a major role in the

success of East African cattle pastoralists

(Fuller et al., 2011).

Bantu-speaking peoples, native to

west-central Africa, relied on these and

other domesticated plants and animals

to support their rapid expansion through

central and southern Africa (Phillipson,

1984). Driving herds of domestic goats

and cattle and acquiring the technology

of ironworking as they moved southeastward through central Africa (Van

Noten and Raymaekers, 1987), the Bantu

easily overwhelmed most hunting and

gathering groups. The conventional

view is that with iron tools and weapons, they carved out gardens and maintained large semipermanent villages,

and today, their numerous descendants

live in eastern, southern, and southwestern Africa (see Fig. 14-11). There’s at

least some evidence that food-producing

methods spread into parts of southern

Africa before the Bantu. The bones of

domesticated sheep found in Late Stone

Age sites in South Africa may not be the

result of the spread of pastoralists, as

once widely believed, but the remains of

the camps of Bushmen (also called the

San), whom Karim Sadr (2003) describes

as “hunters-with-sheep.”

Asia

Several centers of domestication in

southern and eastern Asia gave rise

to separate Neolithic traditions based

on the propagation of productive local

plant and animal species. The exploitation of these resources spread widely

and heralded further economic and

social changes associated with the rise of

early civilizations in these regions (see

Chapter 15).

South Asia

Excavations at Mehrgarh,

in central Pakistan, have illuminated

Neolithic beginnings on the Indian

subcontinent (Jarrige and Meadow,

1980; Fuller, 2006). Located at the edge

of a high plain west of the broad Indus

Valley (Fig. 14-13), the site’s

lower levels, dating between

8,000 and 6,000 ya, reveal the

trend toward dietary specialization that accompanied the

domestication of local plant and

animal species. Early on, the

people harvested both wild and

domesticated varieties of barley

and wheat, among other native

plants. Mehrgarh’s archaeological deposits also include bones

of many local herbivores: water

buffalo, gazelle, swamp deer,

goats, sheep, pigs, cattle, and

even elephants. By 6,000 ya, the

cultivated cereals prevailed,

along with just three animal

species—domestic sheep,

goats, and cattle. Researchers

believe that this early Neolithic

phase at Mehrgarh represents

a transition from seminomadic

herding to a more sedentary existence

that became the basis for later urban

development in the Indus Valley. Other

planned settlements boasting multiroom

mud-brick dwellings and granaries

soon appeared in the region, supported

by a productive agriculture and bustling trade in copper, turquoise, shells,

and cotton.

Rice is the staple crop of much of

South and East Asia. The earliest evidence of rice cultivation in India is

reported from the Lahuradewa site and

adjacent lake deposits in the Ganges

Valley, where cultivated rice phytoliths

and husks have been found in contexts

dated to around 8,360 ya (Saxena et al.,

2006). Domesticated rice spread from the

Ganges into South India after 3,000 ya,

possibly after the emergence of irrigation systems such as paddy-field cultivation (Fuller and Qin, 2009). The relationship between early South Asian

domesticated rice and that which was

domesticated by Chinese farmers in

the lower Yangtze Valley (see next section) remains unclear and is an active area

of research.

Archaeologists know much less

about the origins of agriculture in South

India. Recent archaeobotanical analyses of samples taken from southern Neolithic, or Ash

Mound Tradition,

sites in Karnataka

and Andhra Pradesh

suggest that the earliest agriculture in

these regions dates

between 5,000 and

4,000 ya and was

based on several

native domesticated

species, principally

two pulses (horsegram and mung

bean) and two species of millet (browntop millet and bristly

foxtail grass), along

with nonnative crops

including wheat and

barley (Fuller et al.,

2004). The high frequencies of native

domesticates in these

samples lend support

to Vavilov’s (1992)

identification of India as a possible independent center of domestication.

China

By 10,000 ya, hunter-gatherers

and East Asia’s earliest farmers were

already cultivating a crop in the Yellow

River basin that became a staple food

in the Neolithic villages and towns

of northern China. It may surprise

you to know that this crop was millet, not rice. The earliest firm evidence

of millet cultivation comes from the

early Neolithic site of Cishan, in Hebei

Province (Fig. 14-14), which has yielded

caches of common (or broomcorn) millet

in storage pits that date between 10,300

and 8,700 ya (Lu et al., 2009). Cishan was

part of a regional development of millet

farming that began with hunter-gatherer

cultivation in several places across

northern China. The deep stratigraphic

sequence at the Dadiwan site in Gansu

Province (Fig. 14-14) shows that the origins of millet farming can be traced to

the broad-spectrum resource strategy

of Late Pleistocene hunter-gatherers

in northern China (Barton et al., 2009;

Bettinger et al., 2010).

During the Yangshao period (about

7,000–5,000 ya), millet was a staple of

both humans and their domesticated